Adaptive Conformal Prediction for Improving Factuality of Generations by Large Language Models

arXiv cs.CL / 4/16/2026

💬 Opinion / Ideas & Deep Analysis / Models & Research

Key Points

- The paper addresses a key limitation of current conformal prediction methods for LLM factuality: they are often not prompt-adaptive, so their calibration does not reflect how uncertainty varies from one input to another.

- It proposes an adaptive conformal prediction framework that extends conformal score transformation for LLMs, enabling prompt-dependent calibration while preserving marginal coverage guarantees.

- The method improves conditional coverage, particularly for long-form generation and multiple-choice question answering, where factuality risk varies with the prompt.

- It supports selective prediction by filtering unreliable claims or answer choices before downstream use.

- Experiments on multiple white-box LLMs and domains show significant gains over existing baselines in conditional coverage metrics.
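
To make the claim-filtering idea concrete, here is a minimal sketch of plain split conformal calibration applied to claim filtering. It is the non-adaptive baseline that the points above describe as a limitation, not the paper's adaptive method, whose score transformation is not detailed in this summary. The function names, the `(score, is_true)` input format, and the choice of per-prompt score (the highest confidence given to any false claim) are all assumptions made for illustration.

```python
import math


def calibrate_threshold(calibration_sets, alpha):
    """Pick one global confidence threshold from labeled calibration prompts.

    calibration_sets: one list per prompt of (score, is_true) pairs
    (hypothetical format). alpha: target error rate, e.g. 0.1.
    """
    # Per-prompt nonconformity score: the highest confidence assigned to a
    # false claim. A threshold at or above it removes every false claim.
    # Prompts with no false claims contribute -inf.
    scores = sorted(
        max((s for s, ok in claims if not ok), default=float("-inf"))
        for claims in calibration_sets
    )
    n = len(scores)
    # Standard split conformal rank with the finite-sample correction.
    k = math.ceil((n + 1) * (1 - alpha))
    if k > n:
        # Too few calibration prompts for this alpha: keep nothing.
        return float("inf")
    return scores[k - 1]


def filter_claims(claims, threshold):
    """Keep only the claims whose confidence strictly exceeds the threshold."""
    return [text for text, score in claims if score > threshold]


# Toy usage with made-up scores: 7 calibration prompts, alpha = 0.25.
calibration = [
    [(0.95, True), (s, False)] for s in (0.4, 0.1, 0.9, 0.3, 0.6, 0.2, 0.5)
]
threshold = calibrate_threshold(calibration, alpha=0.25)
kept = filter_claims([("A", 0.95), ("B", 0.6), ("C", 0.3)], threshold)
```

Because the threshold is the same for every prompt, the resulting guarantee is only marginal: it holds on average over prompts, and easy and hard prompts are treated identically. A prompt-adaptive method would replace the single global threshold with one that depends on the input.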

Related Articles

The AI Hype Cycle Is Lying to You About What to Learn

Dev.to

Big Tech firms are accelerating AI investments and integration, while regulators and companies focus on safety and responsible adoption.

Dev.to

Inside NVIDIA’s $2B Marvell Deal: What NVLink Fusion Means for AI Ethernet Fabrics

Dev.to

Automating Your Literature Review: From PDFs to Data with AI

Dev.to

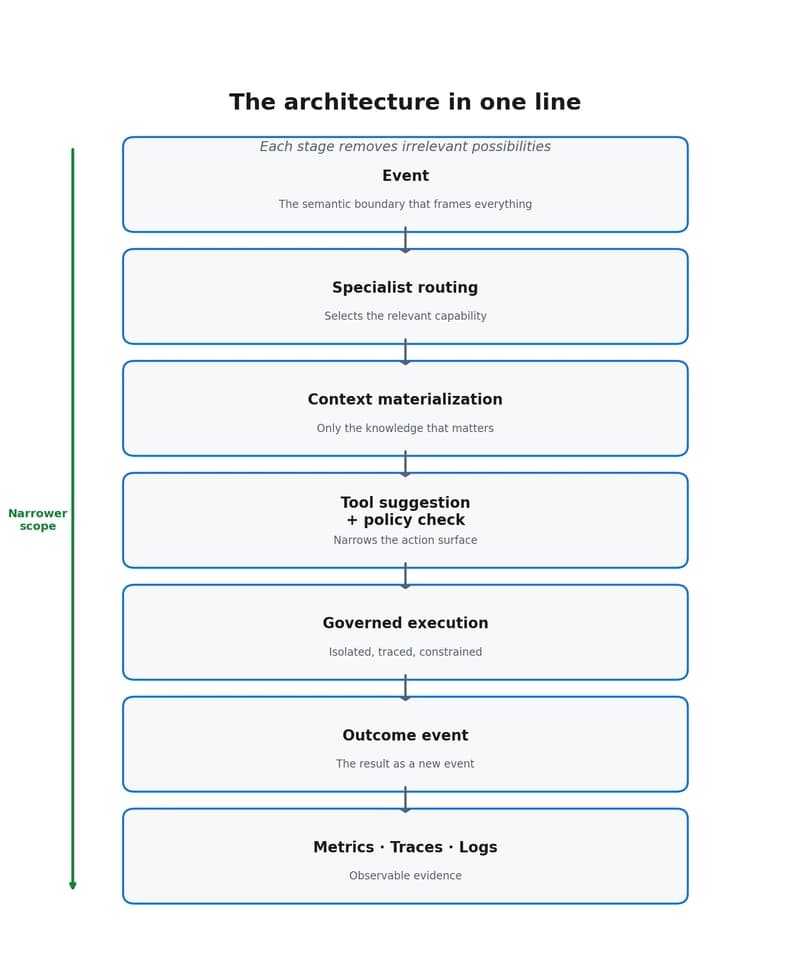

Why event-driven agents reduce scope, cost, and decision dispersion

Dev.to