Learning Equivariant Neural-Augmented Object Dynamics From Few Interactions

arXiv cs.RO / 5/5/2026


Key Points

- The paper addresses the challenge of learning data-efficient object dynamics for robotic manipulation, especially for deformable objects, where purely data-driven graph models struggle with long-horizon physical feasibility.

- It proposes PIEGraph, a hybrid framework that combines an analytical, physically informed spring–mass particle model with an equivariant graph neural network to learn motion from limited real-world interactions.

- The approach introduces a novel action representation that leverages symmetries in particle interactions to better guide the analytical component and improve generalization.

- Experiments in simulation and on real robot hardware, covering reorientation and repositioning tasks with ropes, cloth, stuffed animals, and rigid objects, show more accurate dynamics prediction and better planning performance than existing baselines.

- Overall, PIEGraph demonstrates that integrating physics constraints with equivariant GNN learning can reduce interaction data needs while maintaining reliable manipulation planning for long-horizon tasks.
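
To make the hybrid idea in the key points concrete, here is a minimal sketch of one simulation step that pairs an analytical spring–mass update with a rotation-equivariant correction term. It is not the PIEGraph implementation: the function names, the semi-implicit Euler integrator, the parameter values, and the hand-written `equivariant_residual` (a stand-in for a learned equivariant graph network) are all assumptions made for illustration.

```python
import numpy as np

def spring_forces(pos, edges, rest_len, stiffness):
    """Hooke's-law forces on each particle from springs along `edges`."""
    i, j = edges[:, 0], edges[:, 1]
    delta = pos[j] - pos[i]                                # (E, 3) relative vectors
    dist = np.linalg.norm(delta, axis=1, keepdims=True)    # (E, 1) spring lengths
    direction = delta / np.maximum(dist, 1e-9)
    # A stretched spring pulls particle i toward j and j toward i.
    f = stiffness * (dist - rest_len[:, None]) * direction
    forces = np.zeros_like(pos)
    np.add.at(forces, i, f)
    np.add.at(forces, j, -f)
    return forces

def equivariant_residual(pos, edges, weight):
    """Hypothetical stand-in for a learned correction.

    Each message is a relative vector scaled by a function of distance only,
    so rotating the input rotates the output by the same rotation.
    """
    i, j = edges[:, 0], edges[:, 1]
    delta = pos[j] - pos[i]
    dist = np.linalg.norm(delta, axis=1, keepdims=True)
    msg = weight * np.tanh(dist) * delta
    out = np.zeros_like(pos)
    np.add.at(out, i, msg)
    np.add.at(out, j, -msg)
    return out

def hybrid_step(pos, vel, edges, rest_len,
                stiffness=50.0, mass=1.0, dt=0.01, weight=0.1):
    """One semi-implicit Euler step of the spring-mass model plus the residual."""
    acc = spring_forces(pos, edges, rest_len, stiffness) / mass
    vel_next = vel + dt * acc
    pos_next = pos + dt * vel_next
    # Assumed form of the correction: a small equivariant position offset.
    pos_next = pos_next + dt * equivariant_residual(pos, edges, weight)
    return pos_next, vel_next
```

Because both terms depend only on relative vectors and distances, rotating the input particles and velocities rotates the predicted next state by the same rotation. This sketch omits gravity and contact, which would restrict that symmetry to rotations about the vertical axis.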

Related Articles

Singapore's Fraud Frontier: Why AI Scam Detection Demands Regulatory Precision

Dev.to

From OOM to 262K Context: Running Qwen3-Coder 30B Locally on 8GB VRAM

Dev.to

Nano Banana Pro vs DALL-E 3 vs Midjourney: A Practical Comparison From Someone Who Actually Uses All Three

Dev.to

LLMs edited 86 human essays toward a semantic cluster not occupied by any human writer [D]

Reddit r/MachineLearning

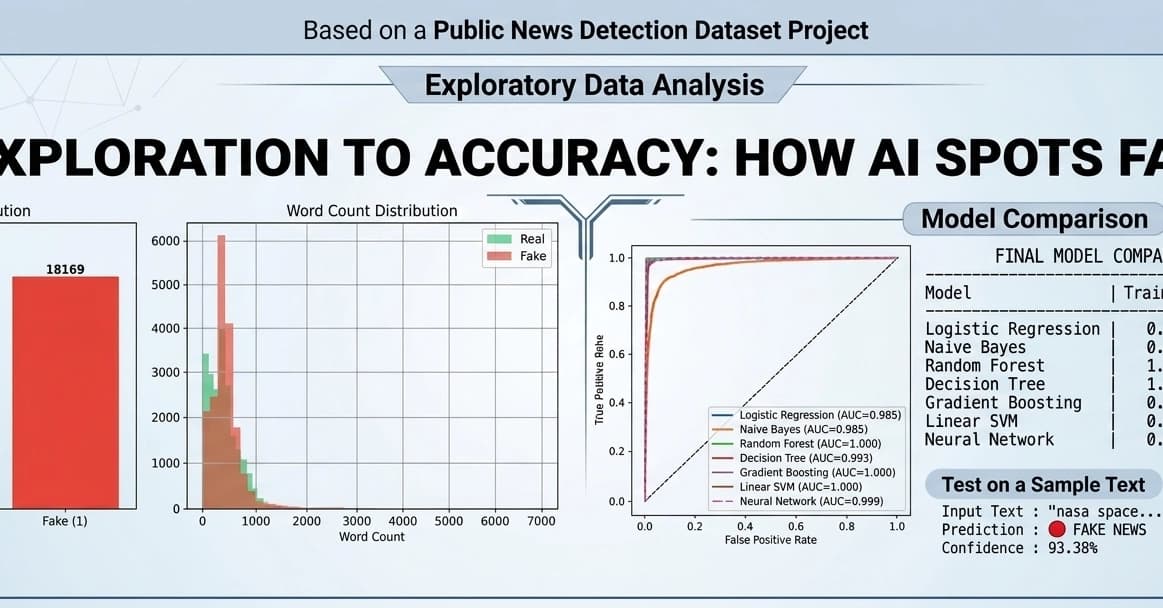

Fake News Detection using Machine Learning & NLP!

Dev.to