Robustness Analysis of POMDP Policies to Observation Perturbations

arXiv cs.AI / 4/25/2026

Key Points

- The paper examines how POMDP policies degrade when the deployed observation model deviates from the nominal model due to real-world effects like calibration drift and sensor degradation.

- It formalizes a “Policy Observation Robustness Problem”: find the maximum tolerable deviation of the observation model for which the policy's value is still guaranteed to stay above a chosen threshold.

- Two robustness variants are analyzed: a sticky variant, in which deviations depend on state and actions, and a non-sticky variant, in which deviations can be history-dependent. The inner optimization is shown to be monotonic in the deviation size.

- The authors develop efficient solution methods by casting the problem as bi-level optimization and using root-finding in the outer loop; for non-sticky cases with finite-state controllers (FSCs), they show it suffices to consider observations tied to FSC nodes rather than full histories.

- They introduce “Robust Interval Search” and prove its soundness and convergence for both variants. Its time complexity is polynomial in the non-sticky variant and at most exponential in the sticky variant. Experiments scale to POMDPs with tens of thousands of states and include robotics and operations-research case studies.
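
To make the outer root-finding step concrete, here is a minimal sketch, assuming the inner problem returns a worst-case policy value that never increases as the deviation budget grows. It is plain bisection, not the paper's Robust Interval Search, and every name in it (`worst_case_value`, `max_tolerable_deviation`, the toy rewards and the 0.85 nominal accuracy) is hypothetical and chosen only for illustration.

```python
from typing import Callable


def worst_case_value(delta: float) -> float:
    """Toy stand-in for the inner optimization (hypothetical).

    One-step task: the policy acts on a binary observation that is correct
    with nominal probability 0.85. An adversary may lower that accuracy by
    up to `delta`, so the worst case uses the full budget.
    """
    accuracy = 0.85 - delta
    reward_correct, reward_wrong = 10.0, -10.0
    return accuracy * reward_correct + (1.0 - accuracy) * reward_wrong


def max_tolerable_deviation(
    inner: Callable[[float], float],
    threshold: float,
    delta_max: float,
    tol: float = 1e-6,
) -> float:
    """Bisect for the largest delta in [0, delta_max] with inner(delta) >= threshold.

    Assumes `inner` is monotonically non-increasing in delta.
    """
    if inner(0.0) < threshold:
        raise ValueError("the nominal model already violates the threshold")
    if inner(delta_max) >= threshold:
        return delta_max  # the whole range is tolerable

    lo, hi = 0.0, delta_max  # invariant: lo is feasible, hi is infeasible
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if inner(mid) >= threshold:
            lo = mid
        else:
            hi = mid
    return lo  # feasible by the invariant, within tol of the true maximum


if __name__ == "__main__":
    delta_star = max_tolerable_deviation(worst_case_value, threshold=5.0, delta_max=0.85)
    print(f"largest tolerable deviation: {delta_star:.4f}")
```

With these toy numbers the worst-case value works out to 7 − 20δ, so the threshold of 5 is crossed at δ = 0.1 and the search closes in on that point from the feasible side. In a real setting the inner call would be a full worst-case policy evaluation under the chosen deviation model, which is where the sticky and non-sticky variants differ in cost.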

Related Articles

Underwhelming or underrated? DeepSeek V4 shows “impressive” gains

SCMP Tech

Debugging AI Agents in Production: ADK+Gemini Cloud Assist | Google Cloud NEXT '26

Dev.to

🤖 Learn Harness Engineering by Building a Mini Openclaw 🦞

Dev.to

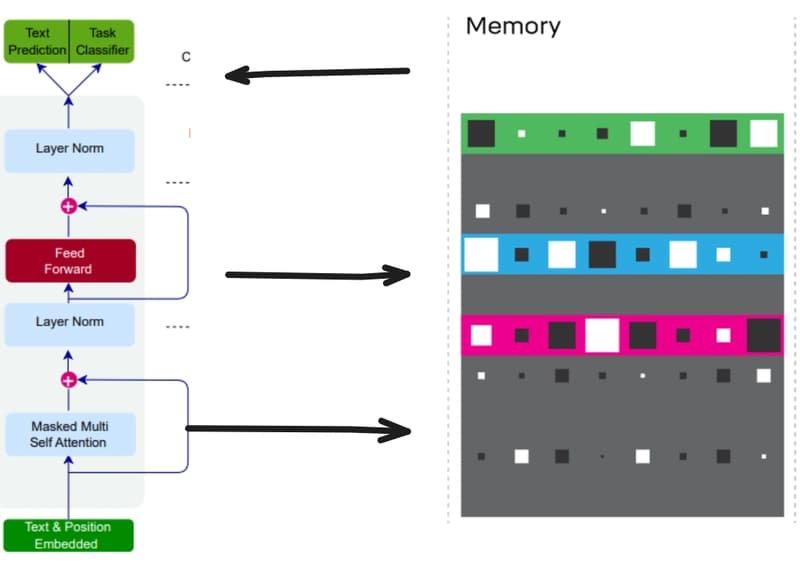

Teaching Small Language Models to Remember: Giving LLMs a Notebook with Differentiable Neural Computers

Dev.to

![Training LFM-2.5-350M on Reddit post summarization with GRPO on my 3x Mac Minis — final evals and t-test evals are here [P]](/_next/image?url=https%3A%2F%2Fpreview.redd.it%2Fzynqkm0osaxg1.png%3Fwidth%3D140%26height%3D76%26auto%3Dwebp%26s%3De827ef782e46b56a11f263b7689811da72904ba9&w=3840&q=75)

Training LFM-2.5-350M on Reddit post summarization with GRPO on my 3x Mac Minis — final evals and t-test evals are here [P]

Reddit r/MachineLearning