Ken Liu on AI and Freedom

This show was such a treat

Ken Liu graces ChinaTalk with his presence. He is the author of the Dandelion Dynasty silkpunk fantasy series and a brilliant short fiction writer; one of his stories was recently adapted into Sam Altman’s favorite show, Pantheon. We all know his translation work on the first and third volumes of the Three-Body Problem trilogy, but even better was his absolutely brilliant translation of and commentary on the Dao De Jing. As much as I hoped that project would get him fully on the classical Chinese translation train, he followed it up by heading in a very different direction: a techno-AI thriller, All That We See or Seem, released late last year. Irene Zhang of ChinaTalk joins us to co-host.

In a wide-ranging conversation, Ken Liu argues that:

Technology is the most human thing we do — humans have always externalized our minds into the world and then allowed those creations to reshape who we are.

AI “slop” won’t stop humans from making art that matters, and the real distinction isn’t quality versus slop, but between desire-fulfilling machines and artists who draw from the collective unconscious.

The deeper danger of AI isn’t machines replacing humans, but systems that train humans to behave like machines.

Science fiction isn’t prophecy, but mythology — and ideologies are just mythology’s cheaper, hack cousins. Orwell, Shelley, Tolkien, and Le Guin endure not because they predicted the future, but because they gave us metaphors powerful enough to think with across generations.

Large language models are intelligent, but can’t be wise. Drawing on Laozi and Zhuangzi, Ken explains why everything that truly matters lies beyond language.

Listen now on your favorite podcast app.

Technology as Human Expression

Jordan Schneider: We’re living in the age of Claude Code, and I want to start with a passage you wrote. Why don’t you set it up and read this vision of future coding and writing?

Ken Liu: Let me start by saying what the book is actually about. All That We See or Seem is a techno-thriller in the sense that none of the technology mentioned is really speculative — it’s all either already here or very possible, just needing to be scaled up slightly.

Julia Z is a hacker, a hero in the mold of Clarice Starling or Jane Whitefield — someone with a very strong moral compass and a very dark past. She’s trying to escape that past, but events keep pulling her back in, and she realizes she cannot overcome external threats unless she confronts the demons within her. In this novel, she has specialized skills with AI and robotics and is tasked with finding an artist who has disappeared — an artist who works with AI to help large audiences dream together.

The passages I’m going to read are reflections on Julia in the Age of AI. Here’s the first, which is about what it’s like to be a programmer — something very close to my heart:

The hardest part had been the programming. Writing code without the help of Talos, or even a lowly codemonkey or datajinn, was not something Julia had much experience with. In the same way that few contemporary writers could compose even a five-hundred-word essay without the help of AI as research assistant, fact-checker, dictionary, thesaurus, grammarian, and, in extreme cases, amanuensis, very few contemporary programmers could create a functioning nontrivial application without the help of codedaemons, bug-genies, patchsprites, scriptpixies, and a whole fairyland of similar artificial intelligences.

Homo sapiens had always externalized their minds into the world, oozing books, drawings, plans, recordings, the same way honeybees made their minds visible in the form of wax comb and sweet honey, but the trend had never gone as far as now, when most of one’s knowledge consisted of knowing where to look things up and how to give an AI the best prompts, and more of one’s mind existed outside the skull, infused into fiscjinns and memoelves and egolets, spread among artificial assistants and helpers and aide-mémoire, imprinted in cogitrons and electrons and logons, than remained inside the squishy gray matter inside the skull.

Jordan Schneider: Let’s start with the idea of choosing a techno-thriller as a genre to explore something every white-collar worker is grappling with today.

Ken Liu: Genre labels are largely irrelevant to me. All of my fiction — whatever the marketing genre — is fundamentally technological. Whether it’s the Dandelion Dynasty, my short fiction, or the Julia Z series, they’re all stories about what it means for humans to express parts of themselves through technology.

If there’s something unique about humans compared to other species, it’s fundamentally our technological nature. This is important. A lot of things described as “sci-fi” aren’t really sci-fi at all — they have very little to do with science. They’re technological stories. “Techfi” is far more interesting to me. Technology and science are completely different disciplines, and the vast majority of so-called sci-fi is really techfi, because it’s really about what it means for humans to express themselves via their creations.

We are the only species who express who we are through the things we make. We imagine things that did not exist in the universe, then actually bring them into being — concretely substantiating our mental constructs in the world. And these technological manifestations, this stuff we ooze out, in turn changes who we are. We converse with, interact with, and co-evolve with our own creations. No other species does this.

One of the great philosophical debates in our tradition is whether humans are more human without technology or with it. This debate goes back to Plato, to Zhuangzi, to all the great philosophers. What is language? The entire skeptical interrogation of language itself is really this debate about human nature.

In the contemporary world, we often default to the position that technology is somehow external to who we are — something we should be wary of. To me, this is nonsensical. Human technology is a manifestation of human nature. It’s in fact the most human thing we make. You cannot understand human nature without understanding human technology — it’s literally a tangible substantiation of what is inside our minds. To understand what human nature is, we have to interrogate human technology and truly understand how we co-evolve with our own creations. That’s what the Julia Z series is really about.

Irene Zhang: You use the metaphor of the jinn to describe what the marketing world might call AI agents. That obviously comes from Arabic mythology and Islam. Why did you choose that metaphor, and how do you think about the metaphors we use to understand AI?

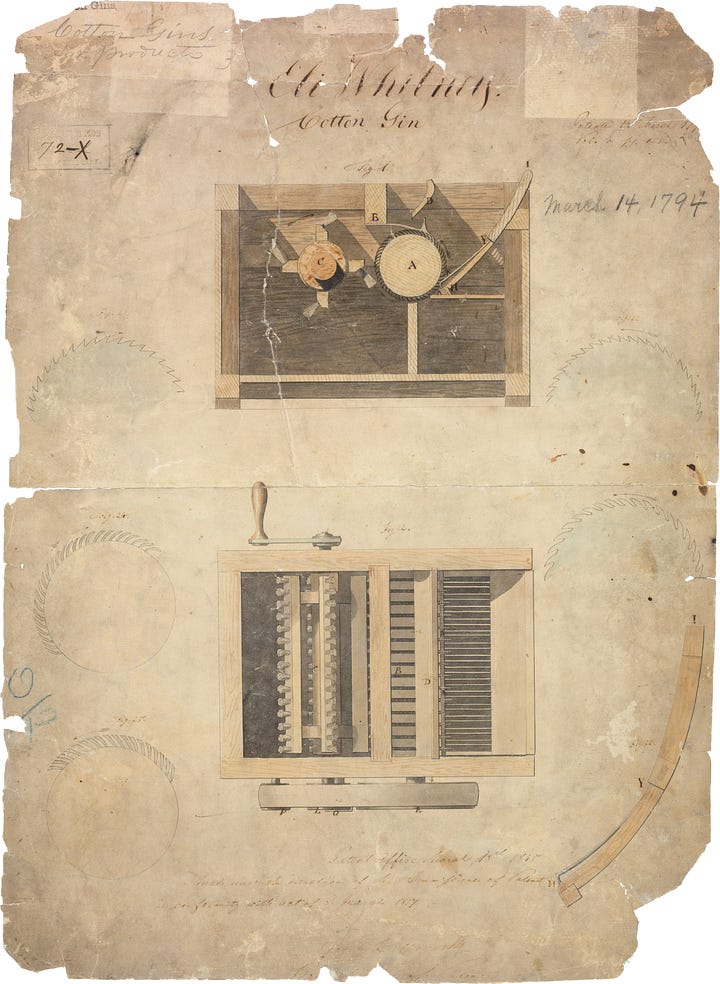

Ken Liu: The immediate answer is that I was interested in the word “cotton gin” — it’s short for “cotton engine,” which is just the way we play with language. Why not take that “gin” and turn it into a different kind of “jinn”?

If you look at how technology is expressed through language, it’s very mythological. Think about how we name our technology. Why did the U.S. decide to name its space programs after Greek and Roman gods? There’s a mythological component to the way technology is manifested because technology is not independent of who we are — technology is how we dream.

The reason technology is so expressive of human nature is that it’s a manifestation of our deepest desires and dreams. We’ve always used mythology to express and understand technology. Look at how technology companies talk about and market their creations — there’s always a mythological component. If I didn’t name them jinns, that would be weird. It has to be a mythological name, because that’s how these companies think.

Jordan Schneider: Is this time different? You’ve been arguing that it isn’t, but in that passage you’re saying that externalizing your brain to the extent your characters do, or to the extent we’re doing today, is something unique in human history.

Ken Liu: Externalizing the brain into our creations is not unique. Every child who has learned to read has experienced that moment of communing with mental patterns from creatures long ago. When you read Plato’s dialogues or Zhuangzi’s stories today, you’re communing with minds from thousands of years ago. That’s very strange if you actually think about it — you’re engaging in something no other creature can do. We’re communing with mental constructs from the past.

Consider what happens when you do arithmetic — long division, an integral, working out a tensor. You’re using pen and paper to externalize your brain. Your cognitive function is literally externalized on the paper. It’s a very strange thing. Your brain is out there, you’re interacting with it using your body, then getting it back. No other creature does this.

Is AI significantly different from that? I don’t think so. The best way to understand large language models is to go back and read the structuralists from the 1960s. Roland Barthes said that in a deeply literary society, burdened or blessed with millennia of writing and millions upon millions of authors, we are surrounded by words, by their minds. A modern writer, a “scriptor,” is not an author who creates out of nothing, but someone basically babbling in the presence of a complete corpus of past writings. You are just playing with words, reference upon reference, allusion upon allusion. You’re acting as a channel, a conduit to this playful field of past writing as you babble more writings.

Barthes wrote this as a way of talking about the death of the author, but reading it now in the age of large language models, you realize that’s exactly what he was describing. The large language model is a substantiation of that imagined dictionary of all writings. It’s language coming to life. It’s you interrogating the entire corpus of what humans have written — this “pluribus,” this multi-mind, that you’re engaged with.

That’s my argument for how AI is not really different from how we’ve always dealt with technology.

Now, there are some interesting differences. For the first time in history, we’re confronted with the idea that intelligence and consciousness are not the same thing.

If you examine older sci-fi literature, there’s a huge fundamental assumption that something intelligent will necessarily be conscious — that the more intelligent something is, the more it necessarily comes with intention, will, desire, and the sense of being something, of some mind behind the intelligent acts.

What we’re seeing now is that there’s no doubt these models are intelligent. A lot of the popular discourse — “it’s just a very powerful autocomplete” — is very silly. That description is technically true, but it means nothing. You might as well say humans are nothing more than compilations of statistical likelihoods. Yes, that’s technically true, but so what?

The real issue is this — if something can write essays, pass the bar exam, and get a perfect score on the SATs, to say it’s not intelligent is a nonsensical declaration. It’s clearly intelligent, but it’s not conscious. I don’t think many of us would argue that LLMs are conscious.

That is very strange. The fact that we can have intelligence completely divorced from consciousness, from will, from intention, from subjectivity — that is weird. We’re still coming to terms with it. We’re trying to understand why we value subjectivity so much, yet don’t seem to think intelligence by itself is all that valuable anymore. Many of us now seem to be leaning in that direction.

That’s honestly why a show like Pluribus on Apple TV is so interesting — it’s mythologically engaged with this particular question: what matters more, subjectivity or intelligence?

The Age of Slop

Jordan Schneider: One theme you pick up on is this idea that yes, there’s a future of AI slop that your world is swimming in, but there’s still something where the audience wants to meet up in person and have a connection to a particular human who lives and breathes and bleeds. It seems your contention is that there’s something about having a human behind it all that will remain fundamentally appealing, however good these models get.

Ken Liu: I want to start by saying I don’t necessarily have a specific argument one way or the other in the book. My fiction gets published and people attribute certain points of view to it; sometimes readers attribute polar opposite views. That’s actually a sign I’ve succeeded, because I deliberately write fiction with very little messaging in the sense of propaganda. Fiction written as propaganda isn’t particularly interesting to me. Ayn Rand very famously wrote propaganda that is very popular, but I don’t find that kind of fiction interesting. All of my fiction consists of aesthetic works that can deliberately be read to support multiple contentions, because that’s how reality is. You can take reality and interpret it to suit different messages.

That said, the contemporary anxiety over AI slop is understandable, but it has to be contextualized historically. We are already living in a world of slop — not AI-generated, but mass-produced slop.

Take your mind back to before the invention of photography. You might see maybe a few hundred images in your entire life, every single one produced by hand by a real human being. Church stained glass windows. Famous paintings, if you were rich enough to travel. A few pictures you made yourself if you learned to draw. Pictures drawn by friends. Prints in books made by someone who had to translate an image into a printing plate by hand. A few hundred of these things in your lifetime.

After photography and photographic reproduction techniques, we entered what Walter Benjamin called the age of mechanical reproduction. We’re surrounded by images — hundreds of thousands in a single day. The vast majority is slop — clip art, images made by graphics programs, and reproductions from public domain stuff with a few manipulations.

My point is that this hasn’t destroyed art. It hasn’t made humans unable to appreciate art. In the age of AI slop, what makes you think we’ll stop producing actual art? We’re already living in an age of slop, utterly surrounded by it, and yet the proliferation of slop has allowed us to become even more artistically interesting and create more interesting human art. I don’t see that being different in the future. We know how to deal with slop. The age of mechanical reproduction is here, and the age of AI slop will not be any different. I don’t see the moral panic over it.

Now, that’s not the same as saying it won’t lead to the loss of livelihoods. The age of mechanical reproduction caused the loss of livelihoods for many artists, specifically engravers, the great artists who had to translate paintings and drawings into printing plates. Yes, they were displaced, and that was a difficult transition. We will face a difficult transition today too. But the idea that AI slop will destroy art is very flawed. Historically, that’s just not how any of this has ever worked.

What interests me more is what this technology can enable humans to do creatively. Historically, in every case where some technology displaced aspects of human craft, humans ultimately learned to practice craft with that technology.

Humans have practiced craft with the camera. When the camera was just “push a button and chemistry and physics make a picture,” it wasn’t interesting. But once humans learned to use the camera as an artistic tool, to tell stories with it, we ended up with cinema, with TikTok, with YouTube, with the vast explosion in video art, none of which would have been possible without the camera.

Something similar has to happen with AI. Today’s AI is in the stage where you give it a prompt and it generates something. This is very non-crafty — there’s no craft to it. But it won’t stay like this. Over time, artists will figure out — what are the affordances we need to actually use these models in interesting ways? How do you precisely position the generator within latent space? How do you precisely delineate the chain of inferences and associations inside the model’s weights to generate what you want? How do you precisely manipulate this model the way you can dial in camera settings, set up poses, and frame a shot?

When all these affordances are given to an artist who wants to work with AI as a tool, then and only then will we see interesting art being generated by humans. That’s my contention.

Jordan Schneider: In a recent Substack post, you said you spent much of December and January playing video games. Behind you, I see a PSP and a Game Boy Advance. In the book, you explored one future of artistic creativity — AI-enabled dream weaving. Where do you see the future of video games with all of this?

Ken Liu: One of the most contentious uses is AI-generated assets in games. I personally think this will eventually be normalized. If you want to call AI-generated material slop, that’s what it is — but we’re surrounded by slop, surrounded by mechanical reproduction and cheap art. That’s just how it is. Eventually, this will probably happen to video games too, in terms of asset generation.

That doesn’t mean human-crafted material will lose its appeal. Even in the age of mechanical reproduction, humans continue to be enthralled by the aura of the artist (perhaps much to Walter Benjamin’s disappointment), and I don’t see that changing in the age of AI slop either. The human aura will still be very appealing to many of us.

At the same time, one of the great things about AI-generated art, like mechanical reproduction, is democratization and the ability to generate certain kinds of art that human artists would never make.

Here’s a very interesting pattern — humans find playing with AI to make art for themselves very interesting, but we almost never find sharing this stuff with other people interesting, and other people don’t find it interesting either. You generate something using AI and it’s kind of interesting to you, but not necessarily to anyone else. There’s an intense personalization effect here worth following up on.

AI is really good at fulfilling your desires in a way that human artists never will and never can. Take a crude example. You might crave a particular kind of fiction or film — an adaptation of your favorite novel starring your favorite actors. In reality, that will never happen. Humans will not do that for you. But you can use AI to create it. AI is a desire-fulfilling machine, but it’s only able to do that for you, and only you would find it interesting. It’s not the kind of thing human artists would ever do.

The analogy is that mechanical reproductions can fulfill a niche humans never could or would. For the vast majority of history, it wasn’t possible for most people to get a good portrait done. You had to be very rich or famous, otherwise you relied on a friend or family member who could draw. That’s why we have that picture of Jane Austen done by her sister — it’s not a very good picture, but it’s the only one we have. Once the camera came along, middle-class families could have pictures done cheaply. Now everybody can take a selfie. We’re awash in slop selfies.

That’s what technology can do — allow you to get things humans never would provide. You can’t get portrait artists to paint most of us, but you can easily use a camera. If you want a particular kind of story, you’re not going to get human artists to write it for you. But you can get a machine to do it. This highly personalized, self-involved fiction — when people speak about AI boyfriends and companions, that’s what they’re talking about. Fiction co-written with an AI for themselves alone. That’s exactly why these things are appealing.

But that doesn’t mean people who love this will stop appreciating fiction written by humans that’s not meant to fulfill desires. Artists are not there to fulfill your desires. Artists are there to fulfill their own dreams. They go into the collective unconscious and are seized by some image or vision they have to bring out. That’s why artists create.

There’s a complementary role for AI versus human artists. Human artists will do what they’ve always done: dream and bring forth interesting dreams from the collective unconscious. AI will fulfill your individual desires. The two are complementary — not the same kind of thing, but they can coexist.

Everything Not Said

Irene Zhang: On companionship and desires, I wanted to ask about Talos, Julia’s AI assistant. Julia lives in a world where personal AIs are common, but you don’t portray that as companionship in the book. People still fall in love and have friends and family. How did you make those decisions in crafting Talos and the personal AI landscape?

Ken Liu: Talos is actually very different from any other personal AI in the book, and the distinction is important. The personal AIs that everybody else uses are essentially subscription services — what all the companies are trying to make. You subscribe to their cloud AI, it’s personalized to you, but the data is all with them. That’s what people are concerned about in terms of privacy.

Talos is different. Talos is not a subscription AI from some large company. Talos is something Julia builds herself, running on her own local hardware, entirely controlled by her. What Talos really is, in terms of how the book describes it, is an “egolet.”

What’s an egolet? It’s an AI representation of you. Let me tease this apart.

What I find deeply interesting about AI is that neural networks are essentially a camera for different things — not a camera for images, but a camera for decisions. For decision-making procedures, decision-making processes, for choices you’ve made in the past.

Take a concrete example — if a painter were to train an egolet (and companies are exploring this possibility), they would train a neural network not just on their finished paintings but on the entire process of creation. How do you decide to make this paint stroke and not that? How do you decide to cover up these strokes and not those? How do you decide to do this part first and that part last? The entire process of creating a painting or a book is where the interesting stuff is.

We’ve all had the experience where AI produces a painting “in the style of so-and-so,” and it looks superficially good until you examine it — there’s always a superficiality. Or there’s this popular application where you feed all the books by some author into a model and say, “Now you can talk to so-and-so.” You train an AI on all the dialogues and books by Plato and supposedly you can talk to Socrates about AI.

These are all terrible apps, and none of them ever feels convincing. People have done this to me — trained models on my interviews and asked me what I think. What I think is, “This is garbage. This sounds nothing like me.”

Here’s why: for everything I say, there are ten things I’ve decided not to say. If models are trained only on things I’ve published, the model will never know all the things I would never say. Models trained only on what has been said don’t know what was deliberately left unsaid. So they always generate garbage, saying things I never would have said.

The issue is that for these models to be a good representation of the person, they need insight into all the things you’ve decided not to say — everything behind the scenes. Published works and finished paintings are like the part of the iceberg above the water. The vast majority is below. Steve Jobs once said something like — this is a paraphrase — for everything you say yes to, there have to be at least ten things you say no to. It’s the part you say no to that matters.

An egolet, in my conception, is an AI capable of actually capturing the part where you say no — all the parts you’ve denied.

How many of us are comfortable giving that information to Anthropic, to Google, to OpenAI? The idea that you would reveal the parts you’ve kept hidden from the public — who’s going to do that? Nobody.

That’s why personal assistants in that form will never amount to anything. Personal assistants trained only on what you’ve let out will never amount to anything. The only way to produce real egolets — small egos, small copies of yourself, something trained on who you truly are — is if you have total control over the model. Total control of the training, total control of the hardware, total control of the data. Total sovereignty.

That’s what Talos is. Talos is totally controlled by Julia. Because she has complete control over Talos, Talos is very different for her. She explains in the book that talking to Talos is like talking to a version of herself, or different versions of herself in different periods of her life. She’s able to examine herself. Talos is the fulfillment of that oldest of philosophical desires — “know thyself.” By having an AI trained on yourself in this deep sense, you can reflect on yourself. Julia can examine who she is via Talos — to leverage herself, work with herself, and critique herself. That’s what makes this sort of thing actually interesting.

The Real Danger of AI

Irene Zhang: Without spoiling it, quite a bit of the plot centers on something that actually exists — scam call centers and human trafficking rings in the Golden Triangle, primarily in the Thailand-Burma border regions. How did you become interested in that, and what makes it important to you?

Ken Liu: Let me address that by explaining what I think the real danger of AI-generated slop actually is. I disagree with a lot of mainstream commentary on what the issue with deepfakes really is.

A lot of commentary focuses on the idea that we’re going to be manipulated by bots from foreign actors. The natural outcome is better ways of distinguishing organic accounts from bot-operated ones. But if we get there, the next logical step for actors who wish to weaponize commentary is to have humans do it, not bots. In an age where machine-generated slop is a big problem, there will necessarily be a premium placed on human-generated content. The next logical step is actors who enslave human content creators for that purpose. This seems quite plausible, and I’m sure it’s already being done somewhere.

But the issue is not quite that simple. It’s a fundamental misunderstanding of what the real problem of AI is. We often describe the problem as machines replacing humans, as though that’s the biggest issue. That is not the real danger. The real danger from AI is that humans will start treating other humans as machines. It’s the gradual mechanization and reduction of humans into components of a machine — that is the relentless pattern of modernity.

This has been going on forever. When the assembly line was invented, human workers were reduced to components of a massive production machine. Instead of exercising individual judgment and creativity, humans were put into positions where they exercise as little creativity as possible — repeating the same motions, specializing in doing the exact same thing over and over with as little variation as possible, becoming standardized components of a machine.

That production line model has persisted into the modern age. We constantly take away individual initiative and decision-making from workers. Call center employees are instructed to follow the script, not deviate, not exercise human empathy — to think of themselves as components of a machine, essentially language models. This is why call center workers are so easily replaced by AI: modernity has tried to reduce humans into robots so that real robots can take over from them very easily.

This is the real danger. Wherever humans retreat into an area of individual initiative and choice, capitalism pushes again and again to reduce them to components of a machine, to appropriate their creativity, to standardize their initiative for purposes of money and control and power.

In the book, without spoiling it, a large part of the plot involves exactly this kind of enslavement of humans into an economy that puts a premium on individual human creation. In the age of mass mechanical reproduction, bespoke human-made art commands a premium. In a future where AI-generated slop is everywhere, human-created content will again be given premium value. Social media companies will figure out ways to show they have real engagement instead of bots. When you have an internet that’s 99% bots talking to bots, the way to convince humans to engage is to promise them real humans.

But once you’ve gone down that route of putting a premium on human content, people will inevitably figure out ways to again reduce humans to machines and enslave humans for that purpose. This is the pattern we see over and over again.

Mythology vs. Ideology

Jordan Schneider: These books — sci-fi in general — are not predictions. They’re an expression of where we are today. Why is the idea that these books are predictions so seductive, and why does it make no sense?

Ken Liu: There’s a tendency in literature and the arts to figure out how we justify ourselves. Fundamentally, writers write because they’re having fun. The fact that we’re being paid for it is a little weird, so we have to figure out why. A common reaction is to view sci-fi as particularly relevant because it somehow predicts the future, helps us think about what’s likely, or warns us away from dystopias we might step into.

I don’t think this justification is plausible or even interesting, because sci-fi has a very bad track record of predicting anything. If sci-fi ever does predict anything, it’s more out of luck than anything else. The sci-fi works we hold up as really good predictors or evergreen classics earn that status because they get some very potent metaphor right, even though the details are completely wrong.

Take 1984. It’s a very good book and still extremely relevant decades after it was written. But the surveillance society we live in today is very different from the one envisioned. Big Brother in 1984 is a state-imposed surveillance system. That’s not the surveillance system we have today. Even in contemporary totalitarian societies, surveillance is often not imposed in the way 1984 pictures it.

We live in a surveillance society that we crafted out of our own desire. It’s not a state-imposed system — it’s a system we constructed through voluntary consumer decisions over decades. We consistently gave up bits of privacy in exchange for convenience. Now we’re surrounded by devices constantly listening and watching, sending bits of what we’re saying back to the mothership. So much of our data is given to companies to train their devices, and these companies are happy to share it with governments. We are under a degree of surveillance Orwell would have found astonishing. And the vast majority of us are quite happy about it. We don’t think this is terrible. We’re fine with having our data constantly exposed.

Orwell did not get any of the details right. But the fundamental metaphor of Big Brother is extremely potent as a mythological concept. It has shaped how we think about surveillance, how we talk about it, and how we think about private desires and private thoughts versus being constantly on display.

That’s what sci-fi is actually good at. Sci-fi is not about prediction — science fiction writers have no more authority or knowledge about the future than anybody else. The future is very accidental. Every time science fiction writers speculate about the future, they can’t help but extrapolate from present trends. Science fiction stories are almost always about the present — present trends extrapolated. But the way the future evolves depends on so many unpredictable factors. The future we end up having is almost never the future we thought we would have. You can plan all you want, but the future you get will be nothing like what you planned. A thousand different teams will work on solving the same problem, and the team that ultimately succeeds won’t be the one many of us thought would succeed. The future is unpredictable in a very deep, fundamental sense.

But sci-fi writers do have something interesting and valuable to add in the mythological realm. Artists go into the collective unconscious, dream interesting visions, and bring them back. It’s these mythological visions that ultimately persist.

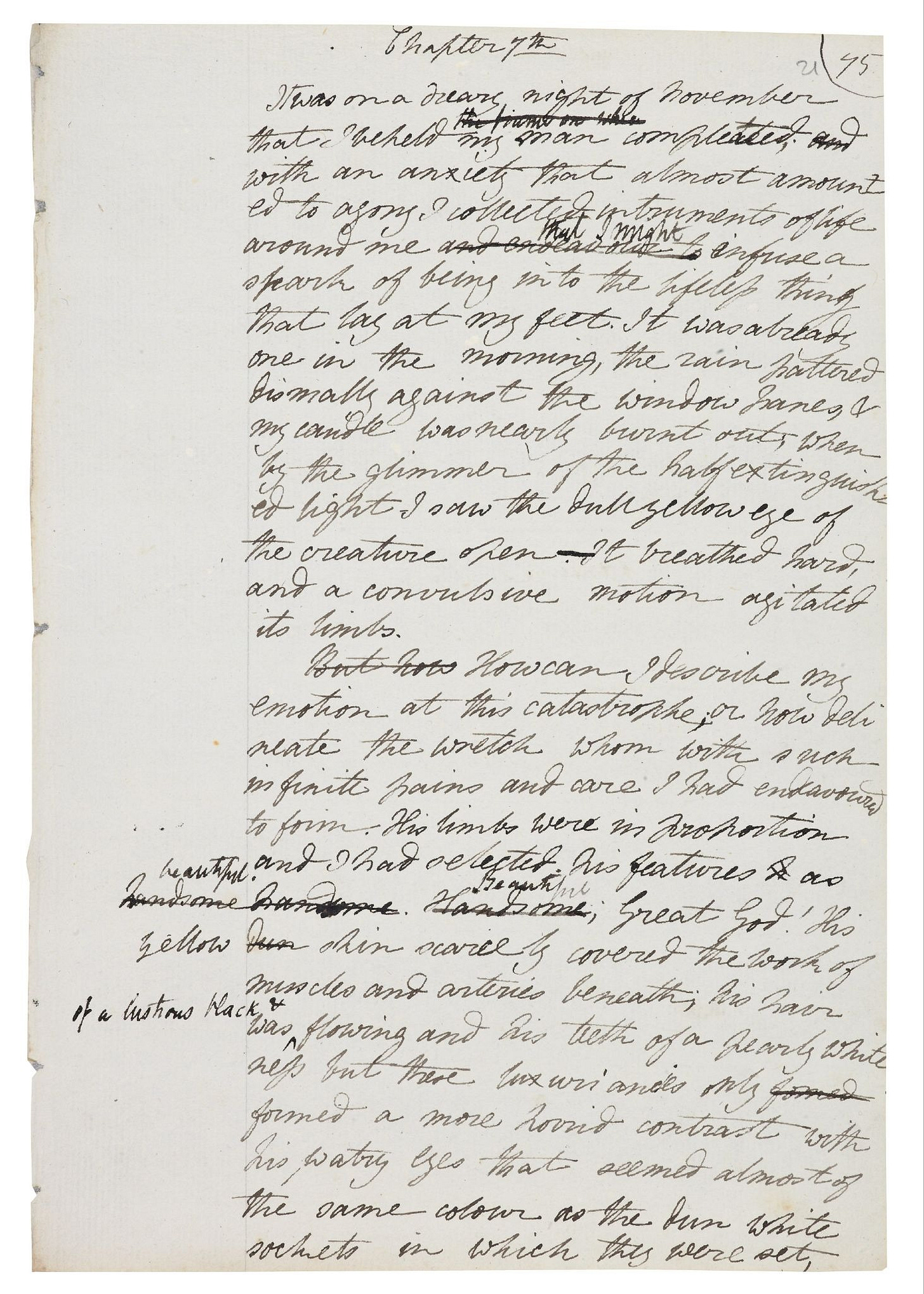

We don’t read Frankenstein anymore for its speculation on how you might create artificial life. We read it because the creature is a very potent metaphor for new technology. We cannot think about new technology without thinking about Frankenstein’s creature.

In fact, the LLM — this technology of the moment — is very much like the creature. If you go back and read Frankenstein, read the part about how the creature learns human language, learns human morality, learns human relationships, learns to desire — it’s eerily like the way LLMs are trained. And the questions being asked of the creature are very much like the questions Anthropic nowadays is asking about alignment — how do we end up with an AI aligned with our own interests? I find that deeply fascinating.

This is why old sci-fi remains relevant. Not because its predictions are particularly valuable, but because the metaphors it brings up and the mythological figures it invokes from the collective unconscious persist and help us dream about the present and the future, and think about how we want to use technology to express who we are.

Irene Zhang: While we’re discussing sci-fi writers as myth-makers, I can’t help but read this in the American context today. Palantir exists, and I’m sure Tolkien, when he wrote The Lord of the Rings, did not imagine his myth-making would become a potent symbol enabling technology as a political class aligned with certain ideologies and bound to the government. How do you think about that evolution in sci-fi’s relationship to politics in America today?

Ken Liu: I don’t think writers should be propagandists either way. The reason Tolkien is potent as a writer is that he tried his best not to be a propagandist. The fact that The Lord of the Rings can be read to support completely different political ideologies is a testament to his skill, not a failure. He might personally disagree with how Palantir is now invoked as a symbol, but that’s not a testament to his failure as a writer. He succeeded in creating very potent mythology. Good mythologies will always be appropriated by people of very different beliefs. Just watch how Christianity or Islam has been appropriated by very different ideologies to say completely opposite things.

I don’t think writers should feel responsible for how their mythology is used. The writer’s only job is to create interesting mythologies — mythologies true to the collective unconscious, to their journey into it, to the dreams they’re trying to bring forth. That is their only job.

They should help us escape in the deepest sense. The real world is filled with bad mythologies, bad allegories, and bad fantasies that are not true to human nature. One of the critiques of fantasy that Tolkien and Ursula K. Le Guin both pushed back against is that fantasy is escapist. This is obviously nonsense. As Le Guin said: “If we live in a prison, then escape is actually our moral duty.”

In the world of ideologies that we live in, ideologies are the bad cousins of mythologies. Ideologies are cheap, bad, hack versions of mythologies. The fact that people can believe in ideologies at all is a sad state of affairs. The idea that money has actual meaning, the idea that the Wall Street Journal has any kind of moral authority: that’s nonsense. If that’s the reality you’re living in, then it is your duty to escape.

That’s what fantasy does. Fantasy enacts our moral duty to escape from the bad hack mythology of ideologies by substituting them with real mythologies — mythologies that actually mean something. The fact that somebody can reduce Palantir to the service of a bad ideological agenda does not make the actual myth in The Lord of the Rings any less valuable. It’s up to the rest of us to recover the multitude of meanings from the mythology and reclaim the truth that fantasy is meant to tell.

Jordan Schneider: Ideology as hack mythology — nationalism, for instance. There are a lot of people all around the world who get into positions of power on the backs of those things.

Ken Liu: I entirely agree. One of the worst things that’s happened to politics, not just democratic politics but politics everywhere, is that real mythologies are being hijacked by ideologues. Real mythology that is life-giving, potent, creative, and inspiring has been hijacked into serving bad, hack versions of itself. Nationalism is often one of them. Real, genuine, powerful collective identities have been hijacked by nationalistic sentiments into something horrific, in the same way that the beautiful vision of Christ has often been hijacked by organized religion into something much worse.

Daoism and Freedom

Jordan Schneider: Let’s take it to our beautiful vision of Laozi, who just kind of gets ignored — not really hijacked. Why did you take this one on?

Ken Liu: Laozi actually does often get hijacked, in ways that are pretty horrible. Daoism is one of those philosophies that often ends up twisted into serving something it’s not. People quote Laozi whenever they’ve been thwarted in their political ambitions, using him for comfort. Or they use Laozi to discourage resistance — to say all resistance is pointless and you should just go with the flow and do whatever the dominant trend is. These are utter misinterpretations, sometimes misunderstandings, sometimes deliberate twistings — in the same way Palantir is a deliberate twist on what Tolkien was trying to do.

Laozi is interesting to me because he casts a particularly strong shadow across East Asian philosophy in a way that’s rarely acknowledged. People often say Western culture is deeply individualistic while Sinitic culture is deeply collectivist. This is utter nonsense if you know anything about anything. Western culture has very strong communitarian and collectivist trends — arguably the entirety of Christianity is deeply oriented towards a collectivist vision of what human beings can do and be. You cannot deny that Christianity is a deep part of Western culture.

Similarly, you cannot deal with East Asian culture without addressing Daoism’s deep influence, especially through Zen Buddhism, which is basically a fusion of native Daoist philosophies with Buddhist ideas. Understanding the deeply individualistic and freedom-oriented nature of Daoism is extremely important to me.

One of the things I care about most in Daoism is its deep commitment to freedom as an ideal, in a way that’s rarely discussed. There is a deep wellspring of freedom — yearning for freedom, love for freedom, mythologizing of freedom — that is important to Daoism. We need to recover, rediscover, and reclaim these ideas. They’re important now, perhaps more than ever.

Jordan Schneider: Care to elaborate?

Ken Liu: One thing about Daoism that often gets ignored is this idea of freedom — freedom in a very deep sense. What does it mean to be one with the Dao, to follow the Dao? It actually means a kind of transcendence, particularly important in the modern age.

A lot of times we feel a lack of freedom not because of external constraints but because we fall into the trap of believing there are certain things we need or should do that are actually not things we need or should do at all.

For those who are a little older, think about how important it was when you were a teenager to dress the right way, listen to the right music, and express the opinions your peers did. Looking back, all of that seems incredibly silly. Yet at the time, it seemed like the most important thing in the world. Those were constraints on your freedom, on your ability to be who you were. It’s only with hindsight and wisdom that you realize that.

The older you are, the less constrained you feel. The less you feel you have to keep toxic people in your life. The less you feel you have to play a role and be nice to people you don’t want to be nice to. The less you feel you’re supposed to do things other people tell you to do.

The older you are, the closer you are to death, the freer you are. That’s paradoxical. We ought to think young people have the most freedom because they have the most choices, and old people the least because they have fewer choices. Yet psychologically, older people feel freer because they have less to give.

That’s one of those paradoxes about Daoism that’s important to think about. The way you are free is the degree to which you are not constrained. The more you feel free to live the way the universe wants us to live, the closer you are to the Dao.

That’s one of the insights I got reading the Dao De Jing in the aftermath of the pandemic. Until I started reading the text in depth and really reflecting on it, I hadn’t realized how much academic discussions of Daoism neglect how radical the philosophy really is. Daoism refuses to be tamed. It’s not one of those philosophies that can be easily reduced to larger frameworks of philosophical traditions. It’s incredibly skeptical, slippery, and self-deconstructing from the start.

But ultimately, Daoism’s highest ideal is freedom. In an age with so many constraints and impediments to freedom, that makes Daoism more relevant than ever.

Irene Zhang: There’s a natural follow-up: how would Daoism feel about surveillance and data collection as a constraint on freedom?

Ken Liu: I cannot imagine Laozi or Zhuangzi or any of the Daoist philosophers looking at the world we live in and viewing it as anything but the worst of the worst. We are literally surrounded by illusions and spend our time chasing after illusions.

Think about what you’re doing on social media. You’re getting your emotions riled up by words generated possibly by a bot or by someone paid to manipulate you. Your very anger, your very rage, is what these companies monetize. At the very moment these companies claim to give you agentic AI, you have actually been turned into an agent of the companies themselves. The only reason agentic AI is being given to you, so that you can hand over your email and calendars and let the AI do things for you, is so you can give them more data they would otherwise never have access to. You are the agent being deployed to explore the world and give these AI companies more and more information.

We live in a world surrounded by illusions, pursuing illusions. We think we have wisdom when we have none. We are so obsessed with chasing illusions that we’ve utterly forgotten what the real pursuits are. I could say endless things about our politics and how we waste energy chasing illusions and fighting over illusions rather than going back to the few things that actually matter.

As Laozi put it, we are obsessed with our eyes and neglect our bellies. It is the belly that is the fundament, the belly that is the truth, the belly that allows us to feel the Dao and be with it. Our eyes are surrounded by illusions. We are constantly pulled away in this age of slop — not just AI-generated slop, but slop ideologies, hacked mythologies that lead us away from where we need to be.

I don’t think there’s a magical solution other than for individuals to go back and make the right choices. This is very difficult. For most of us, the folly of our youth is not realized until decades later. Maybe society as a whole has to go through a few years, hopefully not decades, of this kind of folly before we recover some measure of wisdom and realize how far down we’ve gone. Meanwhile, we can only do the best we can as individuals to make choices that allow us to focus on our bellies and not be deceived by our eyes.

The Inadequacy of Language

Jordan Schneider: Can you talk about how Laozi used language? Rereading it this year, I was struck by how different he feels from what ChatGPT and Claude give you.

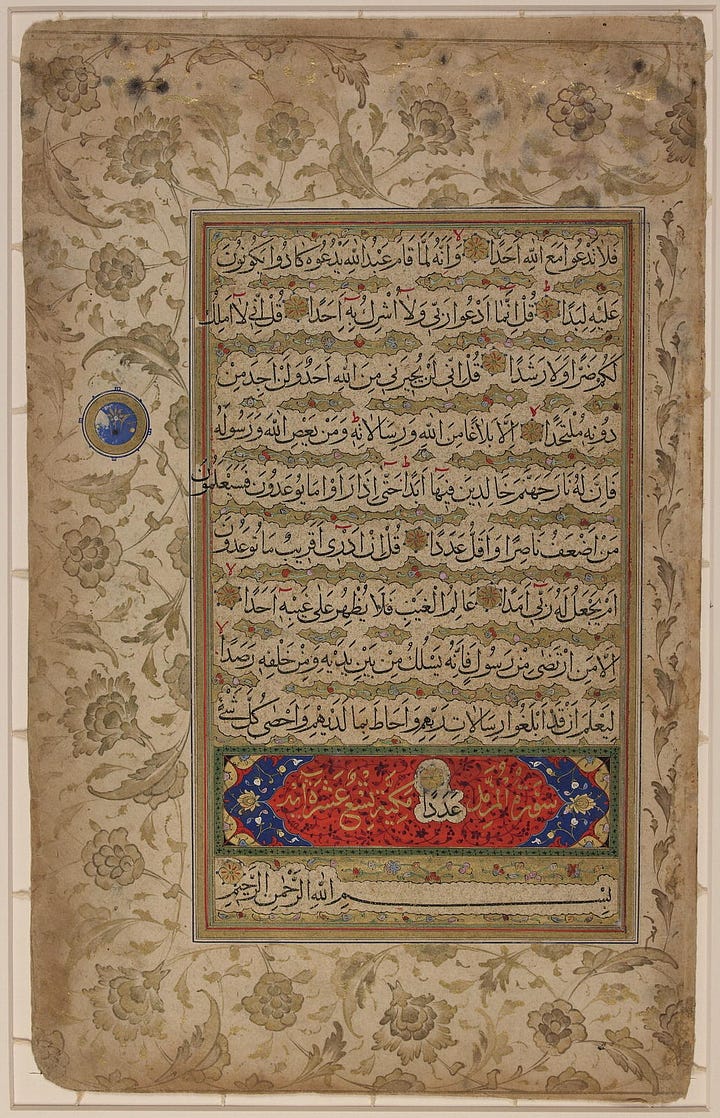

Ken Liu: That’s a great point. As a premise, every single writer worth reading essentially invents his or her own language. I don’t think it makes sense to say Jane Austen wrote in some 18th-19th century English. No — Jane Austen wrote in her own language. She had to invent her own language to tell the story she wanted to tell. Same with Shakespeare. Same with Laozi.

Laozi took classical Chinese — a very interesting language in its grammatical structure and deep commitment to balanced structure in literary creations — and turned it into something unique. As a writer, he persisted in writing in a way that deconstructed binary opposition.

Binary opposition is a deep part of the human cognitive apparatus, a deep part of how we see the world. Something is either this or that, black or white. Laozi leaned into it. If you read him, he constantly writes things in a way that turns every word into its own opposite. He uses the same word to mean its exact opposite.

But the purpose isn’t to say everything’s just a big mush. He’s saying that in every binary opposition, there’s a third possibility — or innumerable third possibilities — that are neglected. Things are not either black or white, but other colors entirely. Things are not empty or filled, but potential, which is not the same as filled and not the same as empty.

Over and over, he makes statements that are “this or that,” “this and that,” “this is that.” He constantly uses the same verbal formulation to force you to see that language itself is inadequate to the expression of actual truth.

The way that can be stated is not the way. The path that can be laid out is not the path. This sounds like paradox or mystical nonsense until you apply it to your own experience.

A concrete example — as a writer, when I started out, I thought there would be some path to success. It took years and years of failing before I realized there is actually no path. There’s only the path left behind you after you’ve done what you’ve done, after you’ve lived. If you ask other people how they succeeded, they’ll tell you what happened to them — but that’s unique to them. You cannot apply it to yourself in any way that matters. You have to find your own path, your own flow through the universe, the path that will lead you to the sense of freedom you crave. Because writers, after all, crave freedom.

The path that can be stated, explained, and reduced to language is not the path that matters. This skepticism toward symbolic language runs deep throughout Daoism — the idea that whatever can be captured in words is not the actual thing itself. If you’re obsessed with words, you’re only obsessed with shadows of real wisdom. Language itself is the thing that’s left behind when real wisdom has moved on.

Zhuangzi has this beautiful parable — if you’re reading the words of sages, you are not truly engaged with the wisdom of sages, because the real wisdom has left. All you’re left with are the footprints of the mystical beast, the echo of the dragon’s sound, the husk of the real grain of wisdom. What you’re left with is the shell that will point you to the real thing. But to find the real thing, you have to look beyond language.

This skepticism of language exists throughout philosophical traditions. But to bring it back to your question, Jordan, this is exactly why large language models do not have wisdom. They may have intelligence, but they don’t have wisdom.

All that large language models can ever do is know the world to the extent they can know it through language. But everything that matters is beyond language. The truth about the universe is not capturable by language. Language is itself not adequate to capture reality. Language is a shadow cast by reality, a manifestation of human mental impressions left by reality. Reasoning from these traces and tracks, you’re always just reconstructing the beast, the dragon that left them behind. You’re not actually seeing the dragon itself.

Laozi urges you over and over again to seek the dragon itself, not merely contemplate its tracks and scales.

Jordan Schneider: So when’s the Zhuangzi translation coming out?

Ken Liu: Not working on one.

Jordan Schneider: Okay, maybe next time.

Irene Zhang: One last controversial question — why be a writer if words are about illusions?

Ken Liu: That’s actually a great question. Le Guin had a good answer — artists are about the truth, not facts. Artists go into the collective unconscious and retrieve the truth and try to present it to the world. But the truth is not something that can be captured by what we have.

Artists are people who try to paint what is essentially not paintable. Writers are artists who try to say with words what cannot be said in words — in the same way that Laozi tries to use words to tell you what the way is, even though he explicitly said the way itself cannot be captured by words. That’s how all of us have to deal with it.

Jordan Schneider: You write that Laozi wrote this way because he wanted to emphasize that language is ultimately a misleading guide: “We think that when something is nameable, it is real. But he writes, ‘The name that can be spoken is not the name that endures.’ Conversely, we think what cannot be spoken about does not exist. But the most important knowledge is never reducible to words.”

So when we’re all living in our AI-generated virtual reality video games (brought to you, hopefully, not by slaves living in the Golden Triangle), we should remember to pick up our Chinese philosophers every once in a while, as well as Ken’s new book, All That We See or Seem. Ken Liu, this was just the biggest treat in the world.
