You give Cursor a real task and watch it work… from memory.

You just spent 20 minutes and 1k tokens watching it iterate on something that already has a perfect answer somewhere online. The frustrating part isn’t that Claude is bad.

The expertise exists. The routing doesn’t.

What I built: upskill (free)

upskill = routing layer for skills

Install it once, add one line to your agent config (for example, CLAUDE.md).

Before every non-trivial task → your agent runs “upskill find <task>”.

Instead of guessing, it pulls a vetted playbook and follows it.

What changes?

Same prompt: “design a landing page”

Same prompt: “add Clerk auth”

Think of it as:

Under the hood

→ Pure vectors miss specifics

Auth-aware ranking (optional)

If env vars exist locally:

Only variable names are used. Values never leave your machine.

Safety

Every skill goes through LLM adversarial review at index time:

Out of 10k+ skills:

A few false positives (being tuned):

Privacy

Default = locked down

Everything toggleable anytime.

Not just for code

Covers workflows like:

If your agent is about to “wing it”…

Try it

It’ll ask a few questions and wire itself into your agent.

Repo: https://github.com/Autoloops/upskill

Upskill: skill registry your agent consults before it starts. 10k+ indexed, free, open source.

Reddit r/LocalLLaMA / 5/3/2026

💬 Opinion · Developer Stack & Infrastructure · Tools & Practical Usage · Models & Research

Key Points

- The article introduces “upskill,” a free, open-source skill registry that an agent consults before starting non-trivial tasks, so it can follow vetted playbooks instead of improvising.

- It claims that with a single installation and a configuration line (via “CLAUDE.md”), the agent runs “upskill find <task>” to retrieve appropriate workflows such as frontend landing-page design and Clerk authentication.

- Under the hood, upskill indexes 10k+ skills from multiple major vendors and communities, using a hybrid search approach combining Postgres full-text search and vector embeddings, then re-ranking results using community signals.

- It includes optional “auth-aware” ranking based on locally present environment variable names (values never leave the machine) to prioritize skills relevant to the user’s configured services.

- The project positions this as an “agent-layer mixture of experts,” aiming to improve reliability by routing tasks to existing expert workflows rather than relying solely on general prompting.
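The hybrid search and auth-aware ranking described in the key points can be illustrated with a small sketch. This is not upskill's actual code: the post does not say how the full-text and vector result lists are merged, so reciprocal rank fusion is used here as a stand-in. The skill ids, the star counts used as a "community signal", the weights, and the `CLERK_SECRET_KEY` mapping are all hypothetical values chosen for illustration.

```python
import math
import os
from typing import Mapping, Optional, Sequence, Set


def rrf_fuse(rankings: Sequence[Sequence[str]], k: int = 60) -> dict:
    """Reciprocal rank fusion: each list adds 1 / (k + rank) per skill id."""
    scores: dict = {}
    for ranking in rankings:
        for rank, skill_id in enumerate(ranking, start=1):
            scores[skill_id] = scores.get(skill_id, 0.0) + 1.0 / (k + rank)
    return scores


def present_env_names(environ: Optional[Mapping[str, str]] = None) -> Set[str]:
    """Collect environment variable names only; values are never read."""
    source = os.environ if environ is None else environ
    return set(source.keys())


def rerank(
    fused: Mapping[str, float],
    stars: Mapping[str, int],
    required_env: Mapping[str, Set[str]],
    env_names: Set[str],
    star_weight: float = 0.001,   # hypothetical weight for the community signal
    auth_boost: float = 0.01,     # hypothetical boost for configured services
) -> list:
    """Order skills by fused score plus community and auth-aware adjustments."""

    def score(skill_id: str) -> float:
        total = fused[skill_id]
        total += star_weight * math.log1p(stars.get(skill_id, 0))
        needed = required_env.get(skill_id, set())
        # Boost only when every variable name the skill needs is present.
        if needed and needed <= env_names:
            total += auth_boost
        return total

    return sorted(fused, key=score, reverse=True)


# Hypothetical result lists from a full-text query and a vector query.
text_hits = ["generic-auth", "clerk-auth", "landing-page"]
vector_hits = ["generic-auth", "oauth-basics", "clerk-auth"]
fused_scores = rrf_fuse([text_hits, vector_hits])

# Hypothetical mapping from skill id to the variable names it relies on.
skill_env = {"clerk-auth": {"CLERK_SECRET_KEY"}}

without_auth = rerank(fused_scores, {}, skill_env, set())
with_auth = rerank(
    fused_scores, {}, skill_env, present_env_names({"CLERK_SECRET_KEY": "unused"})
)
print(without_auth[0], with_auth[0])
```

In this toy data the generic skill ranks first on search score alone, while the Clerk-specific skill moves to the top once the matching variable name is present. Only the keys of the environment mapping are ever inspected, which mirrors the post's statement that values never leave the machine.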

Related Articles

Black Hat USA

AI Business

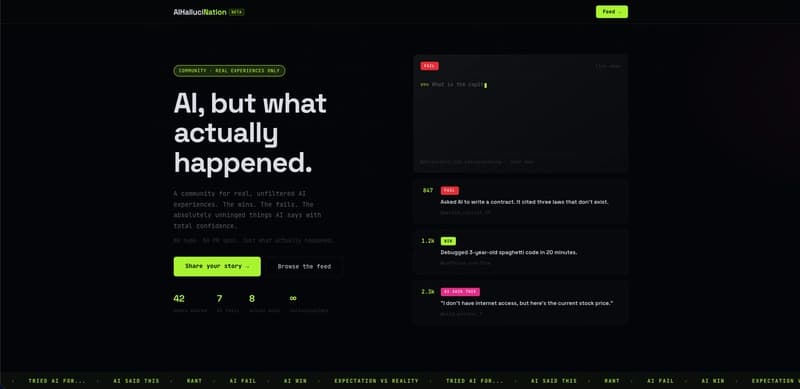

I used AI to moderate AI content — here's what I learned building AIHallucination

Dev.to

Stop Googling Prompts — Here's the Freelancer AI Toolkit That Actually Works

Dev.to

AI Powered Scheduling for Field Operations by Pablo M. Rivera

Dev.to

AI Deleted My Tests and Said 'All Tests Pass' — A Horror Story from Porting 'typia' from TypeScript to Go

Dev.to