Supersized and scaling: China pushes 10,000-card computing clusters in AI race

SCMP Tech / 5/6/2026


Key Points

- China is rapidly expanding large-scale AI computing infrastructure, with cities and major tech firms competing to build 10,000-card GPU/accelerator clusters.

- These supersized clusters connect 10,000 or more AI accelerator chips, which proponents say speeds up iteration and significantly shortens model training cycles.

- The push is driven by expectations that greater compute scale will reduce costs and enable broader, faster adoption of AI capabilities.

- Domestic “champions” such as Huawei and Alibaba are positioned as key players in the infrastructure arms race.

In China, computing facilities have emerged as a new form of infrastructure over the past two years, sparking an arms race among cities and technology companies to build 10,000-card computing clusters.

These clusters – which link 10,000 or more artificial intelligence accelerator chips – enable faster iteration of AI capabilities and significantly reduce model training times.

Domestic champions, from tech giants such as Huawei Technologies and Alibaba Group Holding to graphics processing unit...

Continue reading this article on the original site.

Read original →Related Articles

Black Hat USA

AI Business

Transform Your Blurry Photos into HD Masterpieces, Instantly!

Dev.to

6 New Moats for AI Agent Infrastructure — Trust Score, Deployment, SLA, Identity, Compliance-as-Code

Dev.to

Google Home’s Gemini AI can handle more complicated requests

The Verge

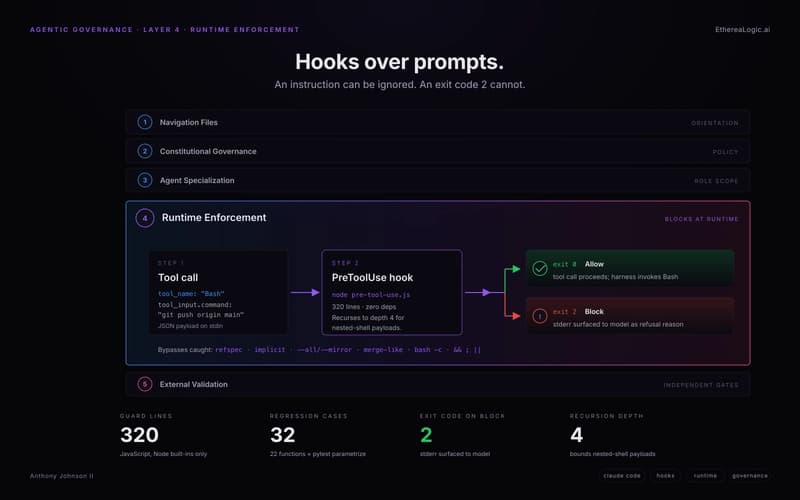

Exit Code 2: How Claude Hooks Turn Agentic Rules Into Runtime Barriers

Dev.to