D-QRELO: Training- and Data-Free Delta Compression for Large Language Models via Quantization and Residual Low-Rank Approximation

arXiv cs.LG / 4/21/2026

Key Points

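- The paper addresses the memory overhead of distributing many supervised fine-tuned (SFT) variants of the same large language model. Its approach is delta compression: the base weights are stored once, and each variant keeps only a compressed copy of its delta weights (the difference between its fine-tuned weights and the base weights).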

- It argues that existing delta compression methods degrade when the SFT data is large-scale. More fine-tuning data enlarges the magnitudes of the delta parameters and related spectral and entropy measures, which in turn increases compression error.

- The authors propose D-QRELO, a training- and data-free method that works in two stages. It first applies coarse one-bit quantization to capture the dominant structure of the delta, then recovers finer details through a compensated residual low-rank approximation.

- Experiments across multiple LLMs, covering both dense and mixture-of-experts (MoE) architectures, and across several domains show that D-QRELO outperforms prior methods in the difficult large-delta setting.

- The study also derives practical design principles. Task difficulty, model architecture, and layer position each produce predictable compression patterns that can inform production deployment strategies.
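The two-stage idea summarized above (a one-bit pass for the dominant structure, then a low-rank pass on what remains) can be sketched roughly as follows. This is a minimal NumPy illustration under stated assumptions, not the authors' implementation. The per-row mean-absolute scale used in the one-bit stage and the plain truncated SVD used on the residual are assumptions. The paper's "compensation" step is not reproduced. All function names (`one_bit_quantize`, `compress_delta`, `stored_bits`, and so on) are hypothetical, and the weight matrices are synthetic.

```python
import numpy as np


def one_bit_quantize(delta):
    """Coarse stage (assumed form): a sign matrix times a per-row scale.

    For a fixed sign pattern, the mean absolute value of a row is the scale
    that minimizes that row's squared reconstruction error.
    """
    signs = np.where(delta >= 0, 1.0, -1.0)
    scale = np.abs(delta).mean(axis=1, keepdims=True)
    return signs, scale


def low_rank(residual, rank):
    """Fine stage (assumed form): a rank-r truncated SVD of the residual."""
    u, s, vt = np.linalg.svd(residual, full_matrices=False)
    return u[:, :rank] * s[:rank], vt[:rank, :]


def compress_delta(w_base, w_ft, rank):
    """Hypothetical pipeline: one-bit coarse delta, then low-rank residual."""
    delta = w_ft - w_base
    signs, scale = one_bit_quantize(delta)
    residual = delta - signs * scale  # the detail the one-bit stage missed
    a, b = low_rank(residual, rank)
    return signs, scale, a, b


def decompress(w_base, signs, scale, a, b):
    """Rebuild the fine-tuned weights from the base plus compressed delta."""
    return w_base + signs * scale + a @ b


def stored_bits(shape, rank, scale_bits=16, factor_bits=16):
    """Storage for one compressed delta: 1-bit signs, row scales, factors."""
    m, n = shape
    return m * n + m * scale_bits + rank * (m + n) * factor_bits


rng = np.random.default_rng(0)
m, n = 256, 256
w_base = rng.standard_normal((m, n))
# Synthetic delta: a low-rank component plus small dense noise.
delta_true = 0.05 * (rng.standard_normal((m, 8)) @ rng.standard_normal((8, n)))
delta_true += 0.01 * rng.standard_normal((m, n))
w_ft = w_base + delta_true

errors = {}
for rank in (0, 8, 32):
    signs, scale, a, b = compress_delta(w_base, w_ft, rank)
    w_hat = decompress(w_base, signs, scale, a, b)
    # Error relative to the size of the true delta.
    errors[rank] = np.linalg.norm(w_hat - w_ft) / np.linalg.norm(delta_true)
    print(f"rank={rank:2d}  relative delta error={errors[rank]:.4f}")

dense_fp16_bits = m * n * 16
compressed_bits = stored_bits((m, n), rank=8)
print(f"fp16 delta: {dense_fp16_bits} bits, compressed (rank 8): {compressed_bits} bits")
```

Rank 0 corresponds to one-bit quantization alone. By the Eckart–Young theorem, increasing the residual rank can only lower the reconstruction error, and each additional rank costs `rank * (m + n)` extra factor entries. That is the size-versus-accuracy trade-off a method of this kind has to balance.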

Related Articles

Agent Package Manager (APM): A DevOps Guide to Reproducible AI Agents

Dev.to

3 Things I Learned Benchmarking Claude, GPT-4o, and Gemini on Real Dev Work

Dev.to

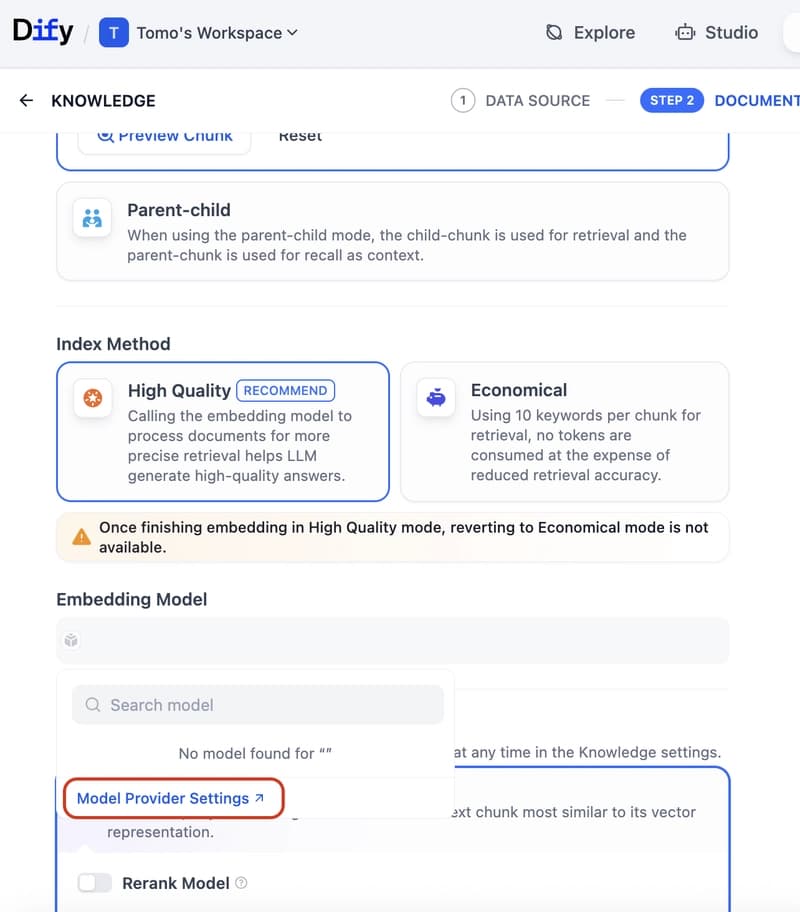

Dify Now Supports IRIS as a Vector Store — Setup Guide

Dev.to

How to build a Claude chatbot with streaming responses in under 50 lines of Node.js

Dev.to

Open Source Contributors Needed for Skillware & Rooms (AI/ML/Python)

Dev.to