This is a follow-up to an earlier post. Check the README for setup instructions: https://github.com/BigStationW/Local-MCP-server/blob/main/docs/local_gutenberg_books.md

Let your LLM browse books locally so that it can write better stories.

Reddit r/LocalLLaMA / 4/21/2026

💬 Opinion · Developer Stack & Infrastructure · Tools & Practical Usage

Key Points

- A Reddit post argues that letting an LLM browse books stored locally can improve how it writes stories.

- The post points readers to setup documentation for a “Local-MCP-server” approach, specifically for browsing local Gutenberg books.

- It references a README with step-by-step instructions, indicating a practical implementation path rather than a purely theoretical discussion.

- The update is framed as a follow-up to an earlier discussion in the same community focused on local LLM experiences.
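To make the idea of "browsing books locally" concrete, here is a minimal sketch of the kind of lookup a local tool could expose to an LLM. It is not taken from the Local-MCP-server repository: the function name `search_books`, its parameters, and the assumption that the books are plain-text `.txt` files in a single folder are all hypothetical, chosen only for illustration. The linked README remains the authority on how the actual setup works.

```python
import re
from pathlib import Path


def search_books(library_dir, query, context_chars=200, max_hits=5):
    """Return up to max_hits excerpts that contain query (case-insensitive).

    Hypothetical helper: assumes library_dir holds plain-text .txt books.
    """
    if not query:
        return []
    pattern = re.compile(re.escape(query), re.IGNORECASE)
    hits = []
    for path in sorted(Path(library_dir).glob("*.txt")):
        # Replace undecodable bytes instead of failing on odd encodings.
        text = path.read_text(encoding="utf-8", errors="replace")
        for match in pattern.finditer(text):
            # Keep some surrounding text so the model sees the passage in context.
            start = max(0, match.start() - context_chars)
            end = min(len(text), match.end() + context_chars)
            hits.append({
                "book": path.name,
                "offset": match.start(),
                "excerpt": text[start:end],
            })
            if len(hits) >= max_hits:
                return hits
    return hits
```

A call such as `search_books("books", "lighthouse")` (with `books` being an assumed folder name) would return a short list of excerpts that a model could read before drafting a scene. Capping both the excerpt length and the number of hits keeps the result small enough to fit in a prompt.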

Related Articles

Black Hat USA

AI Business

Capsule Security Emerges From Stealth With $7 Million in Funding

Dev.to

Agent Package Manager (APM): A DevOps Guide to Reproducible AI Agents

Dev.to

3 Things I Learned Benchmarking Claude, GPT-4o, and Gemini on Real Dev Work

Dev.to

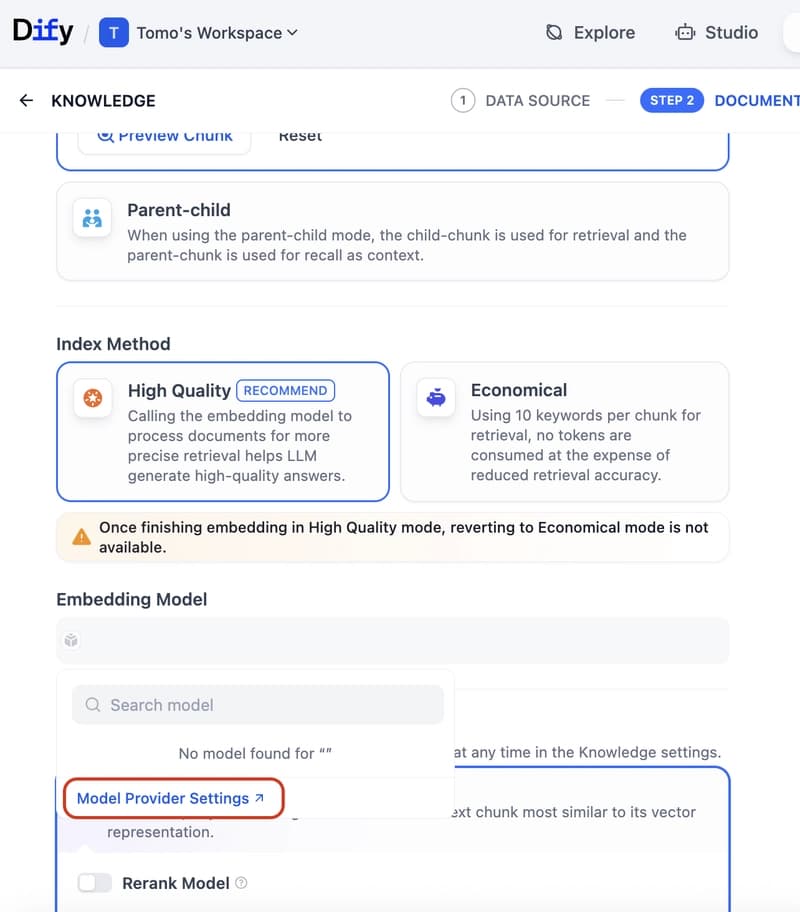

Dify Now Supports IRIS as a Vector Store — Setup Guide

Dev.to