Enhanced Privacy and Communication Efficiency in Non-IID Federated Learning with Adaptive Quantization and Differential Privacy

arXiv cs.CV / 4/28/2026


Key Points

- The paper tackles two core problems in non-IID federated learning: communication overhead from heterogeneous device connectivity and privacy leakage risks from model/gradient analysis.

- It combines differential privacy (DP) with adaptive quantization, relying on Laplacian-based DP for its privacy guarantees, a DP variant that remains comparatively underexplored in FL.

- The authors introduce two bit-length schedulers: a global one that follows round-based cosine annealing, and a client-based one that adapts each client’s bit-length to its estimated contribution, measured via dataset entropy.

- Experiments on CIFAR-10, MNIST, and medical imaging datasets under non-IID settings show substantial reductions in total communicated data (up to 52.64% on MNIST and 45.06% on CIFAR-10) while keeping accuracy competitive and maintaining differential-privacy protections.
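
To make the ingredients in the key points concrete, here is a minimal Python sketch of a cosine-annealed global bit-length schedule, an entropy-driven per-client bit-length, Laplace noise, and uniform quantization. It is not the authors' implementation. Every function name, the 4 to 16 bit range, the high-to-low annealing direction, the use of label entropy as the "dataset entropy", the linear entropy-to-bits mapping, and the noise scale `sensitivity / epsilon` (the textbook Laplace mechanism) are assumptions made for illustration; the summary does not specify any of them.

```python
import math
import random
from collections import Counter

def cosine_bits(round_idx, total_rounds, min_bits=4, max_bits=16):
    """Global schedule (assumed form): cosine-anneal from max_bits down to min_bits."""
    if total_rounds <= 1:
        return max_bits
    t = round_idx / (total_rounds - 1)  # training progress in [0, 1]
    bits = min_bits + 0.5 * (max_bits - min_bits) * (1 + math.cos(math.pi * t))
    return int(round(bits))

def label_entropy(labels):
    """Shannon entropy (in bits) of a client's label distribution."""
    counts = Counter(labels)
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())

def client_bits(labels, num_classes, min_bits=4, max_bits=16):
    """Client schedule (assumed form): more label entropy earns more bits.

    Requires num_classes >= 2 so the normalizer log2(num_classes) is nonzero.
    """
    frac = label_entropy(labels) / math.log2(num_classes)  # normalized to [0, 1]
    return int(round(min_bits + frac * (max_bits - min_bits)))

def laplace_noise(scale):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return random.expovariate(1 / scale) - random.expovariate(1 / scale)

def privatize(update, sensitivity, epsilon):
    """Textbook Laplace mechanism: add Laplace(sensitivity / epsilon) per coordinate.

    `sensitivity` is assumed to be a known L1 bound, e.g. enforced by clipping.
    """
    scale = sensitivity / epsilon
    return [v + laplace_noise(scale) for v in update]

def quantize(values, bits):
    """Uniform quantization to 2**bits levels over [min, max]; returns dequantized values."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return list(values)
    step = (hi - lo) / ((1 << bits) - 1)
    return [lo + round((v - lo) / step) * step for v in values]

if __name__ == "__main__":
    random.seed(0)
    update = [random.uniform(-1, 1) for _ in range(8)]  # stand-in for a model update
    skewed_labels = [0] * 90 + [1] * 10                 # a strongly non-IID client
    bits = min(cosine_bits(3, 20), client_bits(skewed_labels, num_classes=10))
    noisy = privatize(update, sensitivity=1.0, epsilon=2.0)
    print(bits, quantize(noisy, bits))
```

Taking the minimum of the two schedules and quantizing after adding noise are both design choices of this sketch rather than something the summary states. Quantizing the already-noised update is post-processing, so it does not weaken the DP guarantee under that ordering.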

Related Articles

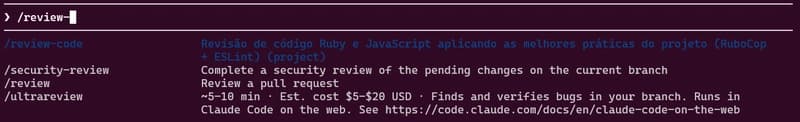

how to use skills from Claude Code A.K.A Claudinho.

Dev.to

Behind the Scenes of a Self-Evolving AI: The Architecture of Tian AI

Dev.to

Meet Tian AI: Your Completely Offline AI Assistant for Android

Dev.to

UK to develop AI hardware plan

Tech.eu

Copilot Cowork | The Control Plane for Long-Running AI Work | A Rahsi Framework™

Dev.to