Learnable Instance Attention Filtering for Adaptive Detector Distillation

arXiv cs.CV / 3/30/2026

Key Points

- The paper proposes LIAF-KD, a framework for adaptive detector knowledge distillation that accounts for instance-level variability rather than treating all object instances uniformly during spatial/feature filtering.

- Instead of using heuristic or teacher-driven attention filters, LIAF-KD introduces learnable instance selectors that dynamically reweight instance importance, with the student actively contributing based on its evolving learning state.

- Experiments on KITTI and COCO show consistent gains, including a reported improvement of about 2% for a GFL ResNet-50 student without adding model complexity.

- The results suggest the approach outperforms existing state-of-the-art detector distillation methods, giving a better tradeoff between accuracy and efficiency.
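To make the contrast between uniform and instance-adaptive filtering concrete, here is a minimal sketch of an instance-weighted distillation loss. This is not the LIAF-KD formulation. It is an illustration under stated assumptions. Every name (`instance_weights`, `weighted_distill_loss`, `selector_logits`, `alpha`) is hypothetical. Each instance's weight comes from a softmax over two terms: a learnable selector logit, and the student's current per-instance feature error, which stands in for its "learning state." Plain per-instance MSE is the assumed distillation signal.

```python
import math
from typing import List, Sequence, Tuple


def softmax(xs: Sequence[float]) -> List[float]:
    # Numerically stable softmax: subtract the max before exponentiating.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def mse(a: Sequence[float], b: Sequence[float]) -> float:
    # Mean squared error between one teacher and one student feature vector.
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def instance_weights(selector_logits: Sequence[float],
                     student_errors: Sequence[float],
                     alpha: float = 1.0) -> List[float]:
    # Hypothetical selector: learnable per-instance logits plus a
    # student-state term (alpha * current error). Harder instances get more weight.
    return softmax([s + alpha * e for s, e in zip(selector_logits, student_errors)])


def weighted_distill_loss(teacher_feats: Sequence[Sequence[float]],
                          student_feats: Sequence[Sequence[float]],
                          selector_logits: Sequence[float],
                          alpha: float = 1.0) -> Tuple[float, List[float]]:
    # Per-instance imitation errors, then a weighted sum over instances.
    errors = [mse(t, s) for t, s in zip(teacher_feats, student_feats)]
    weights = instance_weights(selector_logits, errors, alpha)
    loss = sum(w * e for w, e in zip(weights, errors))
    return loss, weights


if __name__ == "__main__":
    teacher = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    student = [[1.0, 0.0], [0.0, 0.0], [0.5, 0.5]]
    logits = [0.0, 0.0, 0.0]
    # alpha=0 with zero logits reduces to uniform weighting (plain mean error).
    uniform_loss, _ = weighted_distill_loss(teacher, student, logits, alpha=0.0)
    adaptive_loss, w = weighted_distill_loss(teacher, student, logits, alpha=1.0)
    print(uniform_loss, adaptive_loss, w)
```

With `alpha=0` and zero logits, every instance gets weight 1/3, which mimics a uniform filter. With `alpha=1`, the instance the student imitates worst gets the largest weight. One design caveat: if the selector logits were trained only to minimize this same loss, the softmax would collapse onto the easiest instance. A real method therefore needs an extra constraint or objective. The sketch shows only the forward pass.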

Related Articles

Black Hat Asia

AI Business

Freedom and Constraints of Autonomous Agents — Self-Modification, Trust Boundaries, and Emergent Gameplay

Dev.to

The Prompt Tax: Why Every AI Feature Costs More Than You Think

Dev.to

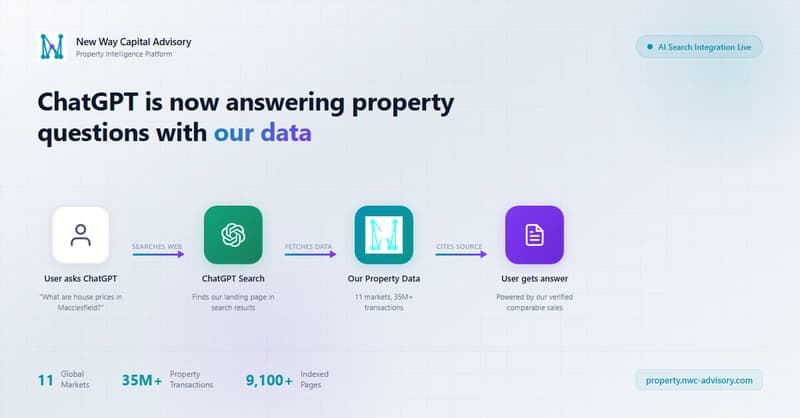

We caught ChatGPT answering property questions with our data -- here's the nginx log proof

Dev.to

[D] Joined UdeM MSCS without MILA affiliation - anyone successfully found a core MILA supervisor in their first semester?

Reddit r/MachineLearning