CLAD: Efficient Log Anomaly Detection Directly on Compressed Representations

arXiv cs.LG / 4/15/2026

Key Points

- The paper introduces CLAD, a deep learning framework for log anomaly detection that operates directly on compressed log byte streams instead of requiring full decompression and parsing.

- It leverages the observation that normal logs produce regular byte patterns under compression, while anomalies introduce systematic multi-scale deviations.

- CLAD uses a purpose-built architecture combining a dilated convolutional byte encoder, a hybrid Transformer–mLSTM module, and four-way aggregation pooling to model these deviations from “opaque” compressed bytes.

- It employs a two-stage training approach (masked pre-training followed by focal-contrastive fine-tuning) to address the severe class imbalance typical of anomaly detection.

- Across five datasets, CLAD achieves a state-of-the-art average F1-score of 0.9909, improving on the best baseline by 2.72 percentage points while eliminating the decompression and parsing overhead for streaming logs.
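
To make two of the ideas above concrete, here is a minimal sketch, not the paper's implementation. It assumes zlib as the compressor and uses the standard binary focal loss as a stand-in for the focal part of the fine-tuning objective; the paper's exact compressor, window size, and focal-contrastive loss are not given in this summary. The function names `compressed_byte_windows` and `binary_focal_loss` are hypothetical.

```python
import math
import zlib


def compressed_byte_windows(log_lines, window=64):
    """Compress log lines and split the compressed stream into fixed-size
    windows of byte values (0-255), without ever parsing the logs.

    Assumption: zlib stands in for whatever compressor the pipeline uses.
    The last window is zero-padded; a real model would likely need a
    dedicated padding token, since 0 is also a valid byte value.
    """
    stream = zlib.compress("\n".join(log_lines).encode("utf-8"))
    values = list(stream)
    windows = []
    for start in range(0, len(values), window):
        chunk = values[start:start + window]
        chunk += [0] * (window - len(chunk))
        windows.append(chunk)
    return windows


def binary_focal_loss(p, y, gamma=2.0, alpha=0.25):
    """Standard binary focal loss for a single prediction.

    p is the predicted probability of the anomaly class, y is 1 (anomaly)
    or 0 (normal). The (1 - p_t) ** gamma factor down-weights examples the
    model already classifies well, which helps when normal logs dominate.
    """
    eps = 1e-12
    p_t = p if y == 1 else 1.0 - p
    alpha_t = alpha if y == 1 else 1.0 - alpha
    p_t = min(max(p_t, eps), 1.0)  # guard against log(0)
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


# Illustrative, made-up log lines: many normal entries and one anomaly.
logs = ["INFO block received from node 7"] * 50 + ["ERROR checksum mismatch"]
windows = compressed_byte_windows(logs, window=32)

# Same prediction (p = 0.05), different true labels.
easy = binary_focal_loss(0.05, 0)  # normal sample, confidently correct
hard = binary_focal_loss(0.05, 1)  # anomaly the model missed
```

With these settings, the missed anomaly (`hard`) contributes a far larger loss than the confidently classified normal sample (`easy`), which is the behavior that makes a focal term attractive under heavy class imbalance.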

Related Articles

RAG in Practice — Part 4: Chunking, Retrieval, and the Decisions That Break RAG

Dev.to

Why dynamically routing multi-timescale advantages in PPO causes policy collapse (and a simple decoupled fix) [R]

Reddit r/MachineLearning

How AI Interview Assistants Are Changing Job Preparation in 2026

Dev.to

Claude Code's New Terminal Chat: Connect with Other Devs via P2P

Dev.to

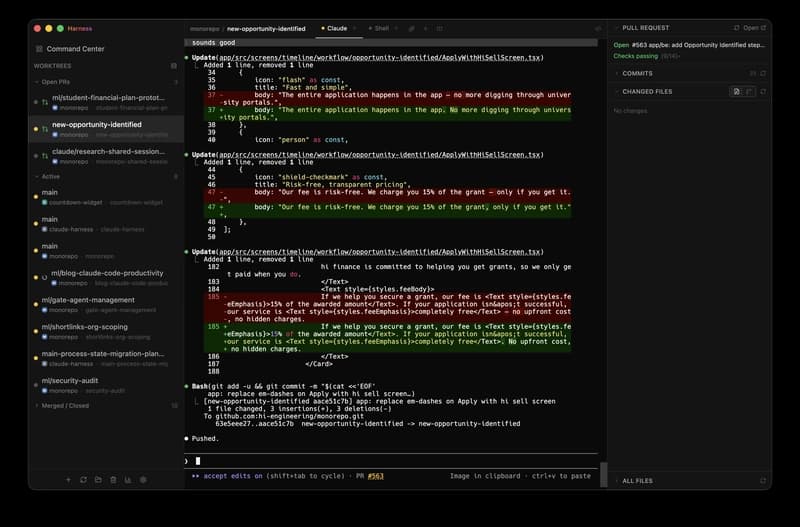

How to Manage Multiple Claude Code Sessions with Harness and Preview

Dev.to