Nonlinear Assimilation via Score-based Sequential Langevin Sampling

arXiv stat.ML / 4/7/2026

Key Points

- The paper proposes score-based sequential Langevin sampling (SSLS) as a new method for nonlinear data assimilation within a recursive Bayesian filtering setup.

- SSLS alternates prediction and update steps, using dynamic models for state prediction and score-based Langevin Monte Carlo to incorporate observations during updates.

- To handle sampling from highly non-log-concave posteriors, the authors add an annealing strategy to the update mechanism.

- They provide theoretical convergence guarantees in total variation distance and derive error bounds that analyze how performance depends on key hyperparameters.

- Numerical experiments in high-dimensional, strongly nonlinear, and sparse-observation settings show robust performance, and the method's uncertainty quantification supports reliable error calibration.
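
The predict/update alternation described above can be sketched on a toy problem. This is only an illustrative sketch, not the authors' implementation: it assumes a hypothetical double-well dynamic model, an identity observation operator with Gaussian noise, and it replaces the learned score of the predictive distribution with the score of a Gaussian kernel density estimate over the ensemble. The annealing here simply shrinks the kernel bandwidth across stages. All function names and parameter values (`predict`, `update`, `noise_levels`, `step`, and so on) are made up for the example.

```python
import numpy as np


def kde_score(x, particles, bandwidth):
    """Score (gradient of log density) of a Gaussian KDE built on `particles`.

    Assumption: a KDE stands in for a learned score model of the prior.
    """
    diff = particles[None, :, :] - x[:, None, :]             # (n, m, d)
    logw = -0.5 * np.sum(diff**2, axis=-1) / bandwidth**2    # (n, m)
    logw -= logw.max(axis=1, keepdims=True)                  # numerical stability
    w = np.exp(logw)
    w /= w.sum(axis=1, keepdims=True)
    return np.einsum("nm,nmd->nd", w, diff) / bandwidth**2


def likelihood_score(x, y, obs_std):
    """Score of the Gaussian likelihood y ~ N(x, obs_std^2 I) with respect to x.

    Assumption: the observation operator is the identity.
    """
    return (y - x) / obs_std**2


def predict(particles, rng, dt=0.05, proc_std=0.1):
    """Prediction step: push the ensemble through a (hypothetical) double-well model."""
    drift = particles - particles**3
    noise = proc_std * np.sqrt(dt) * rng.standard_normal(particles.shape)
    return particles + dt * drift + noise


def update(particles, y, rng, obs_std=0.5,
           noise_levels=(1.0, 0.5, 0.25), n_steps=50, step=1e-2):
    """Update step: annealed Langevin sampling of prior score + likelihood score."""
    x = particles.copy()
    for sigma in noise_levels:            # coarse-to-fine smoothing of the prior
        for _ in range(n_steps):
            score = kde_score(x, particles, sigma) + likelihood_score(x, y, obs_std)
            x = x + step * score + np.sqrt(2.0 * step) * rng.standard_normal(x.shape)
    return x


def run_filter(particles, observations, rng):
    """Alternate prediction and update over a sequence of observations."""
    for y in observations:
        particles = predict(particles, rng)
        particles = update(particles, y, rng)
    return particles
```

For example, `run_filter(rng.standard_normal((200, 2)), [np.array([1.0, 1.0])] * 3, np.random.default_rng(0))` returns an updated ensemble of the same shape. The wide early bandwidths let particles cross between modes before the final, sharper stage refines them, which is the intuition behind annealing for non-log-concave posteriors.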

Related Articles

Research with ChatGPT

Dev.to

Silicon Valley is quietly running on Chinese open source models and almost nobody is talking about it

Reddit r/LocalLLaMA

Why AI Product Quality Is Now an Evaluation Pipeline Problem, Not a Model Problem

Dev.to

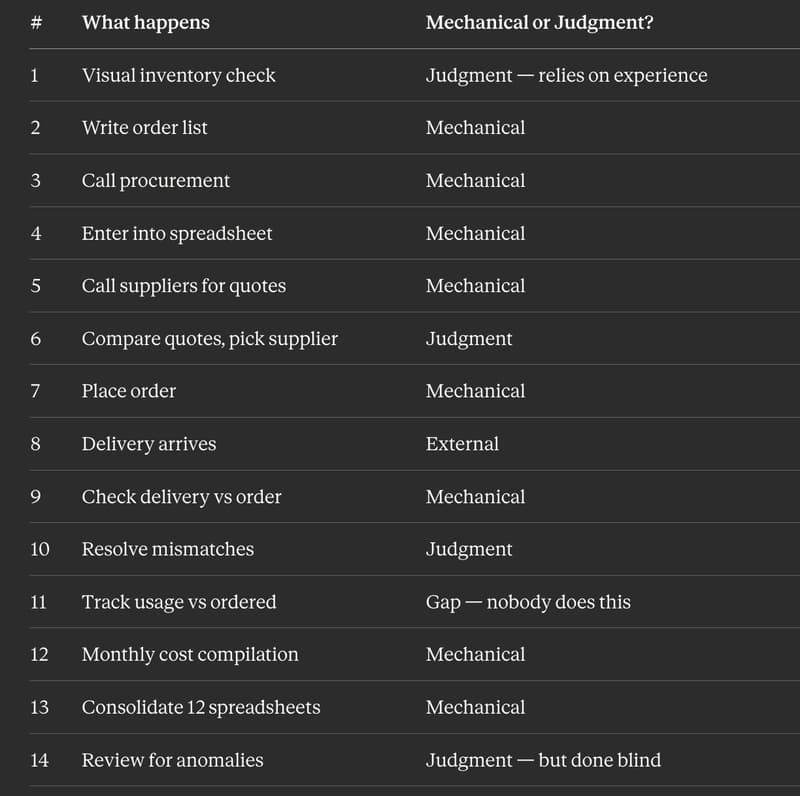

I Replaced 12 Kitchen Managers Guessing "How Much Chicken Do We Need" With 3 ML Models. Here's the Entire Architecture.

Dev.to

AI Model Router API - REST + MCP, Free Tier

Dev.to