[Bugfix] Zero-init MLA attention output buffers to prevent NaN from C…

v0.18.0rc2

vLLM Releases / 3/19/2026


Key Points

- The article announces release candidate v0.18.0rc2 of vLLM, signaling ongoing development ahead of a stable release.

- The release page is hosted on GitHub under the vllm-project organization, where vLLM is developed as an open-source project.

- A sponsor widget on the page failed to load and showed the message "Uh oh! There was an error while loading". This is a display glitch on the page, not a change in the release.

- As a release candidate, it presumably includes new features, bug fixes, and performance improvements. However, the excerpt's only specific item is the bugfix named in the title: zero-initializing MLA attention output buffers to prevent NaN values.
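The bugfix in the title zero-initializes MLA (multi-head latent attention) output buffers to prevent NaN values. The sketch below is a generic illustration, not vLLM's code. It assumes a hypothetical kernel (`run_kernel`) that writes only the rows for real tokens and leaves padded rows untouched, and it uses NaN as a deterministic stand-in for leftover uninitialized memory. The hypothetical downstream step (`masked_sum`) masks padded rows by multiplying them by zero. Under IEEE 754 arithmetic, `0 * NaN` is still `NaN`, so stale values leak through the mask. A zero-initialized buffer avoids this.

```python
import math


def run_kernel(num_rows, num_valid, head_dim, zero_init):
    """Hypothetical kernel that fills only the first num_valid rows of its output buffer."""
    # Without zero-init, unwritten rows keep whatever was in memory; NaN models that garbage here.
    fill = 0.0 if zero_init else math.nan
    out = [[fill] * head_dim for _ in range(num_rows)]
    for r in range(num_valid):
        out[r] = [1.0] * head_dim  # placeholder "attention result" for real tokens
    return out


def masked_sum(out, num_valid):
    """Hypothetical downstream op that masks padded rows by multiplying them by 0."""
    total = 0.0
    for r, row in enumerate(out):
        mask = 1.0 if r < num_valid else 0.0
        total += sum(mask * x for x in row)  # 0.0 * nan is still nan
    return total


print(masked_sum(run_kernel(4, 2, 3, zero_init=False), 2))  # nan
print(masked_sum(run_kernel(4, 2, 3, zero_init=True), 2))   # 6.0
```

Zeroing the buffer costs one extra memory write. In exchange, rows a kernel never touches hold a value that masking can actually neutralize.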

Continue reading this article on the original site.

Read original →Related Articles

We asked 200 ChatGPT users their biggest frustration. All top 5 answers are problems ChatGPT Toolbox solves.

Reddit r/artificial

I Built an AI That Reviews Every PR for Security Bugs — Here's How (2026)

Dev.to

How I Built an AI SDR Agent That Finds Leads and Writes Personalized Cold Emails

Dev.to

Complete Guide: How To Make Money With Ai

Dev.to

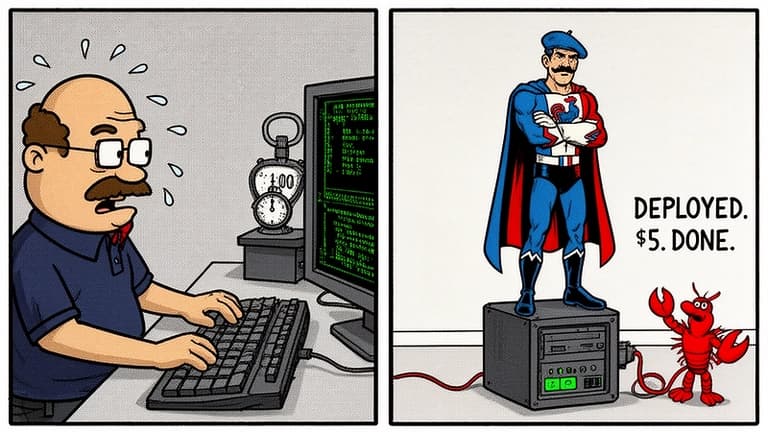

I Deployed My Own OpenClaw AI Agent in 4 Minutes — It Now Runs My Life From a $5 Server

Dev.to