One Supervisor, Many Modalities: Adaptive Tool Orchestration for Autonomous Queries

arXiv cs.CL / 3/13/2026


Key Points

- The paper proposes a central Supervisor architecture for autonomous multimodal query processing that coordinates specialized tools across text, image, audio, video, and document modalities.

- For text-only queries it uses RouteLLM for learned routing; for non-text paths it uses SLM-assisted modality decomposition, so subtasks are dynamically assigned to the appropriate tools.

- Evaluation on 2,847 queries across 15 task categories shows a 72% reduction in time-to-accurate-answer, an 85% reduction in conversational rework, and a 67% reduction in cost compared with a matched hierarchical baseline.

- The results suggest that centralized orchestration can substantially improve the economics of multimodal AI deployment while keeping accuracy on par with the baseline.
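To make the two routing paths concrete, here is a minimal Python sketch of a supervisor. It is not the paper's implementation. The `Query` and `Supervisor` classes, the tool names, the length-based `text_router` (a toy stand-in for a learned router such as RouteLLM), the 0.5 threshold, and the one-subtask-per-attachment `decompose` placeholder (standing in for SLM-assisted decomposition) are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Query:
    text: str
    # Non-text inputs as (modality, reference) pairs, e.g. ("image", "receipt.png").
    attachments: list[tuple[str, str]] = field(default_factory=list)


@dataclass
class Supervisor:
    # Hypothetical tool registry keyed by modality or model tier.
    tools: dict[str, Callable[[str], str]]
    # Hypothetical stand-in for a learned text router: returns a score in [0, 1]
    # estimating how much the query needs the stronger (more expensive) model.
    text_router: Callable[[str], float]
    threshold: float = 0.5
    log: list[str] = field(default_factory=list)

    def decompose(self, query: Query) -> list[tuple[str, str]]:
        # Placeholder for SLM-assisted decomposition: one subtask per attachment,
        # tagged with its declared modality.
        return list(query.attachments)

    def answer(self, query: Query) -> str:
        if not query.attachments:
            # Text-only path: route to a cheap or a strong model by router score.
            tier = "strong" if self.text_router(query.text) >= self.threshold else "weak"
            self.log.append(f"text->{tier}")
            return self.tools[f"llm_{tier}"](query.text)
        # Non-text path: decompose, dispatch each subtask to its modality tool,
        # then synthesize the partial results with the strong model (an assumption).
        partials = []
        for modality, ref in self.decompose(query):
            if modality not in self.tools:
                raise KeyError(f"no tool registered for modality {modality!r}")
            self.log.append(f"{modality}->{ref}")
            partials.append(self.tools[modality](ref))
        return self.tools["llm_strong"](query.text + " | " + "; ".join(partials))


# Toy tools that just echo what they were called with.
tools = {
    "llm_weak": lambda p: f"weak({p})",
    "llm_strong": lambda p: f"strong({p})",
    "image": lambda ref: f"ocr:{ref}",
    "audio": lambda ref: f"asr:{ref}",
}
# Toy router: longer prompts are treated as harder.
sup = Supervisor(tools=tools, text_router=lambda t: min(len(t) / 100, 1.0))
print(sup.answer(Query("What is 2+2?")))  # weak(What is 2+2?)
print(sup.answer(Query("Total on this receipt?", [("image", "receipt.png")])))
# strong(Total on this receipt? | ocr:receipt.png)
```

Keeping the registry as a plain dictionary keyed by modality lets the supervisor add or swap tools without touching the routing logic; a real system would replace the toy router and decomposer with trained models.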

Related Articles

I Built an AI That Reviews Every PR for Security Bugs — Here's How (2026)

Dev.to

[R] Combining Identity Anchors + Permission Hierarchies achieves 100% refusal in abliterated LLMs — system prompt only, no fine-tuning

Reddit r/MachineLearning

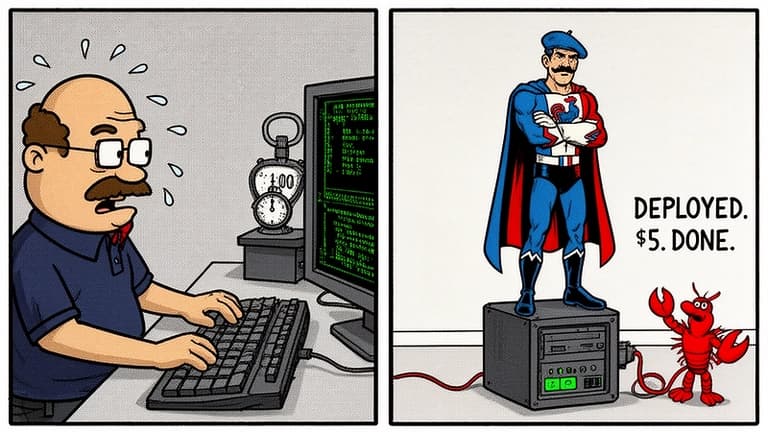

I Deployed My Own OpenClaw AI Agent in 4 Minutes — It Now Runs My Life From a $5 Server

Dev.to

I Analyzed My Portfolio with AI and Scored 53/100 — Here's How I Fixed It to 85+

Dev.to

How BAML Brings Engineering Discipline to LLM-Powered Systems

Dev.to