Stability and Generalization for Decentralized Markov SGD

arXiv cs.LG / 5/5/2026

Key Points

- The paper studies the stability and generalization of decentralized stochastic gradient descent (SGD) and stochastic gradient descent ascent (SGDA) when training data are drawn from a Markov chain rather than sampled independently.

- It uses a stability-based analytical framework to explain how Markovian dependence and decentralized communication jointly affect generalization.

- The authors derive non-asymptotic generalization bounds that incorporate network topology, Markov chain mixing properties, and the primal-dual dynamics in the optimization process.

- Results extend existing theory for Markov stochastic gradient methods to both decentralized learning and minimax (saddle-point) settings.

- The analysis targets the difficulties created by correlated data streams and decentralized optimization, and provides tools for predicting generalization behavior in such systems.
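
To make the setting in the points above concrete, here is a minimal sketch of decentralized SGD with Markov-chain sampling. It is not the paper's algorithm or experimental setup: the ring topology, the uniform 1/3 gossip weights, the lazy random walk over local sample indices, the squared loss, and all function names (`ring_mixing_matrix`, `make_linear_data`, `decentralized_markov_sgd`) are assumptions chosen for illustration.

```python
import numpy as np


def ring_mixing_matrix(n_nodes):
    """Doubly stochastic gossip matrix for a ring (assumed topology):
    each node averages itself and its two neighbors with weight 1/3."""
    W = np.zeros((n_nodes, n_nodes))
    for i in range(n_nodes):
        for j in (i - 1, i, i + 1):
            W[i, j % n_nodes] += 1.0 / 3.0
    return W


def make_linear_data(n_nodes=4, n_local=20, dim=3, seed=1):
    """Hypothetical noiseless linear-regression data, one local dataset per node."""
    rng = np.random.default_rng(seed)
    theta_star = rng.normal(size=dim)
    X = rng.normal(size=(n_nodes, n_local, dim))
    y = X @ theta_star  # shape (n_nodes, n_local)
    return X, y, theta_star


def decentralized_markov_sgd(X, y, steps=5000, lr=0.05, stay_prob=0.5, seed=0):
    """Each node samples its local data along a Markov chain (a lazy random
    walk on the cycle of local indices), takes a local gradient step, and
    averages its model with its ring neighbors."""
    rng = np.random.default_rng(seed)
    n_nodes, n_local, dim = X.shape
    W = ring_mixing_matrix(n_nodes)
    theta = np.zeros((n_nodes, dim))             # one model copy per node
    state = rng.integers(n_local, size=n_nodes)  # current chain state per node
    nodes = np.arange(n_nodes)
    for _ in range(steps):
        # Markov sampling: stay with probability stay_prob, otherwise move
        # to an adjacent index, so consecutive samples are correlated.
        move = rng.random(n_nodes) >= stay_prob
        direction = rng.choice([-1, 1], size=n_nodes)
        state = (state + move * direction) % n_local
        xi = X[nodes, state]                     # (n_nodes, dim)
        yi = y[nodes, state]                     # (n_nodes,)
        # Gradient of 0.5 * (x . theta - y)^2 at each node's current model.
        resid = np.einsum("nd,nd->n", xi, theta) - yi
        grads = resid[:, None] * xi
        # Gossip averaging combined with the local gradient step.
        theta = W @ theta - lr * grads
    return theta
```

In this sketch, `stay_prob` controls how slowly each node's chain mixes and `W` encodes the communication graph; these are the two ingredients (mixing properties and network topology) that the summary says enter the generalization bounds. The minimax (SGDA) case would add an ascent step on a dual variable and is not shown here.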

Related Articles

The 55.6% problem: why frontier LLMs fail at embedded code

Dev.to

Four CVEs in a week, all the same shape: when agents execute LLM-generated code

Dev.to

Healthcare AI Is Absorbing Institutional Knowledge It Can't Actually Hold

Reddit r/artificial

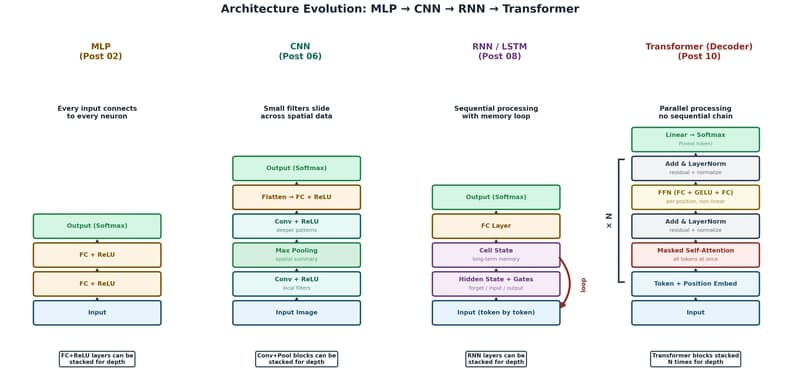

The Transformer: The Architecture Behind Modern AI

Dev.to

Foundational Models Defining a New Era in Vision: A Survey and Outlook

Dev.to