ShobdoSetu: A Data-Centric Framework for Bengali Long-Form Speech Recognition and Speaker Diarization

arXiv cs.CL / 3/23/2026


Key Points

- ShobdoSetu presents a data-centric framework for Bengali long-form automatic speech recognition and speaker diarization, addressing the language's under-resourced status.

- The approach builds a high-quality training corpus from Bengali YouTube audiobooks and dramas, incorporating LLM-assisted language normalization, fuzzy-matching-based chunk boundary validation, and muffled-zone augmentation.

- The authors fine-tune the tugstugi/whisper-medium model on roughly 21,000 data points and decode with beam size 5, achieving a WER of 16.751 on the public leaderboard and 15.551 on the private test set.

- For diarization, they fine-tune the pyannote.audio segmentation model in an extreme low-resource setting (10 training files), achieving a DER of 0.19974 on the public leaderboard and 0.26723 on the private test set.

- The results suggest that careful data engineering and domain-adaptive fine-tuning can yield competitive Bengali speech processing performance without relying on large annotated corpora.
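
For readers unfamiliar with the two metrics quoted above, the sketch below shows their standard textbook definitions: WER as word-level edit distance divided by the number of reference words, and DER as the summed duration of missed speech, false alarms, and speaker confusion divided by total reference speech. This is not the leaderboard's scoring code; the function names `word_error_rate` and `diarization_error_rate` are hypothetical, and details such as text normalization or forgiveness collars are assumed away.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count.

    Words are split on whitespace, which is an assumption; a real scorer
    may normalize punctuation or numerals first.
    """
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] = edit distance between the reference prefix seen so far
    # and the first j hypothesis words (rolling one-row Levenshtein table).
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)


def diarization_error_rate(missed: float, false_alarm: float,
                           confusion: float, total_reference: float) -> float:
    """DER = (missed + false alarm + speaker confusion) / total reference speech.

    All arguments are durations in seconds.
    """
    if total_reference <= 0:
        raise ValueError("total_reference must be positive")
    return (missed + false_alarm + confusion) / total_reference


if __name__ == "__main__":
    # One substitution and one deletion against a four-word reference.
    print(word_error_rate("আমি আজ ভাত খাই", "আমি কাল ভাত"))
    # Two seconds of errors over ten seconds of reference speech.
    print(diarization_error_rate(1.0, 0.5, 0.5, 10.0))
```

Both functions return fractions. The DER figures above are on that scale, while the WER figures appear to be percentages (an assumption based on their magnitude), so multiply the WER result by 100 before comparing.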

Related Articles

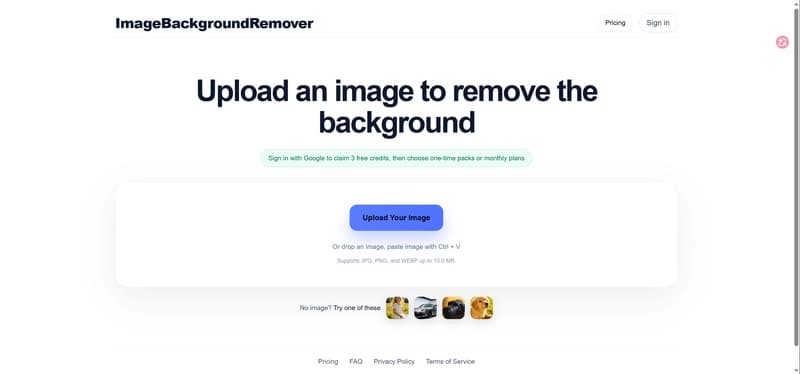

I built an online background remover and learned a lot from launching it

Dev.to

How AI is Transforming Dynamics 365 Business Central

Dev.to

Algorithmic Gaslighting: A Formal Legal Template to Fight AI Safety Pivots That Cause Psychological Harm

Reddit r/artificial

Do I need different approaches for different types of business information errors?

Dev.to

ShieldCortex: What We Learned Protecting AI Agent Memory

Dev.to