Hi r/LocalLLaMA! I work at Reka and organized our AMA last month. Some of y'all asked for llama.cpp support, so here's a follow-up: Reka Edge 2603 is now supported upstream in llama.cpp.

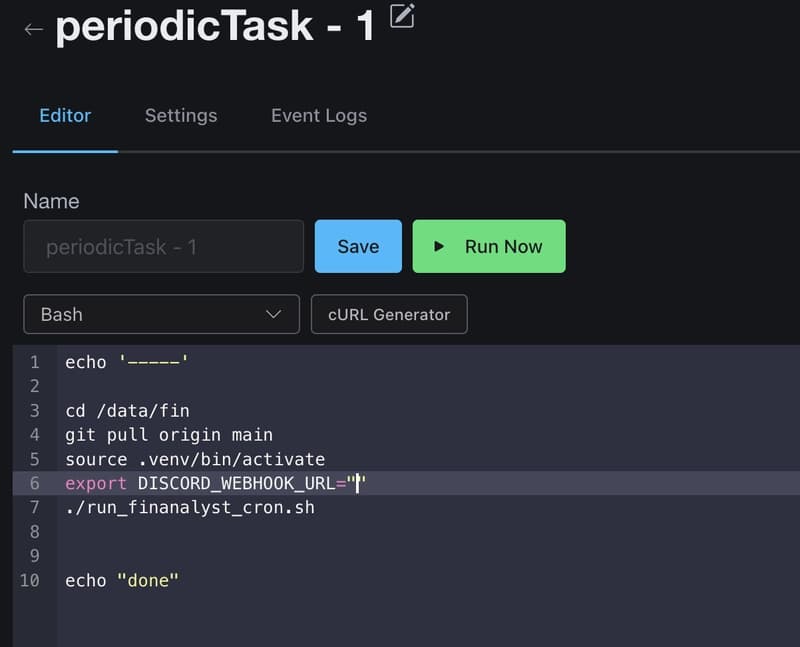

To get started:

- Download the Reka Edge 2603 weights from the Hugging Face (HF) repo

- Run the GGUF conversion script from the llama.cpp repo root

- (optional) Use the quantization script for the text decoder

One note: the model does not currently support reasoning, so run llama-server with `--reasoning off`. Happy hacking!
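For anyone who wants the steps above as concrete commands, here is a rough sketch that only builds and prints the command lines (it does not run anything). Assumptions on my part, not confirmed in this post: the conversion script is llama.cpp's `convert_hf_to_gguf.py`, quantization goes through the stock `llama-quantize` tool with `Q4_K_M` as an example type, and the local paths and file names are hypothetical placeholders. The `--reasoning off` flag is taken from the note above. Multimodal models may need extra conversion steps that this sketch does not cover.

```python
import shlex

# Hypothetical local paths and file names; adjust to your setup.
MODEL_DIR = "./reka-edge-2603"              # folder holding the downloaded HF weights
F16_GGUF = "reka-edge-2603-f16.gguf"        # output of the conversion step
QUANT_GGUF = "reka-edge-2603-Q4_K_M.gguf"   # output of the optional quantization step


def build_commands(model_dir=MODEL_DIR, f16=F16_GGUF, quant=QUANT_GGUF,
                   quant_type="Q4_K_M"):
    """Return the convert, quantize, and serve commands as argument lists."""
    # Assumed script name: llama.cpp's HF-to-GGUF converter, run from the repo root.
    convert = ["python", "convert_hf_to_gguf.py", model_dir,
               "--outfile", f16, "--outtype", "f16"]
    # Assumed tool: llama.cpp's stock quantizer (input, output, type).
    quantize = ["llama-quantize", f16, quant, quant_type]
    # Reasoning is switched off, as the post recommends.
    serve = ["llama-server", "-m", quant, "--reasoning", "off"]
    return [convert, quantize, serve]


if __name__ == "__main__":
    # Dry run: print the commands so you can review them before running any.
    for cmd in build_commands():
        print(shlex.join(cmd))
```

If you skip quantization, point `llama-server -m` at the f16 file instead.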
