How to Approximate Inference with Subtractive Mixture Models

arXiv cs.LG / 4/21/2026


Key Points

- Subtractive Mixture Models (SMMs) extend classical mixture models by allowing negative coefficients, offering potentially greater expressiveness for approximate inference.

- The paper tackles a key obstacle: SMMs lack latent-variable semantics, which prevents directly reusing the sampling methods commonly used for classical mixture models in variational inference (VI) and importance sampling (IS).

- It proposes multiple expectation estimators for IS and learning schemes for VI that enable practical use of SMMs despite the missing semantics.

- The authors empirically evaluate these methods on distribution-approximation tasks and highlight additional challenges concerning estimation stability and learning efficiency.

- They also discuss strategies to mitigate these challenges and release code in a GitHub repository referenced in the paper.
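A small example makes the missing latent-variable semantics concrete. The sketch below is a hypothetical illustration, not the paper's estimators or code. It assumes a one-dimensional SMM with one positive and one negative Gaussian component. The constants `S1`, `S2`, `A`, `B` and all function names are made up for this example. Because the coefficient `-B` is negative, the usual ancestral scheme is undefined: you cannot pick component k with probability w_k and then sample from it. The code instead shows two generic ways to estimate an expectation E_p[f]. The first is a signed combination of per-component Monte Carlo means. The second is importance sampling with the positive component as the proposal.

```python
import math
import random

# Hypothetical two-component SMM on the real line (illustrative assumption):
#   p(x) = A * N(x; 0, S1^2) - B * N(x; 0, S2^2)
# The negative coefficient -B is what makes this a *subtractive* mixture.
S1, S2 = 2.0, 1.0   # component standard deviations (S2 < S1)
B = 0.5             # weight of the subtracted (negative) component
A = 1.0 + B         # positive weight chosen so A - B = 1, i.e. p integrates to 1
# Nonnegativity: N(x;0,S2^2) / N(x;0,S1^2) peaks at x = 0 with value S1/S2,
# so p(x) >= 0 everywhere iff A >= B * S1 / S2 (here 1.5 >= 1.0).
assert A >= B * S1 / S2


def normal_pdf(x, mu, sigma):
    """Density of N(mu, sigma^2) at x."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def smm_pdf(x):
    """Density of the subtractive mixture (nonnegative under the constraint above)."""
    return A * normal_pdf(x, 0.0, S1) - B * normal_pdf(x, 0.0, S2)


def signed_component_estimate(f, n, rng):
    """Estimate E_p[f] = A * E_{q1}[f] - B * E_{q2}[f] by sampling each
    component separately and combining the means with the signed weights."""
    pos = sum(f(rng.gauss(0.0, S1)) for _ in range(n)) / n
    neg = sum(f(rng.gauss(0.0, S2)) for _ in range(n)) / n
    return A * pos - B * neg


def importance_sampling_estimate(f, n, rng):
    """Estimate E_p[f] by importance sampling with proposal q(x) = N(x; 0, S1^2).
    The weights p(x)/q(x) = A - B * N(x;0,S2^2)/N(x;0,S1^2) lie in [0, A]."""
    total = 0.0
    for _ in range(n):
        x = rng.gauss(0.0, S1)
        total += smm_pdf(x) / normal_pdf(x, 0.0, S1) * f(x)
    return total / n


def second_moment(x):
    return x * x


if __name__ == "__main__":
    rng = random.Random(0)
    exact = A * S1**2 - B * S2**2  # E_p[x^2] by linearity: 1.5*4 - 0.5*1 = 5.5
    print("exact              :", exact)
    print("signed components  :", signed_component_estimate(second_moment, 100_000, rng))
    print("importance sampling:", importance_sampling_estimate(second_moment, 100_000, rng))
```

Both estimators are unbiased in this toy setting. The signed estimator's variance grows with A² and B², so heavy cancellation between large positive and negative weights can make it unstable. For this particular proposal, the importance weights are bounded in [0, A], which keeps their variance under control. This is only one simple instance of the kind of estimation-stability issue described above.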

Related Articles

Add cryptographic authorization to AI agents in 5 minutes

Dev.to

Building a website with Replit and Vercel

Dev.to

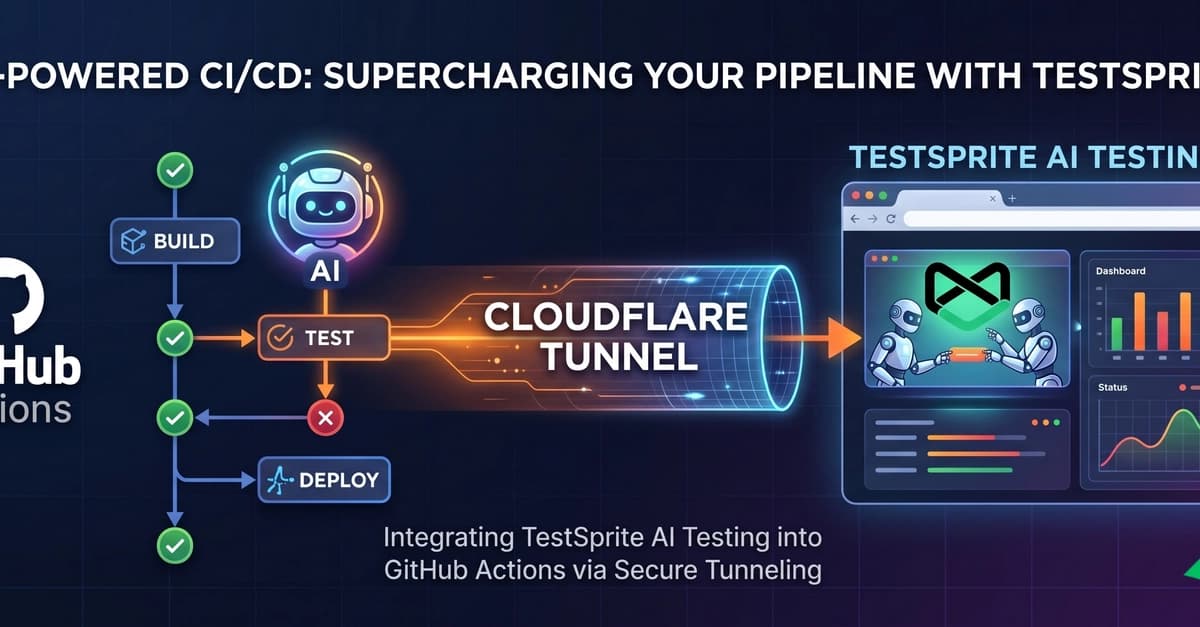

Supercharging Your CI/CD: Integrating TestSprite AI Testing with GitHub Actions

Dev.to

Claude and I aren't vibing at all

Dev.to

The ULTIMATE Guide to AI Voice Cloning: RVC WebUI (Zero to Hero)

Dev.to