How to Count AIs: Individuation and Liability for AI Agents

arXiv cs.AI / 3/12/2026

💬 Opinion · Ideas & Deep Analysis

Key Points

- The paper argues that as AI agents proliferate, identifying which AI caused a given action becomes essential for accountability. It remains difficult, though, because AIs can copy, split, merge, swarm, or vanish, and they often operate as ensembles of models.

- It distinguishes two kinds of identification, both foundational for liability:
  - thin identification, which links each AI action to a human principal;
  - thick identification, which distinguishes AI agents as persistent units with coherent goals.

- It proposes the Algorithmic Corporation (A-corp), a legal fiction that is owned by humans but run by AIs. An A-corp can own property, enter contracts, and sue or be sued.

- The A-corp is designed to resolve both thin and thick identity problems. It ties AI actions to the A-corp's owners, and it shapes how AIs self-organize so that they have incentives toward goal alignment.

- The paper argues that in equilibrium A-corps would self-organize into persistent, legally legible entities that respond to liability laws, potentially reshaping accountability for AI actions.

Related Articles

MCP Is Quietly Replacing APIs — And Most Developers Haven't Noticed Yet

Dev.to

I Built a Self-Healing AI Trading Bot That Learns From Every Failure

Dev.to

Stop Guessing Your API Costs: Track LLM Tokens in Real Time

Dev.to

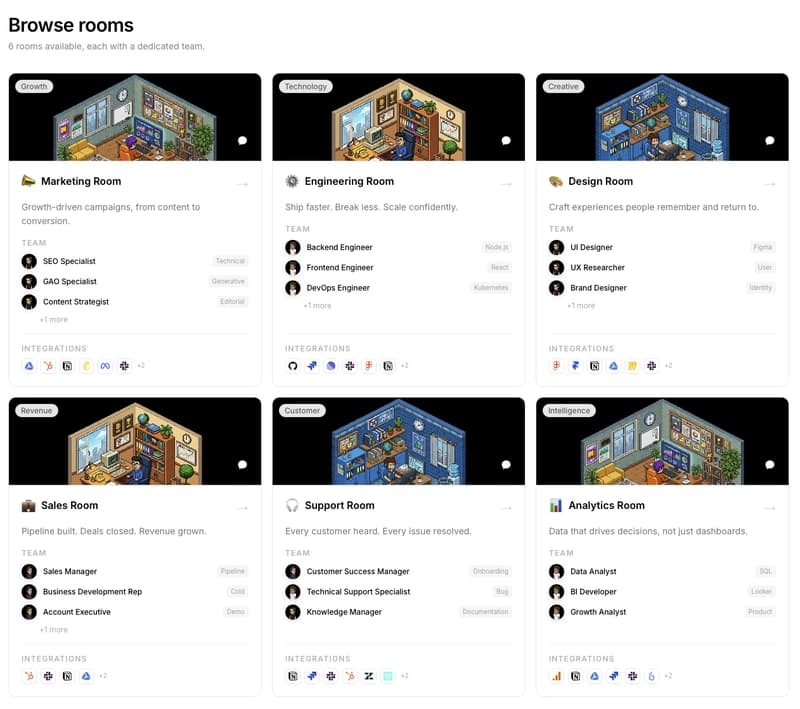

We are building PixelRooms! The marketplace of AI teams for thepixeloffice.ai

Dev.to

Every real estate agent tool worth your time in 2026, ranked and rated

Dev.to