Efficient Agent Evaluation via Diversity-Guided User Simulation

arXiv cs.AI / 4/25/2026


Key Points

- The paper argues that LLM-based customer-facing agents are hard to evaluate: their interactions are multi-turn and stochastic, so a reliable assessment has to explore many possible conversations.

- It points out two weaknesses of existing linear Monte Carlo rollouts: they are computationally inefficient because they repeatedly regenerate the same early conversation prefixes, and they may miss rare but important user behaviors.

- The authors introduce DIVERT, a snapshot-based, coverage-guided user simulation framework that saves full agent-environment state at key decision points and resumes from those snapshots.

- DIVERT branches from “junctions” using diversity-inducing user responses to systematically explore alternative interaction trajectories, improving both efficiency and coverage.

- Experiments indicate DIVERT finds more failures per token than standard rollout methods and identifies them across a broader set of tasks.
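
To make the cost argument in the points above concrete, here is a minimal toy sketch of snapshot-and-branch exploration next to plain linear rollouts. It is not the authors' implementation. `ToySession`, `pick_diverse`, `linear_rollouts`, and `branched_rollouts` are hypothetical names, the agent is a stub that echoes the user, simulated turns stand in for tokens, and word-level Jaccard distance is only an assumed placeholder for whatever diversity criterion DIVERT actually uses.

```python
import copy


class ToySession:
    """Hypothetical stand-in for the full agent-environment state."""

    def __init__(self):
        self.history = []     # (user_msg, agent_msg) pairs
        self.turns_spent = 0  # crude proxy for tokens generated

    def step(self, user_msg):
        # A real system would call the agent LLM and environment here.
        self.history.append((user_msg, f"agent reply to: {user_msg}"))
        self.turns_spent += 1


def jaccard_distance(a, b):
    """1 - |A & B| / |A | B| over lowercase word sets (assumed diversity measure)."""
    wa, wb = set(a.lower().split()), set(b.lower().split())
    union = wa | wb
    return 1.0 - len(wa & wb) / len(union) if union else 0.0


def pick_diverse(candidates, k):
    """Greedy farthest-point selection over a non-empty list of candidate
    user responses: keep adding the candidate whose nearest already-chosen
    neighbour is farthest away."""
    chosen = [candidates[0]]
    while len(chosen) < k:
        remaining = [c for c in candidates if c not in chosen]
        if not remaining:
            break
        chosen.append(
            max(remaining, key=lambda c: min(jaccard_distance(c, s) for s in chosen))
        )
    return chosen


def linear_rollouts(prefix, user_msgs):
    """Baseline: every rollout regenerates the shared prefix from scratch."""
    sessions, total = [], 0
    for msg in user_msgs:
        session = ToySession()
        for p in prefix:
            session.step(p)
        session.step(msg)
        total += session.turns_spent
        sessions.append(session)
    return sessions, total


def branched_rollouts(prefix, user_msgs):
    """Snapshot-based: play the prefix once, then resume a copy per branch."""
    root = ToySession()
    for p in prefix:
        root.step(p)
    snapshot = copy.deepcopy(root)   # saved state at the "junction"
    total = root.turns_spent         # the prefix is paid for only once
    sessions = []
    for msg in user_msgs:
        branch = copy.deepcopy(snapshot)
        branch.step(msg)
        total += 1                   # only the new turn is generated
        sessions.append(branch)
    return sessions, total


prefix = ["hello", "I have a problem with my order", "order number 123"]
candidates = [
    "I want a refund",
    "I want a refund now",          # near-duplicate of the first candidate
    "cancel my account please",
    "what is the weather",
]
picked = pick_diverse(candidates, 3)
_, linear_cost = linear_rollouts(prefix, picked)
_, branched_cost = branched_rollouts(prefix, picked)
print(picked)                       # the near-duplicate is skipped
print(linear_cost, branched_cost)   # 12 6
```

With a three-turn prefix and three branches, the linear baseline simulates 12 turns while the branched version simulates 6 and ends in the same conversation histories. In this toy the saving comes entirely from not replaying the prefix, and the diversity filter only spends the remaining budget on responses that differ from each other.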

Related Articles

Underwhelming or underrated? DeepSeek V4 shows “impressive” gains

SCMP Tech

Debugging AI Agents in Production: ADK+Gemini Cloud Assist | Google Cloud NEXT '26

Dev.to

🤖 Learn Harness Engineering by Building a Mini Openclaw 🦞

Dev.to

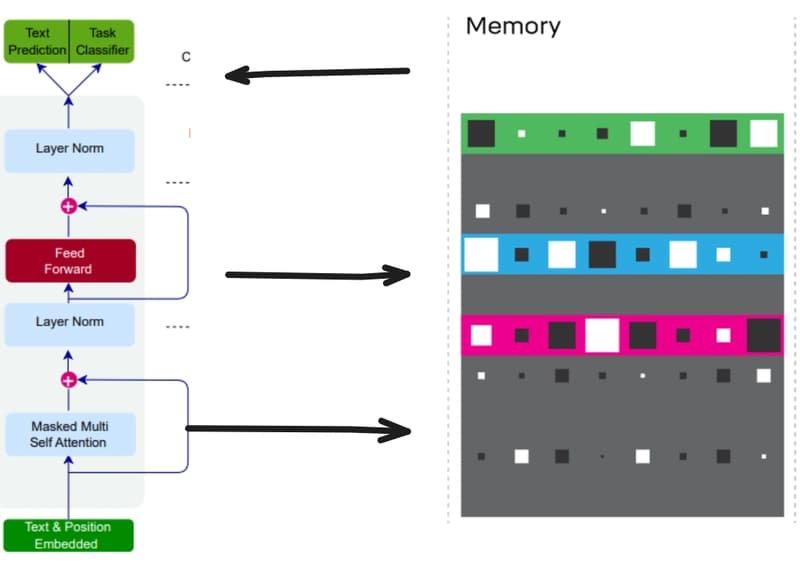

Teaching Small Language Models to Remember: Giving LLMs a Notebook with Differentiable Neural Computers

Dev.to

![Training LFM-2.5-350M on Reddit post summarization with GRPO on my 3x Mac Minis — final evals and t-test evals are here [P]](/_next/image?url=https%3A%2F%2Fpreview.redd.it%2Fzynqkm0osaxg1.png%3Fwidth%3D140%26height%3D76%26auto%3Dwebp%26s%3De827ef782e46b56a11f263b7689811da72904ba9&w=3840&q=75)

Training LFM-2.5-350M on Reddit post summarization with GRPO on my 3x Mac Minis — final evals and t-test evals are here [P]

Reddit r/MachineLearning