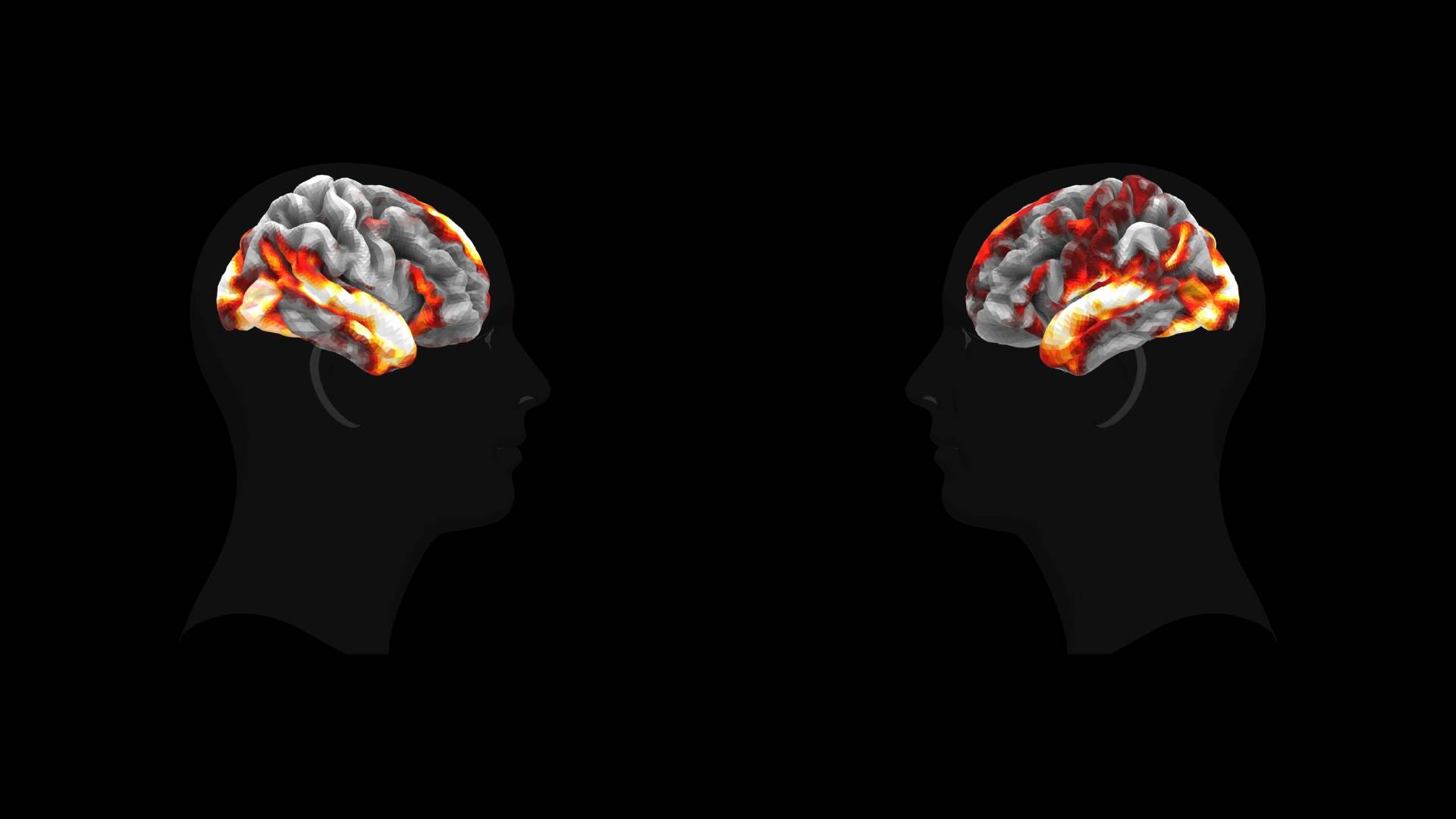

Meta built an AI model that predicts how the human brain reacts to images, sounds, and speech. In tests, its predictions matched the typical brain response more closely than an actual scan of any single person.

The article "Meta's new AI model predicts how your brain reacts to images, sounds, and speech" appeared first on The Decoder.