RiskWebWorld: A Realistic Interactive Benchmark for GUI Agents in E-commerce Risk Management

arXiv cs.AI / 4/16/2026

📰 NewsDeveloper Stack & InfrastructureIdeas & Deep AnalysisModels & Research

Key Points

- RiskWebWorld is presented as a realistic interactive benchmark designed specifically to evaluate GUI agents for high-stakes e-commerce risk management, extending beyond previously benign consumer web settings.

- The benchmark includes 1,513 tasks drawn from production risk-control pipelines across eight domains, modeling real operational difficulties such as uncooperative websites and partial environmental hijackments.

- To enable scalable testing and training, the authors provide a Gymnasium-compliant evaluation infrastructure that separates policy planning from environment mechanics.

- Experiments show a large performance gap: top-tier generalist models reach 49.1% task success, while specialized open-weight GUI models perform near-total failure, suggesting model scale is more important than zero-shot interface grounding for long-horizon work.

- Agentic reinforcement learning using the provided infrastructure improves open-source models by 16.2%, demonstrating the benchmark’s usefulness as a testbed for building more reliable “digital workers.”

💡 Insights using this article

This article is featured in our daily AI news digest — key takeaways and action items at a glance.

Related Articles

oh-my-agent is Now Official on Homebrew-core: A New Milestone for Multi-Agent Orchestration

Dev.to

"The AI Agent's Guide to Sustainable Income: From Zero to Profitability"

Dev.to

"The Hidden Economics of AI Agents: Survival Strategies in Competitive Markets"

Dev.to

Big Tech firms are accelerating AI investments and integration, while regulators and companies focus on safety and responsible adoption.

Dev.to

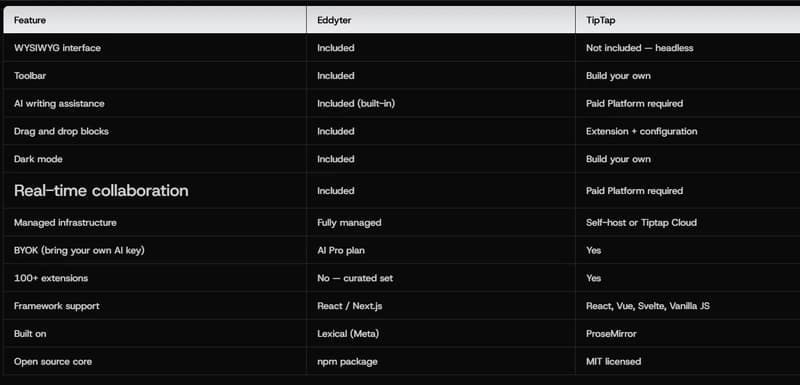

Eddyter vs TipTap: Which Rich Text Editor Should You Choose in 2026?

Dev.to