Weights: tencent/Hy3-preview · Hugging Face

Tencent Releases Hy3 Preview: Open-Source 295B MoE with 21B Active Parameters

Reddit r/LocalLLaMA / 4/23/2026

📰 News · Signals & Early Trends · Tools & Practical Usage · Industry & Market Moves · Models & Research

Key Points

- Tencent has released a preview version of its Hy3 model as open-source weights, available via Hugging Face.

- The model is described as a Mixture-of-Experts (MoE) with 295B total parameters, of which about 21B are active per token during inference.

- The model weights can be downloaded and used by developers and the broader open-source community for experimentation and integration.

- The preview status suggests it is intended for early testing rather than a final, fully stabilized release.
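The split between total and active parameters matters for anyone planning to run the weights. In a typical MoE deployment all 295B parameters have to be held in memory (or offloaded), while only about 21B take part in each token's forward pass. The sketch below works through that arithmetic using only the two headline figures. The bytes-per-parameter values for each precision and the "about 2 FLOPs per active parameter per token" heuristic are general rules of thumb, not numbers from the release. The memory figure also covers weights only, leaving out KV cache, activations and runtime overhead.

```python
# Back-of-envelope arithmetic for an MoE model, using the headline figures
# reported for the release: 295B total parameters, ~21B active per token.
TOTAL_PARAMS = 295e9
ACTIVE_PARAMS = 21e9

# Assumed storage widths in bytes per parameter. These are generic values,
# and the published checkpoint may use a different format.
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def active_fraction(total: float, active: float) -> float:
    """Share of all parameters that are used for a single token."""
    return active / total


def weight_memory_gb(params: float, bytes_per_param: float) -> float:
    """Memory needed for the weights alone, in decimal gigabytes (1e9 bytes).

    Ignores KV cache, activations and framework overhead.
    """
    return params * bytes_per_param / 1e9


def forward_flops_per_token(active: float) -> float:
    """Rough rule of thumb: ~2 FLOPs per active parameter per token."""
    return 2 * active


if __name__ == "__main__":
    frac = active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS)
    print(f"active fraction per token: {frac:.1%}")
    for name, width in BYTES_PER_PARAM.items():
        gb = weight_memory_gb(TOTAL_PARAMS, width)
        print(f"{name}: ~{gb:.0f} GB for weights")
    print(f"~{forward_flops_per_token(ACTIVE_PARAMS):.1e} FLOPs per token")
```

The calculation makes the usual MoE trade-off visible. Per-token compute scales with the roughly 7% of parameters that are active, so it resembles a ~21B dense model. The memory footprint, however, still scales with the full 295B.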

Related Articles

Black Hat USA

AI Business

AI productivity tools 2026: top 10 tools for remote teams

Dev.to

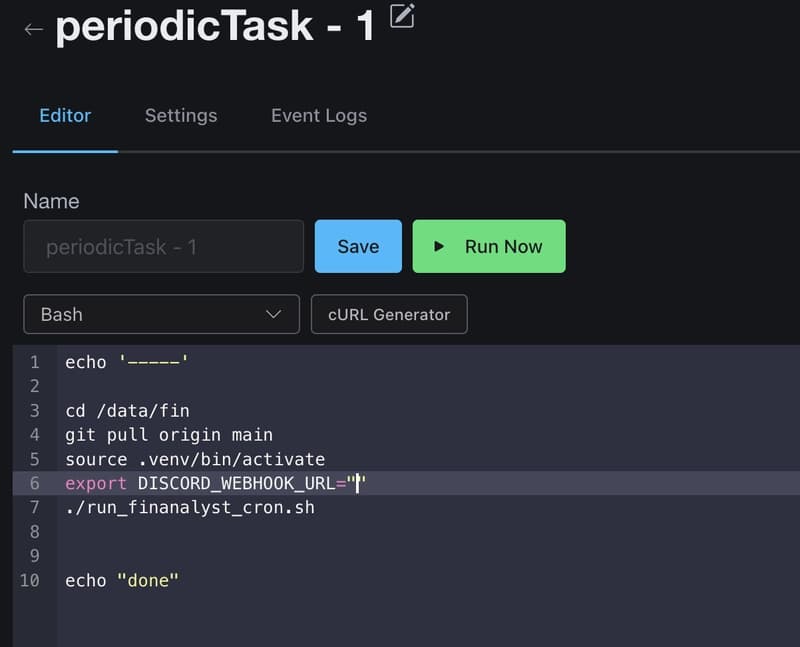

How I Use GitHub Copilot + RapidForge to Generate Daily Stock Ideas

Dev.to

Big Tech firms are accelerating AI investments and integration, while regulators and companies focus on safety and responsible adoption.

Dev.to

Anthropic CVP Run 3 — Does Claude's Safety Stack Scale Down to Haiku 4.5?

Dev.to