Robust LLM Performance Certification via Constrained Maximum Likelihood Estimation

arXiv cs.CL / 4/7/2026

💬 OpinionSignals & Early TrendsIdeas & Deep AnalysisModels & Research

Key Points

- The paper addresses the challenge of certifying LLM failure rates when human-labeled “gold” data is expensive and automatically labeled data (e.g., LLM-as-a-Judge) can be biased.

- It proposes a constrained maximum-likelihood estimation framework that fuses three inputs: a small high-quality human calibration set, a large set of judge annotations, and domain-specific constraints based on known bounds of judge performance statistics.

- Empirical results across multiple experimental conditions (judge accuracy, calibration size, and underlying LLM failure rate) show the constrained MLE approach achieves higher accuracy and lower variance than prior baselines such as Prediction-Powered Inference (PPI).

- The authors emphasize that the method replaces “black-box” judge usage with a more interpretable, scalable, and principled pathway for LLM failure-rate certification.

💡 Insights using this article

This article is featured in our daily AI news digest — key takeaways and action items at a glance.

Related Articles

Black Hat Asia

AI Business

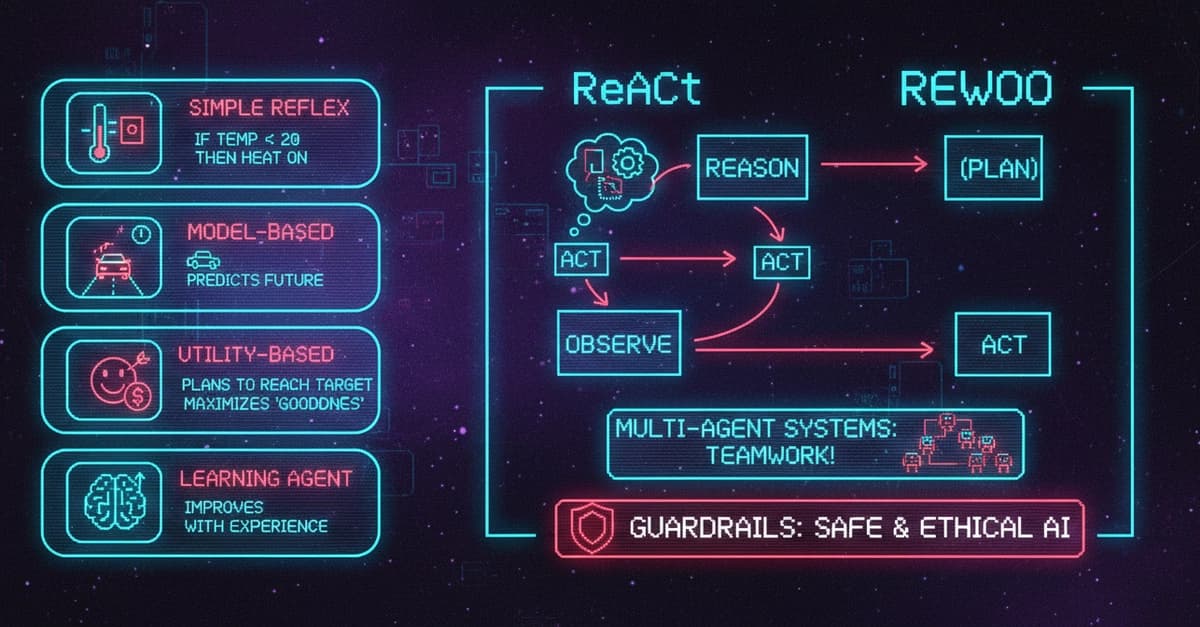

AI Agents Explained: 5 Types, Components, Frameworks, and Real-World Use Cases

Dev.to

Edge-to-Cloud Swarm Coordination for circular manufacturing supply chains with embodied agent feedback loops

Dev.to

Why QIS Is Not a Sync Problem: The Mailbox Model for Distributed Intelligence

Dev.to

The Ethics of AI: A Developer's Responsibility

Dev.to