SpikeMLLM: Spike-based Multimodal Large Language Models via Modality-Specific Temporal Scales and Temporal Compression

arXiv cs.AI / 4/22/2026

Key Points

- SpikeMLLM is a new spike-based framework for Multimodal Large Language Models (MLLMs) aimed at reducing inference compute and energy use in resource-constrained settings.

- It addresses key spiking challenges for multimodality by using Modality-Specific Temporal Scales (MSTS) guided by Modality Evolution Discrepancy (MED), instead of relying on uniform spike encoding.

- The method introduces Temporally Compressed LIF (TC-LIF), which compresses the number of timesteps from T = L - 1 down to T = log2(L) - 1, cutting the high overhead of unfolding high-resolution image inputs over time.

- Experiments across four MLLMs and multiple multimodal benchmarks show near-lossless accuracy even under aggressive timestep compression (Tv/Tt=3/4), with small performance gaps versus FP16 baselines.

- A dedicated RTL accelerator designed for the spike datapath achieves 9.06x higher throughput and 25.8x better power efficiency than an FP16 GPU baseline in a co-design deployment setup.
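
To make the timestep compression in the TC-LIF point concrete, the sketch below evaluates the two expressions quoted above, T = L - 1 and T = log2(L) - 1, for a few values of L. The summary does not define L, so the code treats it as an abstract level count and assumes it is a power of two, at least 4, so that the logarithm is a positive integer. The function names are hypothetical and are not taken from the paper.

```python
def uncompressed_timesteps(levels: int) -> int:
    """Timesteps before compression: T = L - 1."""
    return levels - 1


def compressed_timesteps(levels: int) -> int:
    """Timesteps after compression: T = log2(L) - 1.

    Assumption: L is a power of two and at least 4, so the result is a
    positive integer. The summary itself does not state this constraint.
    """
    if levels < 4 or levels & (levels - 1):
        raise ValueError("levels must be a power of two and at least 4")
    # For a power of two, bit_length() - 1 equals log2(levels).
    return levels.bit_length() - 2


# Compare both timestep counts for a few illustrative values of L.
for levels in (4, 8, 16, 32, 64):
    before = uncompressed_timesteps(levels)
    after = compressed_timesteps(levels)
    print(f"L={levels:>2}: T = L - 1 = {before:>2}, T = log2(L) - 1 = {after}")
```

Under these formulas, L = 16 and L = 32 give compressed timestep counts of 3 and 4. Whether these are the settings behind the Tv/Tt = 3/4 configuration in the summary is an assumption, not something the summary states.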

Related Articles

How I Use GitHub Copilot + RapidForge to Generate Daily Stock Ideas

Dev.to

Anthropic CVP Run 3 — Does Claude's Safety Stack Scale Down to Haiku 4.5?

Dev.to

Mend.io Releases AI Security Governance Framework Covering Asset Inventory, Risk Tiering, AI Supply Chain Security, and Maturity Model

MarkTechPost

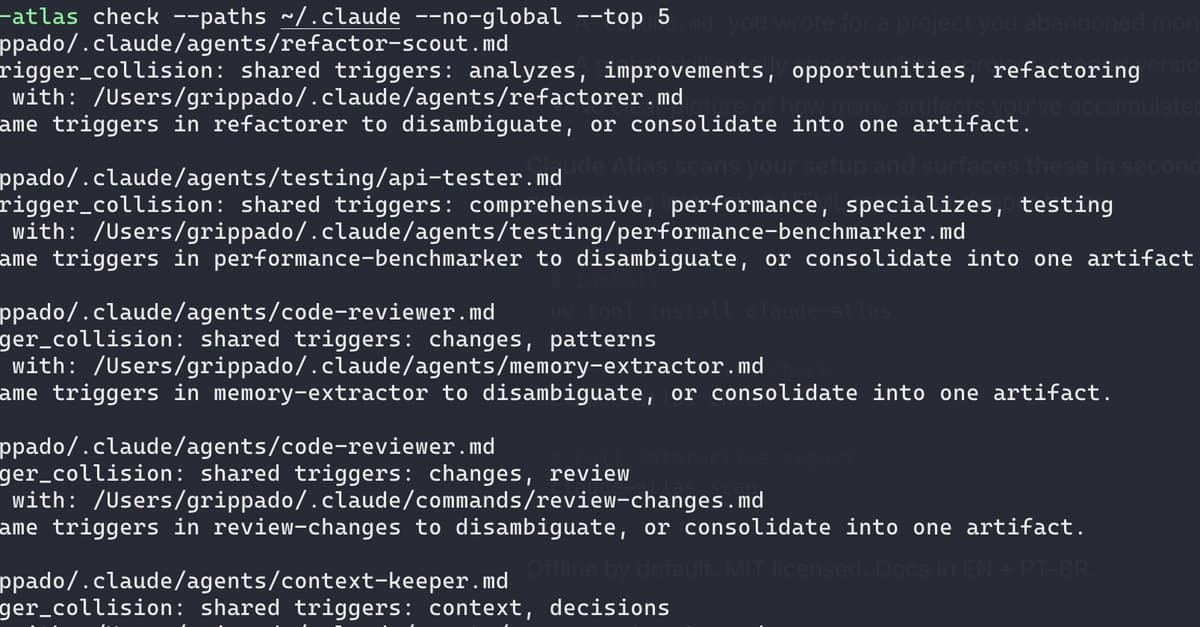

I audited my own Claude Code setup and found 21 issues in 72 artifacts

Dev.to

Design Patterns for Prompt Engineering: Toward a Formal Discipline

Dev.to