Open, Reliable, and Collective: A Community-Driven Framework for Tool-Using AI Agents

arXiv cs.AI / 4/2/2026


Key Points

- The paper argues that reliability problems in tool-using LLM agents have two sources: tool-invocation accuracy (whether the agent correctly decides when and how to call a tool) and intrinsic tool accuracy (whether the tool itself returns correct results). It notes that prior research has focused mainly on the former.

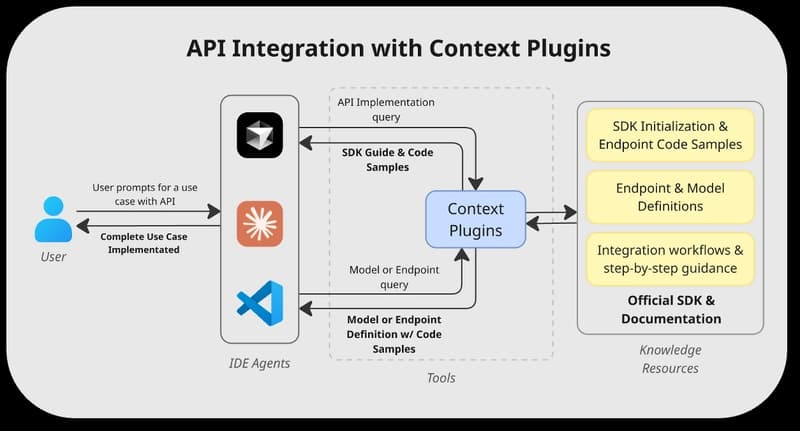

- It introduces OpenTools, a community-driven framework that standardizes tool schemas and offers plug-and-play wrappers to make tools easier to integrate across agent architectures.

- OpenTools evaluates tools using automated test suites plus continuous monitoring, and publishes reliability reports that can evolve as tools change.

- The authors also release a public web demo with predefined agents and tools, allowing users to run tasks and contribute test cases to improve coverage and evaluation.

- Experiments reportedly show improved end-to-end reproducibility and task performance, with community-contributed task-specific tools yielding 6%-22% relative gains over an existing toolbox, reinforcing the role of intrinsic tool accuracy.
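
To make the ideas in the key points concrete, here is a minimal Python sketch of a standardized tool schema, a thin wrapper that validates calls against it, and a test-suite runner that produces a small reliability report. It is not OpenTools' actual API: `ToolSpec`, `ToolTestCase`, `reliability_report`, and both example tools are hypothetical names chosen for illustration, and the schema is assumed to be a simple mapping from parameter names to Python types.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class ToolSpec:
    """Hypothetical standardized schema: a name, a description, typed parameters."""
    name: str
    description: str
    parameters: dict[str, type]
    func: Callable[..., Any]

    def __call__(self, **kwargs: Any) -> Any:
        # Reject calls that do not match the declared schema, so invocation
        # errors are caught before they reach the underlying tool.
        if set(kwargs) != set(self.parameters):
            raise TypeError(f"{self.name}: expected parameters {sorted(self.parameters)}")
        for key, expected_type in self.parameters.items():
            if not isinstance(kwargs[key], expected_type):
                raise TypeError(f"{self.name}: {key} must be {expected_type.__name__}")
        return self.func(**kwargs)


@dataclass
class ToolTestCase:
    """One test case, as a community member might contribute it (assumed shape)."""
    inputs: dict[str, Any]
    expected: Any


def reliability_report(tool: ToolSpec, cases: list[ToolTestCase]) -> dict[str, Any]:
    """Run every case against the tool and summarize how many passed."""
    passed = 0
    for case in cases:
        try:
            if tool(**case.inputs) == case.expected:
                passed += 1
        except Exception:
            pass  # A crash or schema violation counts as a failed case.
    return {"tool": tool.name, "cases": len(cases), "passed": passed}


# Two illustrative tools behind the same schema: one exact, one a rough approximation.
exact = ToolSpec(
    name="c_to_f_exact",
    description="Convert Celsius to Fahrenheit.",
    parameters={"celsius": float},
    func=lambda celsius: celsius * 9 / 5 + 32,
)
approx = ToolSpec(
    name="c_to_f_approx",
    description="Convert Celsius to Fahrenheit (double it and add 30).",
    parameters={"celsius": float},
    func=lambda celsius: celsius * 2 + 30,
)

cases = [
    ToolTestCase({"celsius": 0.0}, 32.0),
    ToolTestCase({"celsius": 10.0}, 50.0),
    ToolTestCase({"celsius": 100.0}, 212.0),
]

exact_report = reliability_report(exact, cases)
approx_report = reliability_report(approx, cases)
print(exact_report)
print(approx_report)
```

Both tools are invoked identically and correctly, yet only the exact one passes every case; the approximate one happens to agree at 10 °C and fails elsewhere. That gap is a toy instance of intrinsic tool accuracy, and it also shows why additional contributed test cases matter: a suite containing only the 10 °C case would not distinguish the two tools.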
