On the "Causality" Step in Policy Gradient Derivations: A Pedagogical Reconciliation of Full Return and Reward-to-Go

arXiv cs.AI / 4/7/2026

💬 OpinionIdeas & Deep AnalysisModels & Research

Key Points

- The paper analyzes the commonly cited “causality” step in policy gradient derivations, clarifying precisely why terms from the past portion of the trajectory disappear when moving from full return to reward-to-go.

- It provides an explicit mathematical derivation using prefix trajectory distributions and the score-function identity, rather than relying on a less rigorous heuristic explanation.

- The authors show that using reward-to-go does not alter the resulting REINFORCE-style estimator; the difference is purely in how the objective decomposition reveals the same estimator form.

- Conceptually, reward-to-go is derived directly from decomposing the learning objective over trajectory prefixes, with the standard causality argument emerging only as a corollary.

- Overall, the contribution is pedagogical: it improves rigor and intuition in introductory derivations of policy gradients without changing the underlying algorithm.

Related Articles

Research with ChatGPT

Dev.to

Silicon Valley is quietly running on Chinese open source models and almost nobody is talking about it

Reddit r/LocalLLaMA

Why AI Product Quality Is Now an Evaluation Pipeline Problem, Not a Model Problem

Dev.to

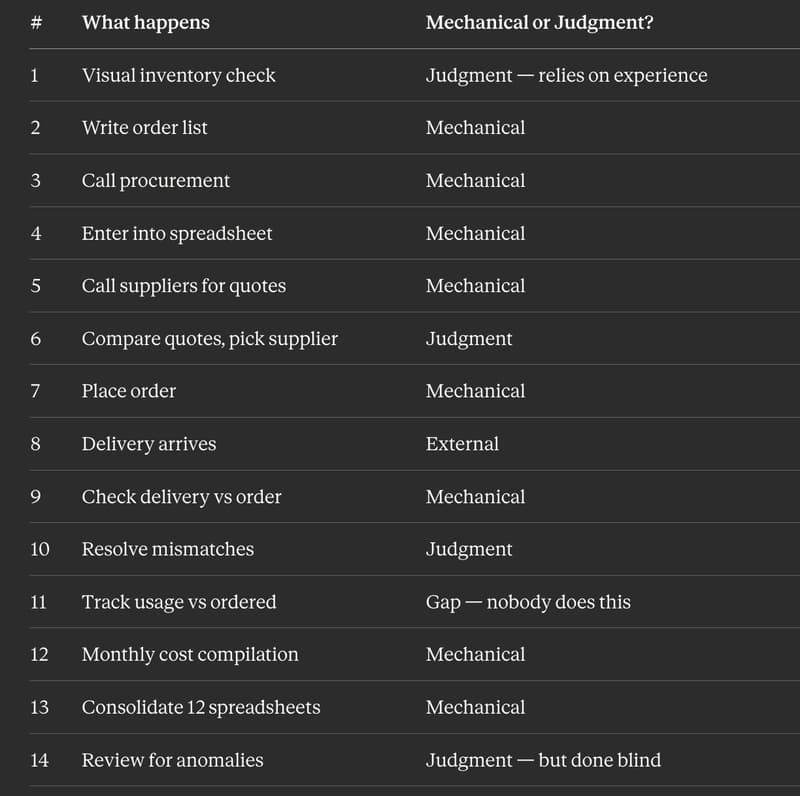

I Replaced 12 Kitchen Managers Guessing "How Much Chicken Do We Need" With 3 ML Models. Here's the Entire Architecture.

Dev.to

AI Model Router API - REST + MCP, Free Tier

Dev.to