Hey guys! I hope this helps everyone.

An Overnight Stack for Qwen3.6-27B: 85 TPS, 125K Context, Vision on One RTX 3090, by Wasif Basharat (Apr 2026)

Reddit r/LocalLLaMA / 4/23/2026

💬 Opinion | Developer Stack & Infrastructure | Signals & Early Trends | Tools & Practical Usage

Key Points

- The post shares an “overnight” setup for running Qwen3.6-27B that reportedly decodes at about 85 tokens per second (TPS).

- It targets a 125K-token context window and includes vision (image-input) capabilities alongside text.

- The entire stack is presented as running on a single consumer GPU: an RTX 3090 with 24 GB of VRAM.

- The content is positioned as a practical guide for local LLM experimentation rather than a formal release or research paper.

- The goal is to help others reproduce the configuration efficiently using available local hardware.
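The post summary does not list the quantization, inference engine or KV-cache settings, so the following is only a back-of-envelope sketch of why 27B weights, a 125K-token context and 85 TPS make a tight fit on a 24 GB card. Only the parameter count (27B), the context length, the decode rate and the 3090's VRAM come from the text; the layer count, KV-head count, head dimension, the ~4.5 bits per weight and the KV-cache precisions are hypothetical placeholders, not Qwen3.6-27B's published configuration.

```python
"""Back-of-envelope VRAM and latency estimates for a 27B model on a 24 GB GPU.

All architecture numbers are HYPOTHETICAL placeholders, not the published
Qwen3.6-27B config; substitute the real values from the model card.
"""
from dataclasses import dataclass

GIB = 1024 ** 3


@dataclass
class ModelShape:
    n_params: float   # total parameter count ("27B" comes from the model name)
    n_layers: int     # layers that keep a KV cache (hypothetical)
    n_kv_heads: int   # key/value heads per layer (hypothetical)
    head_dim: int     # dimension per attention head (hypothetical)


def weight_gib(shape: ModelShape, bits_per_weight: float) -> float:
    """Memory for the weights alone at a given average bits per weight."""
    return shape.n_params * bits_per_weight / 8 / GIB


def kv_cache_gib(shape: ModelShape, context_tokens: int, bytes_per_elem: float) -> float:
    """K and V for every layer, KV head and token: 2 * L * H_kv * d * T * bytes."""
    elems = 2 * shape.n_layers * shape.n_kv_heads * shape.head_dim * context_tokens
    return elems * bytes_per_elem / GIB


def decode_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Wall-clock time to generate n_tokens at a steady decode rate."""
    return n_tokens / tokens_per_second


if __name__ == "__main__":
    # Placeholder shape: only n_params is grounded in the post.
    shape = ModelShape(n_params=27e9, n_layers=64, n_kv_heads=4, head_dim=128)
    vram_gib = 24.0      # RTX 3090: 24 GB (slightly less is usable in practice)
    context = 125_000    # context length reported in the post

    # Assumed ~4-bit quantization, with some overhead for scales.
    weights = weight_gib(shape, bits_per_weight=4.5)
    for label, kv_bytes in [("fp16 KV", 2.0), ("8-bit KV", 1.0)]:
        kv = kv_cache_gib(shape, context, kv_bytes)
        total = weights + kv
        verdict = "fits" if total < vram_gib else "does not fit"
        print(f"{label}: weights {weights:.1f} GiB + KV {kv:.1f} GiB "
              f"= {total:.1f} GiB ({verdict})")

    # At the reported ~85 tokens/s, a 1,000-token answer takes roughly 12 s.
    print(f"1,000 tokens at 85 TPS: {decode_seconds(1_000, 85.0):.1f} s")
```

Keeping the terms separate makes the trade-off visible: weight memory scales with bits per weight, while the KV cache grows linearly with context length, so at 125K tokens the cache precision (or an architecture with fewer cached layers) can decide whether a stack fits on one 24 GB card at all.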

Related Articles

Black Hat USA

AI Business

AI productivity tools 2026: top 10 tools for remote teams

Dev.to

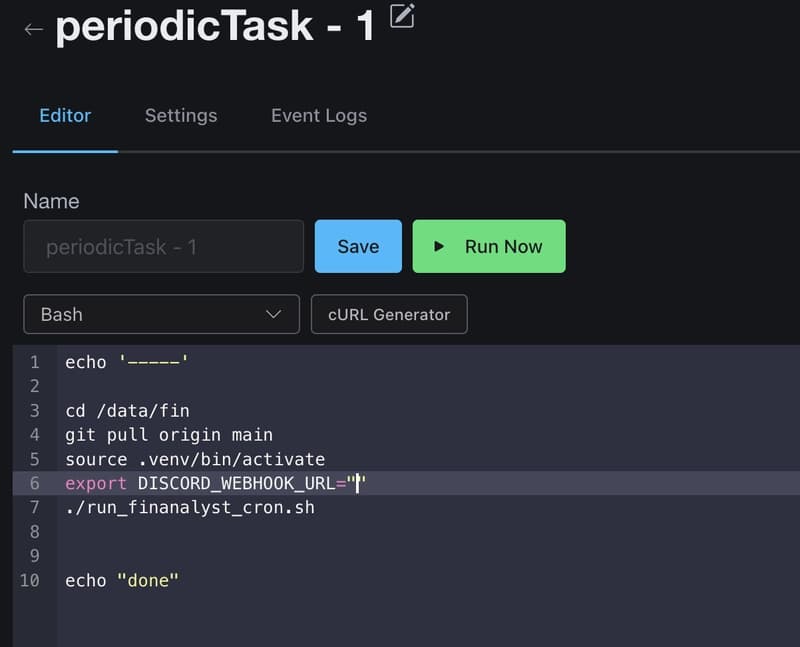

How I Use GitHub Copilot + RapidForge to Generate Daily Stock Ideas

Dev.to

Big Tech firms are accelerating AI investments and integration, while regulators and companies focus on safety and responsible adoption.

Dev.to

Anthropic CVP Run 3 — Does Claude's Safety Stack Scale Down to Haiku 4.5?

Dev.to