Hybrid Latents -- Geometry-Appearance-Aware Surfel Splatting

arXiv cs.CV / 4/17/2026


Key Points

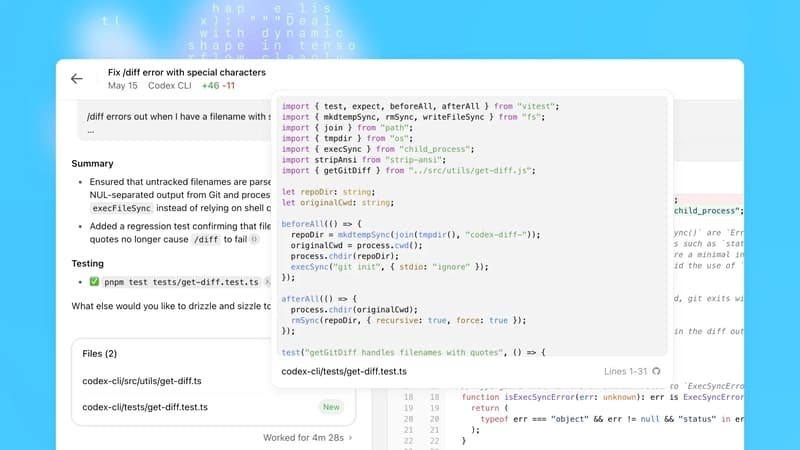

- The paper presents a hybrid radiance representation that combines Gaussian splatting with a hash grid and adds per-Gaussian latent features, separating geometry from appearance more effectively than prior NeRF-style methods.

- By explicitly biasing the optimizer toward low- vs. high-frequency components and using hard opacity falloffs, the method reduces the chance that high-frequency textures will mask or compensate for geometry errors.

- It improves efficiency by pruning redundant Gaussians probabilistically and applying a sparsity-inducing BCE-based opacity loss to keep only a minimal set of primitives.

- Experiments on synthetic and real-world datasets show better reconstruction fidelity than state-of-the-art Gaussian-based novel-view synthesis, while using about an order of magnitude fewer Gaussians.

- Overall, the work aims to make 2D Gaussian scene reconstruction from multi-view images more accurate and computationally efficient through frequency-aware latent modeling and aggressive model compaction.
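
To make the opacity loss and pruning ideas in the points above more concrete, here is a minimal sketch in plain Python. It is not the paper's implementation. It assumes the BCE term is the binary entropy of each opacity (BCE of the value against itself), and that pruning keeps each primitive with probability equal to its opacity. The paper may define both differently. The names `bce_opacity_loss` and `probabilistic_prune` are hypothetical.

```python
import math
import random


def bce_opacity_loss(opacities, eps=1e-6):
    """Mean binary entropy of the opacities (assumed form of the BCE term).

    The term is near zero at 0 or 1 and largest at 0.5, so minimizing it
    pushes every opacity toward fully transparent or fully opaque.
    """
    total = 0.0
    for o in opacities:
        o = min(max(o, eps), 1.0 - eps)  # clamp so log() stays finite
        total += -(o * math.log(o) + (1.0 - o) * math.log(1.0 - o))
    return total / len(opacities)


def probabilistic_prune(opacities, rng):
    """Return indices of kept primitives.

    Assumed rule: each primitive survives with probability equal to its
    opacity, so nearly transparent ones are almost always dropped.
    """
    return [i for i, o in enumerate(opacities) if rng.random() < o]


# Illustrative values only, not data from the paper.
opacities = [0.02, 0.5, 0.97, 0.0, 1.0]
loss = bce_opacity_loss(opacities)
kept = probabilistic_prune(opacities, random.Random(0))
```

Under these assumptions the two pieces work together: the loss drives opacities toward 0 or 1, after which a stochastic pruning pass removes the near-transparent primitives while rarely touching the opaque ones.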
