Attention Residuals

arXiv cs.CL / 3/17/2026


Key Points

- The paper introduces Attention Residuals (AttnRes), which replace the fixed, unit-weight accumulation of layer outputs in residual connections with softmax attention over the representations of preceding layers. Each layer can therefore aggregate earlier outputs selectively and in an input-dependent way.

- To reduce memory and communication costs in large-scale training, the authors also propose Block AttnRes. It partitions layers into blocks and attends over block-level representations instead of over every individual layer's output.

- Scaling-law experiments indicate that the benefits of AttnRes persist across model sizes. When AttnRes is integrated into a Kimi Linear architecture with 48B total and 3B activated parameters, trained on 1.4T tokens, the authors report improved gradient and activation distributions.

- For deployment, the paper describes cache-based pipeline communication and a two-phase computation strategy, which together make AttnRes a practical drop-in replacement for standard residual connections with minimal overhead.
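
The summary describes both variants only in words. The NumPy sketch below illustrates the contrast between unit-weight residual accumulation, full depth attention, and block-level depth attention. It rests on several assumptions that the summary does not state. Each layer owns a learned pseudo-query vector that is scored against RMS-normalized earlier outputs. The softmax runs over depth separately for each token. A block's representation is the plain sum of its layer outputs. All function names (`depth_attention`, `block_sources`, `attnres_forward`) are hypothetical, and this is not the paper's implementation.

```python
import numpy as np


def rms_norm(x, eps=1e-6):
    # Scale each token vector to unit root-mean-square along the feature axis.
    return x / np.sqrt(np.mean(x * x, axis=-1, keepdims=True) + eps)


def depth_attention(sources, query):
    """Softmax-weighted mix of earlier representations, computed per token.

    sources: (n_sources, tokens, d) stack of earlier representations.
    query:   (d,) learned pseudo-query for the current layer (assumption).
    """
    scores = rms_norm(sources) @ query                   # (n_sources, tokens)
    scores = scores - scores.max(axis=0, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=0, keepdims=True)        # softmax over depth
    return np.einsum("st,std->td", weights, sources)     # (tokens, d)


def block_sources(outputs, block_size):
    # Hypothetical block summary: the embedding stays its own source, and
    # layer outputs are summed (plain residual accumulation) inside each
    # block of `block_size` layers; an unfinished block gives a partial sum.
    emb, layer_outs = outputs[0], outputs[1:]
    sums = [sum(layer_outs[i:i + block_size])
            for i in range(0, len(layer_outs), block_size)]
    return [emb] + sums


def attnres_forward(x, layers, queries, block_size=None):
    """x: (tokens, d) embeddings; layers: callables (tokens, d) -> (tokens, d);
    queries: len(layers) + 1 vectors of shape (d,), the last one for the
    final readout. block_size=None attends over every earlier output
    (full AttnRes); an integer attends over block summaries (Block AttnRes)."""
    outputs = [x]
    for f, q in zip(layers, queries[:-1]):
        srcs = outputs if block_size is None else block_sources(outputs, block_size)
        outputs.append(f(depth_attention(np.stack(srcs), q)))
    srcs = outputs if block_size is None else block_sources(outputs, block_size)
    return depth_attention(np.stack(srcs), queries[-1])


def residual_forward(x, layers):
    # Baseline residual stream: every layer output is added with weight 1.
    h = x
    for f in layers:
        h = h + f(h)
    return h


# Usage with toy layers (random tanh projections, purely illustrative).
rng = np.random.default_rng(0)
tokens, d, n_layers = 4, 16, 6
mats = [rng.normal(scale=d ** -0.5, size=(d, d)) for _ in range(n_layers)]
layers = [lambda h, W=W: np.tanh(h @ W) for W in mats]
queries = [rng.normal(size=d) for _ in range(n_layers + 1)]
x = rng.normal(size=(tokens, d))

full = attnres_forward(x, layers, queries)
blocked = attnres_forward(x, layers, queries, block_size=2)
print(full.shape, blocked.shape)  # (4, 16) (4, 16)
```

In this sketch, `block_size=1` reduces the block variant to full AttnRes. Larger blocks cut the number of stacked sources from one per layer to roughly one per block, which is the kind of memory saving that motivates Block AttnRes.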

Related Articles

MCP Is Quietly Replacing APIs — And Most Developers Haven't Noticed Yet

Dev.to

I Built a Self-Healing AI Trading Bot That Learns From Every Failure

Dev.to

Stop Guessing Your API Costs: Track LLM Tokens in Real Time

Dev.to

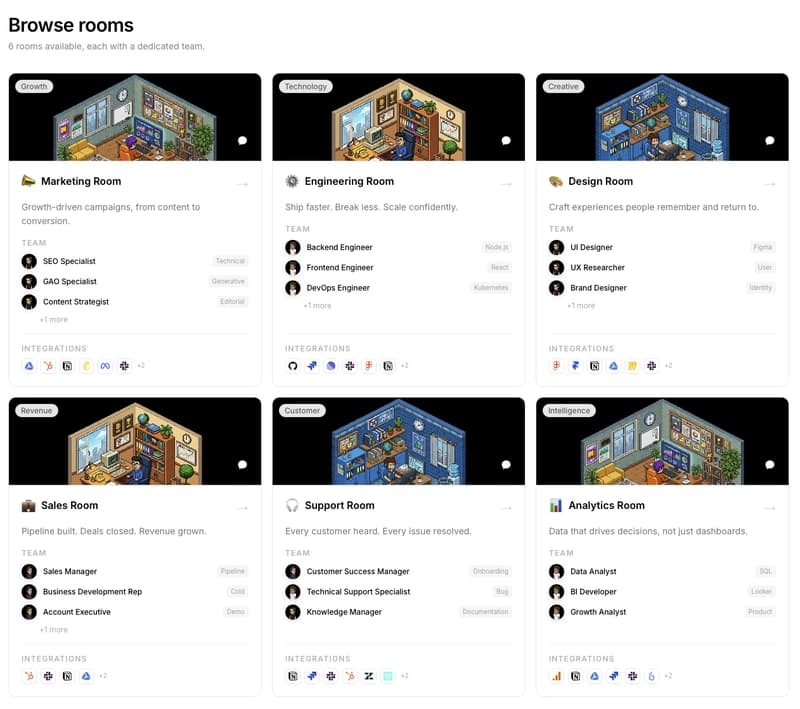

We are building PixelRooms! The marketplace of AI teams for thepixeloffice.ai

Dev.to

Every real estate agent tool worth your time in 2026, ranked and rated

Dev.to