AI Trust OS -- A Continuous Governance Framework for Autonomous AI Observability and Zero-Trust Compliance in Enterprise Environments

arXiv cs.AI / 4/7/2026

💬 Opinion | Developer Stack & Infrastructure | Ideas & Deep Analysis

Key Points

- The paper argues that enterprise governance of LLMs and multi-agent workflows is failing because organizations cannot govern systems they cannot continuously observe, a gap made worse by compliance approaches designed for deterministic web apps.

- It proposes “AI Trust OS,” a telemetry-driven, always-on governance architecture that continuously discovers AI systems, collects control assertions via automated probes, and synthesizes trust artifacts.

- The framework is built on four principles: proactive discovery, telemetry evidence rather than manual attestation, continuous posture rather than point-in-time audits, and architecture-backed proof rather than policy documents.

- It uses a zero-trust telemetry boundary with ephemeral read-only probes to validate structural metadata while avoiding ingress of source code or payload-level PII.

- The paper describes an “AI Observability Extractor Agent” that scans LangSmith and Datadog LLM telemetry and registers previously undocumented AI systems. It is presented as a way to ground governance maturity evidence in empirical observability signals mapped to ISO 42001, the EU AI Act, SOC 2, GDPR, and HIPAA.
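To make the discovery and telemetry-boundary ideas above more concrete, here is a minimal Python sketch. It is not the paper's implementation: the record shape, the `ALLOWED_KEYS` allowlist, and the `Registry`, `strip_to_metadata`, and `run_probe` names are all hypothetical, and the telemetry is a plain in-memory list rather than a call to any real LangSmith or Datadog API. It only illustrates the pattern of keeping structural metadata, dropping payload fields at the boundary, and registering systems that were not previously documented.

```python
from dataclasses import dataclass, field

# Hypothetical allowlist of structural metadata keys. Anything not listed
# (prompts, completions, user identifiers) is dropped at the boundary.
ALLOWED_KEYS = {"system_id", "model", "provider", "source"}


@dataclass
class Registry:
    """Hypothetical inventory of known AI systems, keyed by system_id."""
    systems: dict = field(default_factory=dict)

    def register(self, meta: dict) -> bool:
        """Store the system if unseen; return True when it is newly discovered."""
        sid = meta["system_id"]
        if sid in self.systems:
            return False
        self.systems[sid] = meta
        return True


def strip_to_metadata(record: dict) -> dict:
    """Keep only allowlisted structural fields from a telemetry record."""
    return {k: v for k, v in record.items() if k in ALLOWED_KEYS}


def run_probe(records: list, registry: Registry) -> list:
    """Read-only pass over telemetry; returns ids of newly registered systems."""
    discovered = []
    for record in records:
        meta = strip_to_metadata(record)
        if "system_id" not in meta:
            continue  # cannot attribute the record to a system, so skip it
        if registry.register(meta):
            discovered.append(meta["system_id"])
    return discovered


if __name__ == "__main__":
    # Assumed starting inventory: one documented system.
    registry = Registry(systems={"support-bot": {"system_id": "support-bot"}})
    # Assumed telemetry records; the "prompt" field stands in for payload data.
    telemetry = [
        {"system_id": "support-bot", "model": "model-a", "prompt": "hello"},
        {"system_id": "invoice-agent", "model": "model-b", "prompt": "jane@example.com"},
    ]
    print(run_probe(telemetry, registry))
    print(registry.systems["invoice-agent"])
```

In this sketch the allowlist is what enforces the boundary: a field that is not explicitly named never enters the registry, so new payload fields in upstream telemetry are excluded by default rather than by later cleanup. Mapping the registered metadata to specific framework controls is left out here, since the summary does not say how the paper does it.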

Related Articles

[R] The ECIH: Model Modeling Agentic Identity as an Emergent Relational State [R]

Reddit r/MachineLearning

Melhores Alternativas ao NightCafe em 2026: Acesso API, Recursos Empresariais, Menores Custos

Dev.to

Artificial Intelligence and Life in 2030: The One Hundred Year Study onArtificial Intelligence

Dev.to

Stop waiting for Java to rebuild! AI IDEs + Zero-Latency Hot Reload = Magic

Dev.to

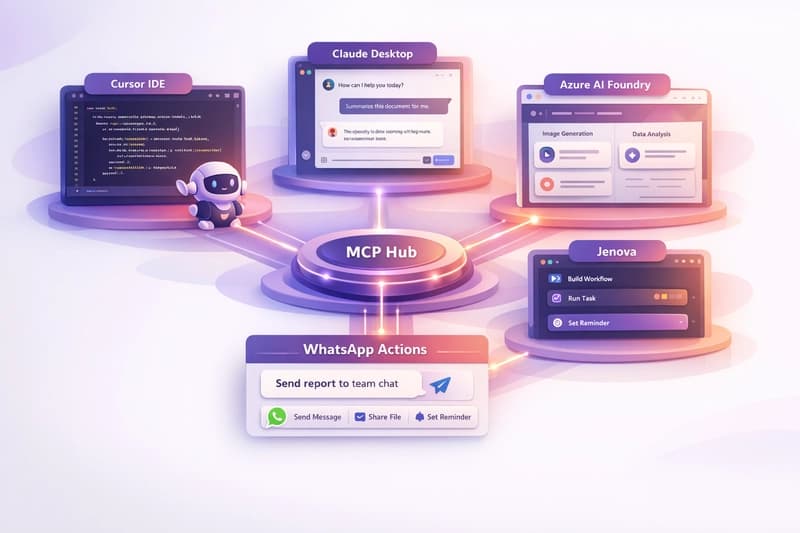

Whapi-mcp: 165 WhatsApp Tools for AI Agents (2026)

Dev.to