TiAb Review Plugin: A Browser-Based Tool for AI-Assisted Title and Abstract Screening

arXiv cs.AI / 4/13/2026


Key Points

- TiAb Review Plugin is an open-source, browser-based (Chrome) tool that enables no-code, serverless AI-assisted screening of titles and abstracts for systematic reviews.

- The plugin uses Google Sheets as a shared database, so multiple reviewers can collaborate without a dedicated server. Each user supplies their own Gemini API key, which is stored locally in encrypted form.

- It offers three screening modes: manual review, LLM batch screening, and ML active learning. The active learning mode is an in-browser TypeScript re-implementation of ASReview’s default approach (TF-IDF features with a Naive Bayes classifier).

- The ML component reproduced ASReview’s top-100 rankings exactly on all six datasets tested. The LLM configuration (Gemini 3.0 Flash with a low thinking budget and TopP=0.95) achieved 94–100% recall at 2–15% precision, so it misses few relevant records but passes many irrelevant ones on to human review; the summary also reports strong workload savings at 95% recall.

- The study concludes the extension is functional and practical for integrating both LLM screening and ML active learning into a lightweight, collaborative workflow.
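
To make the active learning mode in the key points more concrete, here is a minimal TypeScript sketch of one ranking step with TF-IDF features and a multinomial Naive Bayes classifier. It is not the plugin's code and is not claimed to match ASReview's rankings. Every name in it (`Doc`, `Labeled`, `rankByRelevance`, and so on) is hypothetical, and the tokenizer, the smoothed IDF formula, the L2 normalization, and the Laplace smoothing value `alpha = 1` are assumptions chosen for illustration.

```typescript
// Sketch only: TF-IDF + multinomial Naive Bayes used to rank unscreened
// records by predicted relevance. All names and settings are hypothetical.

type Doc = { id: string; text: string };
type Labeled = Doc & { relevant: boolean };
type ClassStats = { logPrior: number; termSum: Map<string, number>; total: number };

// Assumed tokenizer: lowercase alphanumeric runs.
function tokenize(text: string): string[] {
  return text.toLowerCase().match(/[a-z0-9]+/g) ?? [];
}

// Smoothed inverse document frequency over all records (labeled + unlabeled).
function buildIdf(docs: Doc[]): Map<string, number> {
  const df = new Map<string, number>();
  docs.forEach((d) => {
    new Set(tokenize(d.text)).forEach((t) => df.set(t, (df.get(t) ?? 0) + 1));
  });
  const idf = new Map<string, number>();
  df.forEach((n, t) => idf.set(t, Math.log((1 + docs.length) / (1 + n)) + 1));
  return idf;
}

// Sparse, L2-normalized TF-IDF vector (term -> weight).
function tfidf(text: string, idf: Map<string, number>): Map<string, number> {
  const raw = new Map<string, number>();
  tokenize(text).forEach((t) => {
    const w = idf.get(t);
    if (w !== undefined) raw.set(t, (raw.get(t) ?? 0) + w);
  });
  let sumSquares = 0;
  raw.forEach((v) => (sumSquares += v * v));
  const norm = Math.sqrt(sumSquares) || 1;
  const vec = new Map<string, number>();
  raw.forEach((v, t) => vec.set(t, v / norm));
  return vec;
}

// Train on the labeled records, then sort the pool by the log-odds of
// "relevant" versus "irrelevant", highest first.
function rankByRelevance(
  labeled: Labeled[],
  pool: Doc[],
  alpha = 1
): { id: string; score: number }[] {
  const idf = buildIdf([...labeled, ...pool]);
  const vocabSize = idf.size;

  const fit = (label: boolean): ClassStats => {
    const docs = labeled.filter((d) => d.relevant === label);
    const termSum = new Map<string, number>();
    let total = 0;
    docs.forEach((d) =>
      tfidf(d.text, idf).forEach((w, t) => {
        termSum.set(t, (termSum.get(t) ?? 0) + w);
        total += w;
      })
    );
    // The prior is smoothed too, so an empty class does not produce log(0).
    const logPrior = Math.log((docs.length + alpha) / (labeled.length + 2 * alpha));
    return { logPrior, termSum, total };
  };

  const logLikelihood = (c: ClassStats, vec: Map<string, number>): number => {
    let s = c.logPrior;
    vec.forEach((w, t) => {
      const p = ((c.termSum.get(t) ?? 0) + alpha) / (c.total + alpha * vocabSize);
      s += w * Math.log(p);
    });
    return s;
  };

  const pos = fit(true);
  const neg = fit(false);
  return pool
    .map((d) => {
      const vec = tfidf(d.text, idf);
      return { id: d.id, score: logLikelihood(pos, vec) - logLikelihood(neg, vec) };
    })
    .sort((a, b) => b.score - a.score);
}

// Usage: two screened records, two unscreened ones.
const ranked = rankByRelevance(
  [
    { id: "l1", text: "Randomized trial of statin therapy", relevant: true },
    { id: "l2", text: "Deep learning for image segmentation", relevant: false },
  ],
  [
    { id: "a", text: "Statin therapy: a randomized controlled trial" },
    { id: "b", text: "Image segmentation with convolutional networks" },
  ]
);
console.log(ranked.map((r) => r.id));
```

In an active learning loop, the reviewer would label the top-ranked record, move it from the pool to the labeled set, and call the ranking function again. The cheap retraining cost of Naive Bayes is what makes repeating this step inside a browser plausible.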

Related Articles

Black Hat USA

AI Business

Black Hat Asia

AI Business

I built the missing piece of the MCP ecosystem

Dev.to

Best AI Detectors in 2026: I Tested 30+ Popular AI Detectors to Find the Most Accurate Ones

Dev.to

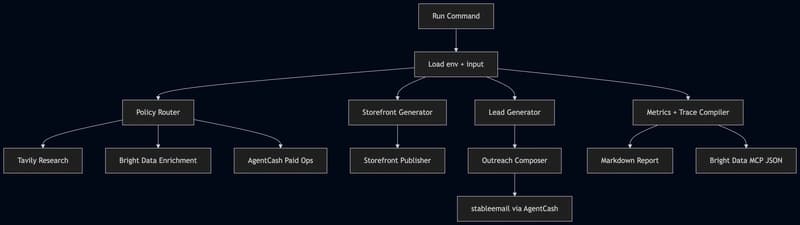

Building an Agentic Commerce Router with TypeScript, AgentCash, Bright Data, Tavily, OpenAI, and Featherless

Dev.to