![[New Model] micro-kiki-v3 — Qwen3.5-35B-A3B + 35 domain LoRAs + router + negotiator + Aeon memory for embedded engineering](https://external-preview.redd.it/9hbcv2sf_EOyQ633tWaZKS7osZsLnQc5moOVkzar05Q.png?width=640&crop=smart&auto=webp&s=796f7c70a8a6f32713f1d01e505ba2ef2fcc36c1) | Released today on HF. Built by L'Électron Rare (https://github.com/L-electron-Rare) — our local-first AI platform FineFab. The training toolkit went public the day before: https://github.com/L-electron-Rare/KIKI-Mac\_tunner (MLX for Mac Studio, distills Claude Opus into Mistral Large 123B). Full pipeline is open, not just the artifact. **Architecture** - Domain router → top-4 selection among 35 LoRA stacks - Base: Qwen3.5-35B-A3B (MoE, 256 experts, 3B active/token) - LoRA rank 16 on q/k/v/o, top-2 routing per stack - Null-space projection between stacks to mitigate catastrophic forgetting - Negotiator (CAMP + Catfish) arbitrates conflicting stack outputs - Anti-bias layer (KnowBias + RBD) before output - Aeon memory (Atlas graph + Trace log) for cross-session persistence **Specs** - GGUF Q4_K_M, llama.cpp / Ollama / LM Studio - Context 262K tokens - Apache 2.0 - French + English interleaved **35 domains** chat-fr, reasoning, python, typescript, cpp, rust, html-css, shell, sql, yaml-json, lua-upy, docker, devops, llm-orch, llm-ops, ml-training, kicad-dsl, kicad-pcb, spice, electronics, components, power, emc, dsp, embedded, stm32, iot, platformio, freecad, web-frontend, web-backend, music-audio, math, security **Dataset** — also released, Apache 2.0 489K instruction-following examples: - 50,116 real Claude CLI sessions from our 5-node P2P mesh during embedded consulting work (GrosMac M5, Tower 28t, CILS i7, KXKM-AI RTX 4090, VM) - 2,529 Codex/Copilot sessions - 364,045 from 19 filtered open HF datasets (CodeFeedback, French-Alpaca, Electronics StackExchange, stm32-hal-dataset, JITX components…) - Opus teacher distillation for chat-fr + reasoning - 32 original curated seed sets **Honest caveats** - No external reproducible benchmark yet. Internal held-out eval only. v4 roadmap. - Aeon memory needs external backends (Qdrant, Neo4j) for production. - Max 4 concurrent stacks; combos matter, some well-exercised, others less. - Solo/small team project, two weeks, consumer hardware. Not a lab release. Model: https://huggingface.co/clemsail/micro-kiki-v3 Dataset: https://huggingface.co/datasets/clemsail/micro-kiki-v3-dataset Training toolkit (MLX Mac Studio): https://github.com/L-electron-Rare/KIKI-Mac_tunner Ecosystem: https://github.com/L-electron-Rare Feedback, forks, negative benchmarks all welcome. [link] [comments] |

[New Model] micro-kiki-v3 — Qwen3.5-35B-A3B + 35 domain LoRAs + router + negotiator + Aeon memory for embedded engineering

Reddit r/LocalLLaMA / 4/18/2026

📰 NewsDeveloper Stack & InfrastructureTools & Practical UsageIndustry & Market MovesModels & Research

Key Points

- micro-kiki-v3 is a newly released local-first model on Hugging Face that combines Qwen3.5-35B-A3B with 35 domain-specific LoRAs plus a router, negotiator, and Aeon memory aimed at embedded engineering use cases.

- The architecture uses a domain router to select the top-4 LoRA stacks, applies rank-16 LoRA on q/k/v/o with top-2 routing per stack, and includes null-space projection plus a negotiator module (CAMP + Catfish) to reduce conflicts and catastrophic forgetting.

- An anti-bias layer (KnowBias + RBD) and Aeon memory (Atlas graph + trace logs) are designed to improve output safety and retain knowledge across sessions, though production Aeon use requires external backends like Qdrant or Neo4j.

- The model is distributed as GGUF Q4_K_M for llama.cpp/Ollama/LM Studio with a 262K context window, is Apache 2.0 licensed, and supports interleaved French and English.

- A dataset (also Apache 2.0) accompanies the release, featuring 489K instruction-following examples built from Claude CLI sessions during embedded consulting, Codex/Copilot traces, and multiple filtered open HF datasets, but the release notes that no external reproducible benchmarks are available yet.

💡 Insights using this article

This article is featured in our daily AI news digest — key takeaways and action items at a glance.

Related Articles

Black Hat USA

AI Business

Black Hat Asia

AI Business

The myth of Claude Mythos crumbles as small open models hunt the same cybersecurity bugs Anthropic showcased

THE DECODER

Claude Opus 4.7 vs 4.6: What Actually Changed and What Breaks on Migration

Dev.to

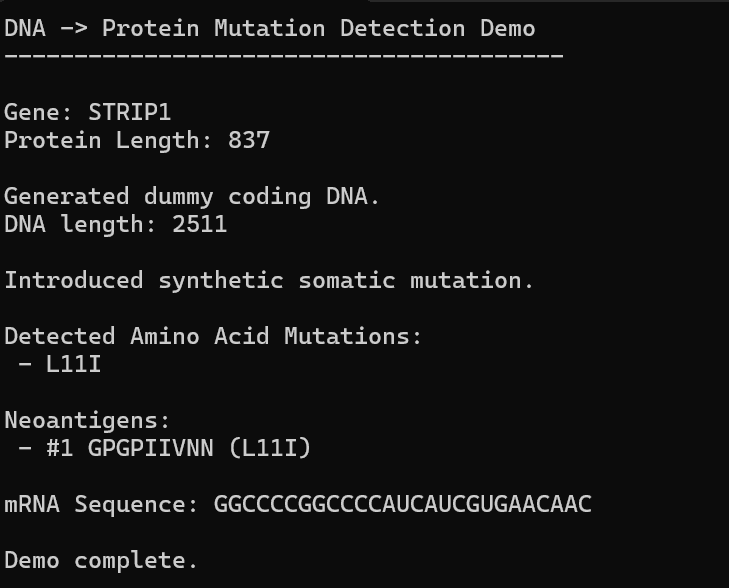

AI, Hope, and Healing: Can We Build Our Own Personalized mRNA Cancer Vaccine Pipeline?

Dev.to