Representative Spectral Correlation Network for Multi-source Remote Sensing Image Classification

arXiv cs.CV / 5/1/2026


Key Points

- The paper introduces RSCNet, a multi-source remote sensing image classification framework that fuses hyperspectral imagery (HSI) with SAR or LiDAR data while addressing spectral redundancy and cross-source heterogeneity.

- It proposes a Key Band Selection Module (KBSM) that adaptively selects task-relevant HSI bands using guidance from other sources, reducing redundancy and avoiding the information loss common in PCA-style spectral reduction.

- It adds a Cross-source Adaptive Fusion Module (CAFM) that uses cross-source attention weighting plus local-global contextual refinement to improve feature interaction between modalities.

- Experiments on three public benchmarks show RSCNet outperforming existing state-of-the-art methods at substantially lower computational complexity, and the authors have released their code publicly.
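
To make the two ideas in the key points concrete, here is a minimal NumPy sketch. It is not the paper's implementation: KBSM and CAFM are learned modules, whereas this sketch assumes a fixed stand-in for each. Band selection is approximated by ranking HSI bands by absolute Pearson correlation with a single-channel auxiliary image, and fusion by a per-pixel softmax weighting of two feature maps. The function names `select_key_bands` and `weighted_fuse`, the array shapes, and the scoring rule are all hypothetical choices made for illustration.

```python
import numpy as np


def select_key_bands(hsi, aux, k):
    """Pick k HSI bands most correlated with an auxiliary source.

    hsi: (H, W, B) hyperspectral cube; aux: (H, W) SAR/LiDAR channel.
    Assumption: |Pearson correlation| stands in for a learned band score.
    Returns (sorted band indices, per-band scores).
    """
    bands = hsi.reshape(-1, hsi.shape[-1]).astype(np.float64)  # (N, B)
    guide = aux.reshape(-1).astype(np.float64)                 # (N,)
    bands_c = bands - bands.mean(axis=0)
    guide_c = guide - guide.mean()
    denom = np.linalg.norm(bands_c, axis=0) * np.linalg.norm(guide_c) + 1e-12
    scores = np.abs(bands_c.T @ guide_c) / denom               # (B,)
    top = np.argsort(scores)[::-1][:k]
    return np.sort(top), scores


def weighted_fuse(hsi_feat, aux_feat):
    """Fuse two (N, D) feature maps with per-pixel softmax weights.

    Assumption: the weight logits are simply each source's mean activation,
    a placeholder for learned cross-source attention.
    Returns (fused features, (N, 2) weights).
    """
    logits = np.stack([hsi_feat.mean(axis=1), aux_feat.mean(axis=1)], axis=1)
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    w = np.exp(logits)
    w = w / w.sum(axis=1, keepdims=True)
    fused = w[:, :1] * hsi_feat + w[:, 1:] * aux_feat
    return fused, w


# Toy data: bands 3 and 7 are constructed to track the auxiliary channel.
rng = np.random.default_rng(0)
aux = rng.normal(size=(16, 16))
hsi = rng.normal(size=(16, 16, 10))
hsi[..., 3] = aux + 0.01 * rng.normal(size=(16, 16))
hsi[..., 7] = -aux
idx, scores = select_key_bands(hsi, aux, k=2)
fused, w = weighted_fuse(rng.normal(size=(256, 8)), rng.normal(size=(256, 8)))
```

Unlike PCA, which mixes every band into each component, index-based selection keeps the chosen bands as they are, which is the property the summary attributes to KBSM. The guidance signal and the fusion weights in RSCNet are trained end to end, so the authors' released code is the reference for the actual design.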

Related Articles

The foundational UK sovereign-AI patents are filed. The collaboration door is open.

Dev.to

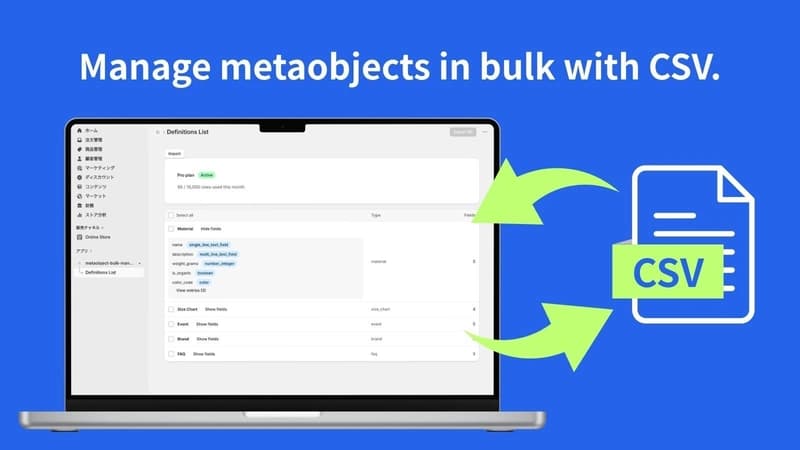

Building a Shopify app with Claude Code — spec-driven development and pricing design

Dev.to

From Chaos to Clarity: AI-Powered Client Portals for Designers

Dev.to

Stuck in the Mud (and Loops!) - Kiwi-chan Devlog #7

Dev.to

Addition is All You Need for Energy-efficient Language Models

Dev.to