| I've been running workloads that I typically only trust Opus and Codex with, and I can confirm 3.6 is really capable. Of course, it's not at the level of those models, but it's definitely crossing the barrier of usefulness, plus the speed is amazing running this on an M5 Max 128GB 8bit 3K PP, 100 TG on oMLX + Pi.dev Just ensure you have `preserve_thinking` turned on. Check out details here. [link] [comments] |

qwen3.6 performance jump is real, just make sure you have it properly configured

Reddit r/LocalLLaMA / 4/18/2026

💬 OpinionSignals & Early TrendsTools & Practical UsageModels & Research

Key Points

- A Reddit user reports that Qwen 3.6 shows a noticeable real-world performance jump on workloads they usually only trust Opus and Codex with.

- They emphasize that while Qwen 3.6 is not at the exact level of those models, it has crossed the threshold of practical usefulness.

- The user highlights impressive inference speed when running on an M5 Max 128GB with an 8-bit 3K setup and specifically mentions using oMLX and Pi.dev for performance.

- They attribute part of the improvement to correct configuration, recommending that `preserve_thinking` be turned on.

Related Articles

Black Hat USA

AI Business

Black Hat Asia

AI Business

The myth of Claude Mythos crumbles as small open models hunt the same cybersecurity bugs Anthropic showcased

THE DECODER

Claude Opus 4.7 vs 4.6: What Actually Changed and What Breaks on Migration

Dev.to

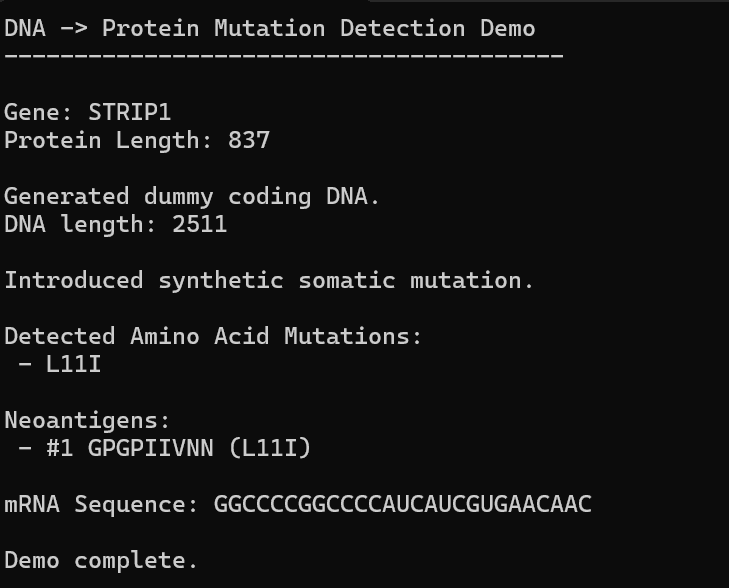

AI, Hope, and Healing: Can We Build Our Own Personalized mRNA Cancer Vaccine Pipeline?

Dev.to