AIMER: Calibration-Free Task-Agnostic MoE Pruning

arXiv cs.LG / 3/20/2026


Key Points

- AIMER introduces a calibration-free criterion for ranking experts in Mixture-of-Experts (MoE) language models, so experts can be pruned without a calibration dataset.

- It defines the AIMER score (Absolute mean over root mean square Importance for Expert Ranking), which separates expert scores clearly within each layer and stratifies experts into distinct groups.

- Across MoE models from 7B to 30B parameters, at pruning ratios of 25% and 50%, it performs competitively with or better than calibration-based baselines on 16 benchmarks.

- Scoring the experts takes only 0.22–1.27 seconds, and the pruned models reduce memory and serving overhead, making deployment more efficient.
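A minimal sketch of how such a score could be computed, assuming (as the name suggests) that it is the mean absolute value of an expert's weights divided by their root mean square. The summary does not say which weight matrices are scored or whether low- or high-scoring experts are removed, so here each expert is simply a flat list of floats, and the hypothetical helper `experts_to_keep` assumes the highest-scoring experts in a layer are retained at a given pruning ratio.

```python
import math
from typing import Dict, List, Sequence


def aimer_score(weights: Sequence[float]) -> float:
    """Assumed AIMER form: mean(|w|) / sqrt(mean(w^2)) over one expert's weights."""
    n = len(weights)
    if n == 0:
        raise ValueError("expert has no weights")
    mean_abs = sum(abs(w) for w in weights) / n
    rms = math.sqrt(sum(w * w for w in weights) / n)
    if rms == 0.0:
        return 0.0  # all-zero expert: no signal, treat as least important
    return mean_abs / rms


def rank_experts(layer: Dict[int, Sequence[float]]) -> List[int]:
    """Return expert ids within a single layer, sorted from highest to lowest score."""
    scores = {eid: aimer_score(w) for eid, w in layer.items()}
    return sorted(scores, key=lambda eid: scores[eid], reverse=True)


def experts_to_keep(layer: Dict[int, Sequence[float]], prune_ratio: float) -> List[int]:
    """Hypothetical helper: drop the lowest-ranked fraction of experts (assumed direction)."""
    ranked = rank_experts(layer)
    n_prune = int(len(ranked) * prune_ratio)  # floor, so at most prune_ratio is removed
    return ranked[: len(ranked) - n_prune]


# Toy layer with four experts; weights are made up purely for illustration.
example_layer = {
    0: [1.0, -1.0, 1.0, -1.0],   # score 1.0
    1: [3.0, 0.0, 0.0, 0.0],     # score 0.5
    2: [2.0, 2.0, 0.0, 0.0],     # score ~0.707
    3: [0.5, -0.5, 0.25, 0.0],   # score ~0.833
}

print(rank_experts(example_layer))            # [0, 3, 2, 1]
print(experts_to_keep(example_layer, 0.25))   # [0, 3, 2]
print(experts_to_keep(example_layer, 0.5))    # [0, 3]
```

Because the score needs only the weights themselves, no forward passes over data are involved. This ratio always lies in (0, 1] for a nonzero expert and is unchanged when that expert's weights are multiplied by a constant, so in this sketch experts are compared by the shape of their weight distribution rather than its overall magnitude.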

Related Articles

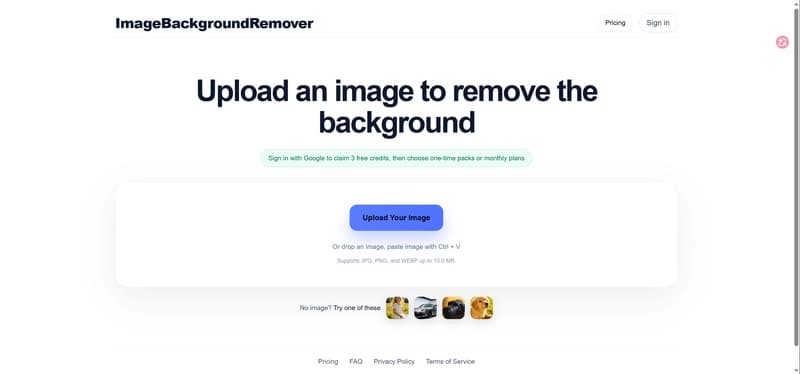

I built an online background remover and learned a lot from launching it

Dev.to

ShieldCortex: What We Learned Protecting AI Agent Memory

Dev.to

Why Your SaaS Needs AI Chat in 2026 (Add It in 40 Lines)

Dev.to

[D] Matryoshka Representation Learning

Reddit r/MachineLearning

Advancing Open Source AI, NVIDIA Donates Dynamic Resource Allocation Driver for GPUs to Kubernetes Community

Nvidia AI Blog