DocPrune: Efficient Document Question Answering via Background, Question, and Comprehension-aware Token Pruning

arXiv cs.CV / 4/27/2026


Key Points

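- The paper proposes DocPrune, a progressive document token pruning method that requires no model training, as a way to make question answering over long documents more efficient.

- It exploits the structural sparsity specific to document images (large background regions with evidence scattered sparsely) to remove unnecessary tokens, such as background tokens and tokens irrelevant to the question.

- It automatically selects the layer at which pruning begins according to the model's level of comprehension, with the aim of limiting performance degradation while achieving an optimal reduction.

- In experiments on M3DocRAG, the authors report throughput improvements of 3.0x for the encoder and 3.3x for the decoder, together with a +1.0 improvement in F1 score, achieving both accuracy and efficiency without additional training.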
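The three pruning ideas in the points above can be illustrated with a minimal sketch. This is not the paper's implementation: the summary does not say how tokens are scored or how comprehension is measured, so everything here is an assumption made for illustration. The function names, the use of per-patch pixel standard deviation as a background proxy, cosine similarity as a question-relevance score, the per-layer confidence list, and all thresholds are hypothetical.

```python
import numpy as np


def prune_background(patches, std_threshold=0.02):
    """Return indices of patches that are probably not blank background.

    Assumption: a near-constant patch (low pixel standard deviation)
    is background. patches has shape (num_tokens, pixels_per_patch).
    """
    return np.flatnonzero(patches.std(axis=1) > std_threshold)


def prune_by_question(token_emb, question_emb, keep_idx, keep_ratio=0.5):
    """Among surviving tokens, keep the top fraction by cosine similarity
    to the question embedding (an assumed relevance score)."""
    emb = token_emb[keep_idx]
    norms = np.linalg.norm(emb, axis=1) * np.linalg.norm(question_emb)
    sims = emb @ question_emb / (norms + 1e-8)
    k = max(1, int(np.ceil(keep_ratio * len(keep_idx))))
    top = np.argsort(-sims)[:k]
    return np.sort(keep_idx[top])


def choose_start_layer(layer_confidence, threshold=0.8):
    """Return the first layer whose confidence proxy reaches the threshold.

    Assumption: some per-layer scalar stands in for "comprehension".
    Falls back to the last layer if no layer qualifies.
    """
    for layer, conf in enumerate(layer_confidence):
        if conf >= threshold:
            return layer
    return len(layer_confidence) - 1


# Toy usage: 4 patch tokens, two of them blank.
patches = np.array([
    [1.0, 1.0, 1.0, 1.0],   # blank
    [0.0, 1.0, 0.0, 1.0],   # textured
    [1.0, 1.0, 1.0, 1.0],   # blank
    [0.2, 0.9, 0.1, 1.0],   # textured
])
token_emb = np.array([[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0]])
question_emb = np.array([0.0, 1.0])

non_background = prune_background(patches)
kept = prune_by_question(token_emb, question_emb, non_background)
start = choose_start_layer([0.3, 0.6, 0.85, 0.9])
print(non_background, kept, start)
```

Applying the filters in sequence mirrors the progressive framing in the summary: cheap background removal first, then question-aware selection on what remains, with the start layer chosen separately.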

Related Articles

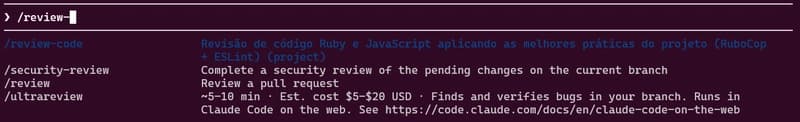

how to use skills from Claude Code A.K.A Claudinho.

Dev.to

Behind the Scenes of a Self-Evolving AI: The Architecture of Tian AI

Dev.to

Meet Tian AI: Your Completely Offline AI Assistant for Android

Dev.to

UK to develop AI hardware plan

Tech.eu

Copilot Cowork | The Control Plane for Long-Running AI Work | A Rahsi Framework™

Dev.to