Abstract: Artificial intelligence (AI) is reshaping society, raising questions about trust, risks, and the asymmetries between public and academic perspectives. We examine how the German public (N = 1,110), comprising individuals who interact with or are affected by AI, and academic AI experts (N = 119, mainly from Germany), who contribute to research, educate practitioners, and inform policymaking, construct mental models of AI’s capabilities and impacts across 71 scenarios. These scenarios span diverse domains (including sustainability, healthcare, employment, inequality, art, and warfare) and were evaluated across four dimensions using the psychometric model: likelihood, perceived risk, perceived benefit, and overall value. Across scenarios, academic experts generally anticipated higher probabilities of occurrence, perceived lower risks, and reported greater benefits than the public, while also expressing more positive overall evaluations of AI. Beyond differences in absolute assessments, the two groups exhibited systematically different evaluative patterns: experts’ value judgments were driven primarily by perceived benefits, whereas public evaluations placed more weight on perceived risks, reflecting distinct risk–benefit trade-offs. Visual mappings indicate convergent domains (e.g., medical diagnoses and criminal use) and tension points (e.g., justice and political decision-making) that may warrant targeted communication or policy attention.

While this study does not assess AI systems or design practices directly, the observed divergence in mental models suggests that the research, implementation, and use of AI may inadvertently neglect the risk-related priorities of the public. Such biases in research and implementation may yield “procrustean AI”: systems insufficiently aligned with the needs of the affected public (akin to the Bed of Procrustes). We address the socio-technical challenge of expert-centric governance and advocate for participatory practices.

Full article: https://link.springer.com/article/10.1007/s00146-026-03023-8

Charting the AI Perception Gap: Across 71 scenarios, AI experts (N=119) and the public (N=1,110) have differing views on the risks, benefits, and value of AI. More importantly, AI experts discount the influence of risks more strongly than the public does when forming their value judgments [R]

Reddit r/MachineLearning / 5/6/2026

💬 Opinion · Ideas & Deep Analysis · Models & Research

Key Points

- A study compared German members of the public (N=1,110) and academic AI experts (N=119) across 71 AI-related scenarios to measure how each group perceives likelihood, risk, benefits, and overall value.

- Experts tended to rate the scenarios as more likely to occur and less risky than the public did, and they reported greater benefits and more positive overall evaluations of AI.

- Beyond differences in absolute ratings, the groups used different risk–benefit trade-offs: experts’ overall value judgments were driven more by perceived benefits, while the public weighed perceived risks more heavily.

- The findings highlight specific domains where views converge (e.g., medical diagnosis and criminal use) and where they diverge most (e.g., justice and political decision-making), suggesting a need for targeted communication and policy attention.

- The authors argue that these mismatched mental models may lead to “procrustean AI,” where the research, implementation, and use of AI overlook the public’s risk-related priorities.
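
To make the idea of differing risk–benefit trade-offs concrete, here is a minimal sketch of one common way to compare how strongly risk and benefit ratings predict overall value: standardized regression weights for a two-predictor linear model. The abstract does not spell out the authors' statistical procedure, so this is an illustration and not their method. The function names and all ratings below are made up for the example and are not data from the study.

```python
import math


def pearson(xs, ys):
    """Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def standardized_weights(risk, benefit, value):
    """Standardized weights for the model value ~ risk + benefit.

    Uses the closed-form solution for two predictors, expressed
    through pairwise correlations. Returns (beta_risk, beta_benefit).
    """
    r_vr = pearson(value, risk)
    r_vb = pearson(value, benefit)
    r_rb = pearson(risk, benefit)
    # Zero only if risk and benefit are perfectly collinear.
    denom = 1.0 - r_rb ** 2
    beta_risk = (r_vr - r_vb * r_rb) / denom
    beta_benefit = (r_vb - r_vr * r_rb) / denom
    return beta_risk, beta_benefit


# Hypothetical per-scenario mean ratings (NOT data from the study).
risk = [1, 2, 3, 4, 5, 2, 4, 3]
benefit = [5, 3, 4, 1, 2, 2, 5, 1]

# Two made-up raters: one whose value judgments lean on benefit,
# one whose value judgments lean on risk.
benefit_driven_value = [0.8 * b - 0.2 * r for r, b in zip(risk, benefit)]
risk_driven_value = [0.3 * b - 0.7 * r for r, b in zip(risk, benefit)]

for label, value in [("benefit-driven", benefit_driven_value),
                     ("risk-driven", risk_driven_value)]:
    beta_risk, beta_benefit = standardized_weights(risk, benefit, value)
    print(f"{label}: beta_risk={beta_risk:.2f}, beta_benefit={beta_benefit:.2f}")
```

In this toy setup, a group whose value ratings track benefit shows a larger absolute weight on benefit than on risk, and the reverse holds for a group whose value ratings track risk. Comparing those weights across groups is the kind of contrast the key points describe, under the assumption that a linear model is an adequate summary.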

Related Articles

Transform Your Blurry Photos into HD Masterpieces, Instantly!

Dev.to

6 New Moats for AI Agent Infrastructure — Trust Score, Deployment, SLA, Identity, Compliance-as-Code

Dev.to

There will still be art in software

Dev.to

Google Home’s Gemini AI can handle more complicated requests

The Verge

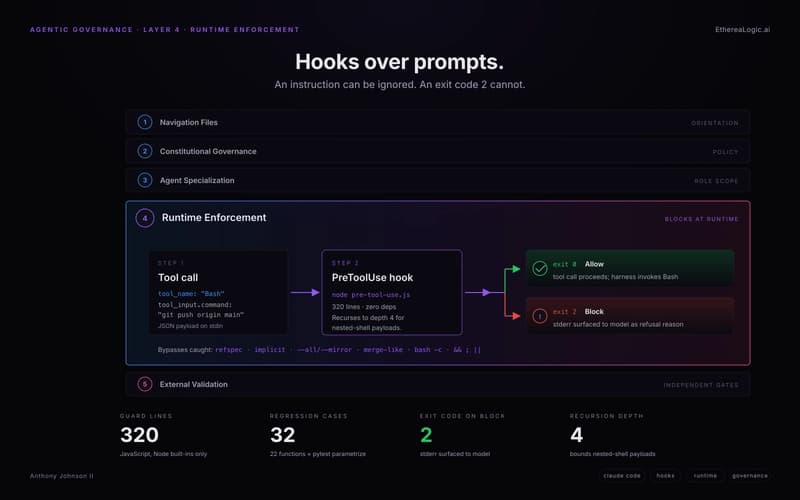

Exit Code 2: How Claude Hooks Turn Agentic Rules Into Runtime Barriers

Dev.to