TurboQuant was teased recently, and tens of billions were wiped off the memory chip market in 48 hours. But anyone in this community who read the paper would have seen the problem with the panic immediately.

TurboQuant compresses the KV cache down to 3 bits per value from the standard 16 using polar coordinate quantization. But the KV cache is inference memory. Training memory (activations, gradients, optimizer states) is a completely different thing and completely untouched. And the majority of HBM demand comes from training, so an inference compression paper doesn't move that number.
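To put rough numbers on what the KV cache actually costs, here is a minimal Python sketch. The model shape (32 layers, 8 KV heads, head dimension 128, 32K context, batch 1) is hypothetical and picked only for illustration, and the 3-bit figure ignores any per-block metadata a real quantizer would store, so treat it as a lower bound on size rather than a measurement.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, batch, bits_per_value):
    """Approximate KV cache size in bytes, ignoring quantization metadata."""
    # Two tensors (keys and values) per layer, one vector per token per KV head.
    values = 2 * layers * kv_heads * head_dim * seq_len * batch
    return values * bits_per_value / 8


# Hypothetical model shape, not taken from the paper or any specific model.
shape = dict(layers=32, kv_heads=8, head_dim=128, seq_len=32_768, batch=1)

fp16 = kv_cache_bytes(**shape, bits_per_value=16)
q3 = kv_cache_bytes(**shape, bits_per_value=3)

print(f"16-bit KV cache: {fp16 / 2**30:.2f} GiB")  # 4.00 GiB
print(f" 3-bit KV cache: {q3 / 2**30:.2f} GiB")    # 0.75 GiB
```

Note that nothing in this calculation touches weights, activations, gradients, or optimizer states. It only scales with context length and batch size at inference time.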

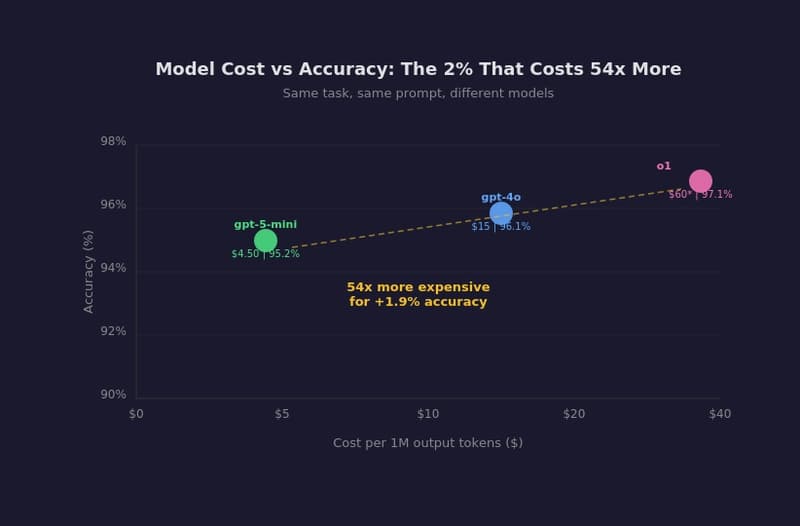

And the commercial inference baseline already runs at 4 to 8 bit precision. The 6x headline is benchmarked against 16 bit full precision. The real marginal gain over what's actually deployed is considerably smaller than that number suggests.
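The baseline point is easy to check with raw bit-width ratios. This is a back-of-the-envelope sketch only: it compares bits per value and nothing else, so it does not reproduce the paper's benchmarked 6x headline, and the helper name `compression_ratio` is just for illustration.

```python
TARGET_BITS = 3  # bits per value cited for TurboQuant in this post


def compression_ratio(baseline_bits, target_bits=TARGET_BITS):
    """Raw size reduction from bit width alone (no metadata overhead)."""
    return baseline_bits / target_bits


# 16-bit is the paper's baseline; 8 and 4 are the deployed range mentioned above.
for baseline in (16, 8, 4):
    print(f"{baseline}-bit baseline -> {compression_ratio(baseline):.2f}x smaller")
# 16-bit baseline -> 5.33x smaller
# 8-bit baseline -> 2.67x smaller
# 4-bit baseline -> 1.33x smaller
```

Against an 8-bit cache the raw gain is under 3x, and against a 4-bit cache it is about 1.3x.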

The biggest thing: the paper has been sitting around since 2025. Even Google hasn't deployed it widely in the year since the math was first documented.

This is now the second time in 14 months the market has panic-sold memory stocks over an AI efficiency paper. DeepSeek was the first. I believe the thesis was wrong both times and it's just panic.

I have written a full breakdown of this if anyone wants to read it.
