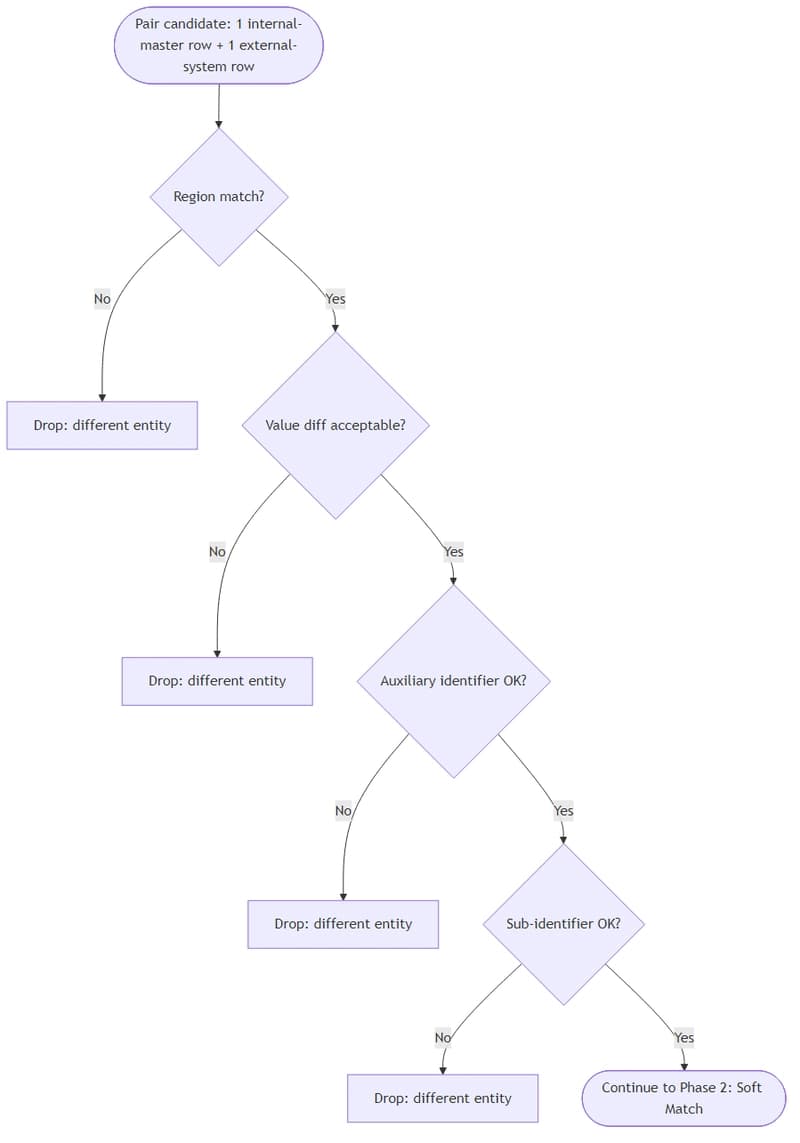

Figure 1 of the DSV4 paper seems to imply that DSV3.2 uses ~50GB at 1m context and DSV4 uses ~5GB:

https://huggingface.co/deepseek-ai/DeepSeek-V4-Pro/blob/main/DeepSeek_V4.pdf

From my own calculations, the correct FP16 KV cache at 1m context should be:

| Model | Params | 128k | 160k | 1m | KV% |
|---|---|---|---|---|---|
| V3.x | 671B | 8.58GiB | 10.72GiB | 68.63GiB | 5.11% |
| V4 Flash | 284B | 0.76GiB | 0.95GiB | 6.08GiB | 1.07% |
| V4 Pro | 1600B | 1.09GiB | 1.36GiB | 8.71GiB | 0.272% |

So the KV cache saving for DSV4 Pro is not 9.5x but 7.879x, which is still very impressive. If you look at the KV% metric, we are seeing close to a 20x gain. This basically obliterates the KV cache usage of all current transformer-SSM hybrid models. But the transformer-SSM crowd can just use DSV4's CSA and HCA on their transformer layers to catch up.
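For anyone who wants to check the KV% column: the post does not define it, so the sketch below assumes KV% is the 1m KV cache divided by the FP16 weight size (2 bytes per parameter). That assumption reproduces the table's numbers, although it divides a GiB figure by a GB figure. The function name `kv_percent` is made up for illustration.

```python
# KV cache at 1m context (GiB) and parameter counts (billions), taken from this post.
models = {
    "V3.x": (68.625, 671),
    "V4 Flash": (6.0824, 284),
    "V4 Pro": (8.70996, 1600),
}

def kv_percent(kv_gib, params_billion, bytes_per_param=2):
    """KV cache as a percentage of FP16 weight size (ASSUMED definition of KV%)."""
    weights_gb = params_billion * bytes_per_param  # FP16 weights, in GB
    return 100 * kv_gib / weights_gb

kv_pct = {name: kv_percent(kv, params) for name, (kv, params) in models.items()}
for name, pct in kv_pct.items():
    print(f"{name}: {pct:.3g}%")

# Ratio of the two KV% values, i.e. the "close to 20x" figure.
gain = kv_pct["V3.x"] / kv_pct["V4 Pro"]
print(f"V3.x vs V4 Pro: {gain:.1f}x")
```

Under that assumption the V3.x to V4 Pro ratio comes out a bit under 19x.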

At this KV cache usage, once DSV4 is supported in llama.cpp, we should easily be able to run 1m context for DSV4 Flash on 256GB RAM and a 3090, or for DSV4 Pro on 1.5TB RAM and an RTX 6000 Blackwell. I suppose the various speed gains mentioned in the paper can make this viable.

While DSV4 Pro doesn't do well on Artificial Analysis, we can expect Kimi and Zhipu to make derivatives of it, so that we get a beast that uses very little KV cache.

All in all, DS is still doing very well as the research backbone of the Chinese AI scene.

PS More detailed calculations for people interested. Please let me know if I did any math wrong:

Based on what I see when actually running V3.2 with llama.cpp, the FP16 KV cache usage for DSV3.2 is 10.72GiB at 160k context and 68.625GiB at a hypothetical 1m context.

This number can be validated with the per token per layer MLA KV cache formula: (kv_lora_rank + qk_rope_head_dim) * precision = (512 + 64) * 2 = 1152 bytes. So for 61 layers and 1m tokens, it will be 1152*61*1024*1024 bytes = 68.625GiB, which is not 50GB.
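The MLA arithmetic above can be checked with a few lines of Python. This is just a sketch of the formula as stated in this post. The function name `mla_kv_cache_bytes` is made up, and "1m" is taken to mean 1024*1024 tokens.

```python
GIB = 1024 ** 3

def mla_kv_cache_bytes(n_layers, n_tokens, kv_lora_rank=512,
                       qk_rope_head_dim=64, bytes_per_elem=2):
    """Total MLA KV cache in bytes: (latent + RoPE key) per token per layer."""
    per_token_per_layer = (kv_lora_rank + qk_rope_head_dim) * bytes_per_elem  # 1152
    return per_token_per_layer * n_layers * n_tokens

# Context lengths used in the table, with k = 1024 and m = 1024 * 1024.
for label, n_tokens in [("128k", 128 * 1024), ("160k", 160 * 1024), ("1m", 1024 * 1024)]:
    size = mla_kv_cache_bytes(n_layers=61, n_tokens=n_tokens)
    print(f"{label}: {size / GIB:.3f} GiB")
```

The 1m row prints 68.625 GiB, matching the V3.x figure in the table.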

On the other hand, DSV4 Pro has 30 CSA layers and 31 HCA layers interleaved. My understanding is that CSA only stores 1/4 of the MLA KV cache, so per token per layer is 288 bytes, and HCA only stores 1/128 of the MLA KV cache, so per token per layer is 9 bytes. Therefore, the KV cache at 1m context is (288*30+9*31)*1024*1024 bytes =~ 8.70996GiB. So the KV cache saving is 7.879x, not 9.5x.
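The same check for DSV4 Pro is sketched below. It takes the post's understanding at face value (CSA keeps 1/4 and HCA keeps 1/128 of the MLA bytes per token). Those ratios are the post's reading of the paper, not something verified here, and the function name is made up.

```python
GIB = 1024 ** 3
MLA_BYTES = (512 + 64) * 2  # 1152 bytes per token per layer, FP16

def csa_hca_kv_bytes(n_csa, n_hca, n_tokens, csa_ratio=4, hca_ratio=128):
    """KV cache in bytes, ASSUMING CSA stores 1/4 and HCA 1/128 of the MLA cache."""
    csa_bytes = MLA_BYTES // csa_ratio  # 288
    hca_bytes = MLA_BYTES // hca_ratio  # 9
    return (csa_bytes * n_csa + hca_bytes * n_hca) * n_tokens

n_tokens = 1024 * 1024
v4_pro = csa_hca_kv_bytes(n_csa=30, n_hca=31, n_tokens=n_tokens)
v3 = MLA_BYTES * 61 * n_tokens  # DSV3.2: 61 plain MLA layers

print(f"V4 Pro: {v4_pro / GIB:.5f} GiB")
print(f"saving vs V3.2: {v3 / v4_pro:.3f}x")
```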

For DSV4 Flash, the first two layers are Sliding Window Attention with a window size of 128 tokens. Normally, for these two layers, the per layer KV cache for any context longer than 128 tokens should be 2*n_head_kv*head_dim*precision*window = 2*1*128*2*128 = 65536 bytes. The current llama.cpp implementation adds 256 to the window size for better batching, so it becomes 2*1*128*2*(128+256) = 196608 bytes.
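The sliding-window numbers can be reproduced the same way. This is a sketch of the formula as written in this post. The `padding` parameter is just a label for the extra 256 the post attributes to llama.cpp, not an actual llama.cpp option.

```python
def swa_kv_bytes_per_layer(window, n_head_kv=1, head_dim=128,
                           bytes_per_elem=2, padding=0):
    """Per-layer SWA KV cache in bytes; the leading 2 covers K and V."""
    return 2 * n_head_kv * head_dim * bytes_per_elem * (window + padding)

plain = swa_kv_bytes_per_layer(window=128)
padded = swa_kv_bytes_per_layer(window=128, padding=256)
print(plain, padded)
```

Either way these two layers cost well under 1MiB in total, so they barely move the 1m figure.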

There are 21 CSA layers and 20 HCA layers for DSV4 Flash, so the KV cache at 1m context is (288*21+9*20)*1024*1024+2*196608 bytes = 6.0824GiB. This is an 11.3x saving compared to DSV3.2, not 13.7x as claimed.
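Putting the DSV4 Flash pieces together, here is a sketch using only the per-layer byte counts quoted in this post (288 for CSA, 9 for HCA, 196608 per padded SWA layer). The variable names are made up.

```python
GIB = 1024 ** 3
N_TOKENS = 1024 * 1024  # "1m" context

CSA_BYTES = 288           # per token per layer, as assumed in the post
HCA_BYTES = 9             # per token per layer, as assumed in the post
SWA_LAYER_BYTES = 196608  # per layer, fixed size once context exceeds the window

flash = (CSA_BYTES * 21 + HCA_BYTES * 20) * N_TOKENS + 2 * SWA_LAYER_BYTES
v3 = 1152 * 61 * N_TOKENS  # DSV3.2 MLA baseline

print(f"V4 Flash: {flash / GIB:.4f} GiB")
print(f"saving vs V3.2: {v3 / flash:.1f}x")
```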
