Differentiable Power-Flow Optimization

arXiv cs.AI / 3/31/2026


Key Points

- The paper introduces Differentiable Power-Flow (DPF), a reformulation of the AC power-flow problem into a differentiable simulation that supports end-to-end gradient propagation from power mismatches to model parameters.

- DPF aims to address the scalability limits of conventional Newton-Raphson (NR) AC power-flow methods while improving on purely data-driven surrogates, which may not guarantee that physical constraints are satisfied.

- The approach is designed to run efficiently on GPUs, using sparse tensor representations and the batching features of frameworks like PyTorch, which makes it a scalable alternative to Newton-Raphson.

- The authors highlight three applications: time-series analysis, by reusing previous solutions; N-1 contingency analysis, via batched processing; and fast screening, via speed combined with early stopping.

- The work is posted on arXiv and includes a link to the authors’ code repository for adoption and experimentation.
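To make the idea in the points above concrete, here is a minimal PyTorch sketch of power flow posed as a differentiable mismatch minimization. It is not the authors' implementation: the function names (`build_admittance`, `mismatch`, `solve`), the 3-bus network data, the dense matrices (the paper describes sparse tensors), and the choice of the L-BFGS optimizer are all assumptions made for illustration. Bus 0 is treated as the slack bus and every other bus as a PQ bus.

```python
import torch

def build_admittance(n_bus, lines):
    """Real (G) and imaginary (B) parts of the bus admittance matrix.
    lines: list of (from_bus, to_bus, r, x) in per unit (hypothetical data)."""
    G = torch.zeros(n_bus, n_bus, dtype=torch.float64)
    B = torch.zeros(n_bus, n_bus, dtype=torch.float64)
    for i, j, r, x in lines:
        y = 1.0 / complex(r, x)  # series admittance of the line
        for a, b, sign in ((i, i, 1), (j, j, 1), (i, j, -1), (j, i, -1)):
            G[a, b] += sign * y.real
            B[a, b] += sign * y.imag
    return G, B

def mismatch(theta, vm, G, B, p_spec, q_spec):
    """Sum of squared P/Q mismatches over the non-slack buses.
    Leading batch dimensions are broadcast, so one call covers many cases."""
    dtheta = theta.unsqueeze(-1) - theta.unsqueeze(-2)  # theta_i - theta_j
    vv = vm.unsqueeze(-1) * vm.unsqueeze(-2)            # |V_i| * |V_j|
    p = (vv * (G * torch.cos(dtheta) + B * torch.sin(dtheta))).sum(-1)
    q = (vv * (G * torch.sin(dtheta) - B * torch.cos(dtheta))).sum(-1)
    dp = p[..., 1:] - p_spec[..., 1:]
    dq = q[..., 1:] - q_spec[..., 1:]
    return (dp ** 2 + dq ** 2).sum(-1)

def solve(G, B, p_spec, q_spec, max_iter=200):
    """Minimize the mismatch with autograd gradients, starting from a flat start."""
    batch, n = G.shape[:-2], G.shape[-1]
    theta_free = torch.zeros(*batch, n - 1, dtype=G.dtype, requires_grad=True)
    vm_free = torch.ones(*batch, n - 1, dtype=G.dtype, requires_grad=True)
    slack_theta = torch.zeros(*batch, 1, dtype=G.dtype)  # slack angle fixed at 0
    slack_vm = torch.ones(*batch, 1, dtype=G.dtype)      # slack magnitude fixed at 1

    def full_state():
        return (torch.cat([slack_theta, theta_free], -1),
                torch.cat([slack_vm, vm_free], -1))

    # Optimizer choice is an assumption; the tolerances act as a stopping rule.
    opt = torch.optim.LBFGS([theta_free, vm_free], max_iter=max_iter,
                            tolerance_grad=1e-10, tolerance_change=1e-14,
                            line_search_fn="strong_wolfe")

    def closure():
        opt.zero_grad()
        theta, vm = full_state()
        loss = mismatch(theta, vm, G, B, p_spec, q_spec).sum()
        loss.backward()  # gradients flow from the mismatches to the bus state
        return loss

    opt.step(closure)
    with torch.no_grad():
        theta, vm = full_state()
        return theta, vm, mismatch(theta, vm, G, B, p_spec, q_spec)

# Hypothetical 3-bus triangle; negative injections are loads (per unit).
lines = [(0, 1, 0.01, 0.1), (1, 2, 0.01, 0.1), (0, 2, 0.01, 0.1)]
p_spec = torch.tensor([0.0, -0.5, -0.3], dtype=torch.float64)
q_spec = torch.tensor([0.0, -0.2, -0.1], dtype=torch.float64)

# Base case: a single network.
G, B = build_admittance(3, lines)
theta, vm, err = solve(G, B, p_spec, q_spec)

# N-1 screening: one admittance matrix per single-line outage, solved as a batch.
outages = [build_admittance(3, lines[:k] + lines[k + 1:]) for k in range(len(lines))]
G_b = torch.stack([g for g, _ in outages])
B_b = torch.stack([b for _, b in outages])
theta_b, vm_b, err_b = solve(G_b, B_b, p_spec, q_spec)
```

Because `mismatch` broadcasts over leading dimensions, the same code handles the base case and the stacked outage cases; `err_b` holds one residual per contingency, so cases that fail to converge can be flagged individually. Loosening the tolerances or lowering `max_iter` gives a rough form of the early stopping mentioned above.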

Related Articles

Black Hat Asia

AI Business

How to Verify Information Online and Avoid Fake Content

Dev.to

I built an AI code reviewer solo while working full-time — honest post-launch breakdown

Dev.to

Mobile App MVP: Build, Launch, and Validate in Under a Week

Dev.to

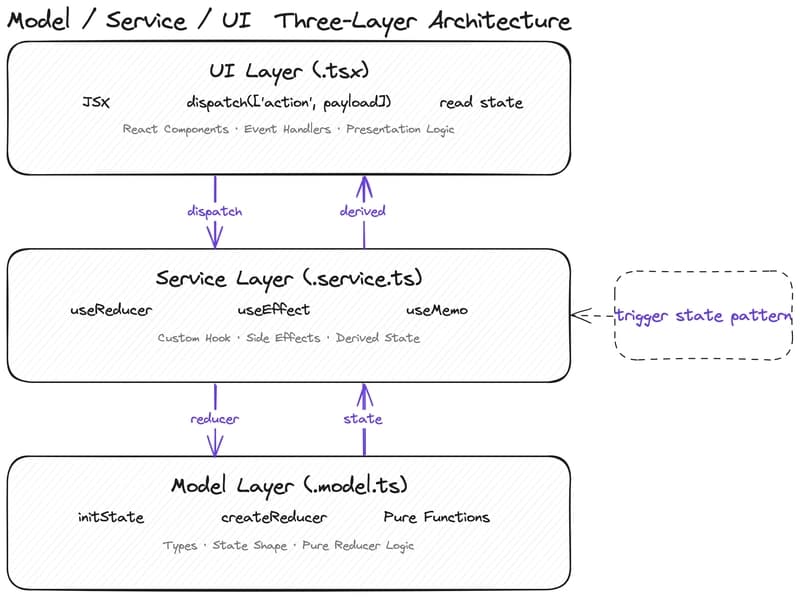

Why Your State Management Is Slowing Down AI-Assisted Development

Dev.to