A unifying view of contrastive learning, importance sampling, and bridge sampling for energy-based models

arXiv stat.ML / 4/10/2026


Key Points

- The paper proposes a unified framework for training and parameter estimation in energy-based models (EBMs) by linking noise contrastive estimation (NCE), reverse logistic regression (RLR), multiple importance sampling (MIS), and bridge sampling under a common perspective.

- It shows that these seemingly different estimators can be equivalent when certain conditions hold, clarifying how existing EBM inference methods relate to one another.

- The authors use this unifying view to explain why NCE is often flexible and robust, while also outlining specific scenarios where its performance can be improved.

- Beyond synthesizing prior methods, the framework opens the door to new estimators, with the aim of improving both statistical and computational efficiency.

- Reproducibility is supported by releasing the MATLAB code used in the numerical experiments.
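
To make the connection between NCE and logistic regression concrete, here is a minimal Python sketch (not the authors' MATLAB code). It assumes a toy setting that does not come from the paper: the model is the unnormalized standard Gaussian exp(-x^2/2), the noise distribution is N(0, 2^2), and the only unknown parameter is the log normalizing constant. The names `nce_log_z`, `log_unnorm_model`, `log_noise`, and `NOISE_SCALE` are hypothetical. NCE fits log Z by training a logistic classifier to tell data samples from noise samples. With a single scalar parameter the objective is concave, so the sketch finds the maximizer by bisection on the gradient.

```python
import math
import random

NOISE_SCALE = 2.0  # assumed noise standard deviation (illustrative choice)


def log_unnorm_model(x):
    # Unnormalized log-density of the toy model: log exp(-x^2 / 2).
    return -0.5 * x * x


def log_noise(x):
    # Normalized log-density of the noise distribution N(0, NOISE_SCALE^2).
    return (-0.5 * (x / NOISE_SCALE) ** 2
            - math.log(NOISE_SCALE * math.sqrt(2.0 * math.pi)))


def sigmoid(t):
    # Numerically stable logistic function.
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def nce_log_z(data, noise, lo=-20.0, hi=20.0, iters=60):
    """Estimate log Z by maximizing the NCE (logistic) objective over log Z."""
    # Log-ratios of unnormalized model density to noise density.
    r_data = [log_unnorm_model(x) - log_noise(x) for x in data]
    r_noise = [log_unnorm_model(y) - log_noise(y) for y in noise]
    log_nu = math.log(len(noise) / len(data))  # noise-to-data sample ratio

    def grad(log_z):
        # Derivative of the NCE objective with respect to log Z.
        # The classifier logit is G(u) = r(u) - log_z - log_nu.
        g = 0.0
        for r in r_data:
            g -= 1.0 - sigmoid(r - log_z - log_nu)
        for r in r_noise:
            g += sigmoid(r - log_z - log_nu)
        return g

    # The gradient is strictly decreasing in log_z (positive at lo,
    # negative at hi), so bisection locates the unique maximizer.
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if grad(mid) > 0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


if __name__ == "__main__":
    random.seed(0)
    data = [random.gauss(0.0, 1.0) for _ in range(5000)]
    noise = [random.gauss(0.0, NOISE_SCALE) for _ in range(5000)]
    print("NCE estimate of log Z:", nce_log_z(data, noise))
    print("True log Z:           ", 0.5 * math.log(2.0 * math.pi))
```

In this restricted setting, where only the normalizing constant is unknown, setting the gradient to zero gives a fixed-point equation of the same form as those used in reverse logistic regression and bridge sampling. That is one simple instance of the kind of equivalence the summary describes. The exact conditions under which the estimators coincide are those stated in the paper, not anything this sketch establishes.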

Related Articles

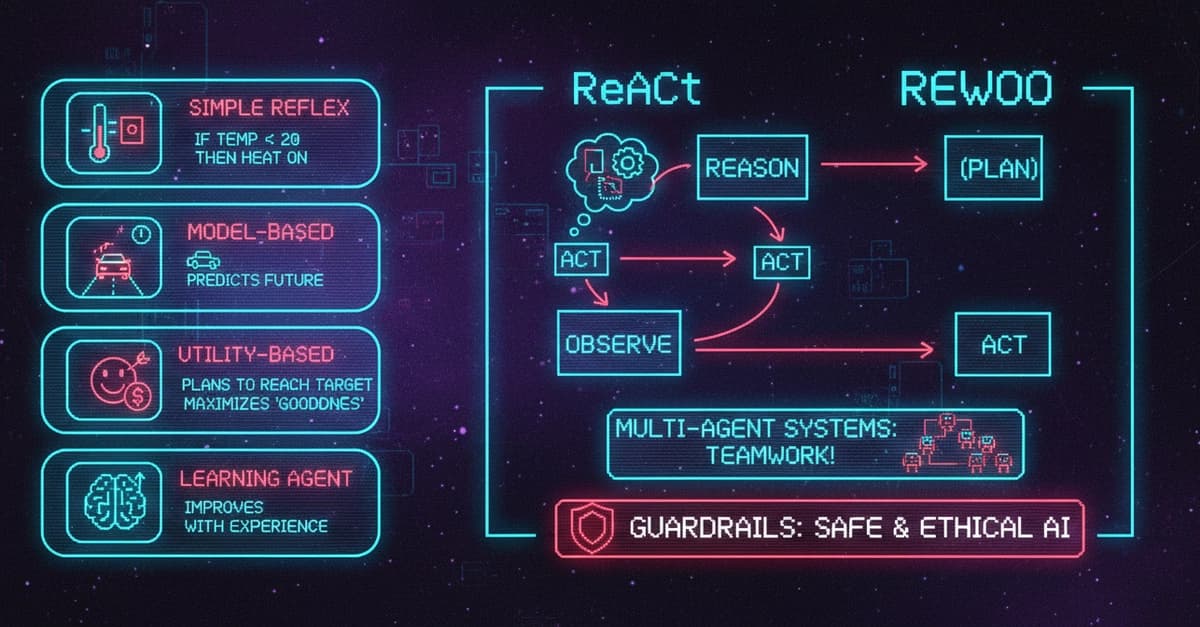

AI Agents Explained: 5 Types, Components, Frameworks, and Real-World Use Cases

Dev.to

Edge-to-Cloud Swarm Coordination for circular manufacturing supply chains with embodied agent feedback loops

Dev.to

Why QIS Is Not a Sync Problem: The Mailbox Model for Distributed Intelligence

Dev.to

The Ethics of AI: A Developer's Responsibility

Dev.to

Graph2Seq: Graph to Sequence Learning with Attention-based Neural Networks

Dev.to