Counteractive RL: Rethinking Core Principles for Efficient and Scalable Deep Reinforcement Learning

arXiv cs.LG / 3/18/2026

📰 News · Ideas & Deep Analysis · Models & Research

Key Points

- The paper introduces Counteractive RL, a novel paradigm that uses counteractive actions to improve learning efficiency in high-dimensional MDPs.

- It provides a theoretically founded basis for efficient, scalable, and accelerated learning with zero additional computational complexity.

- It reports extensive experiments in the Arcade Learning Environment showing significant gains in performance and sample efficiency on tasks with high-dimensional state representations.

- It addresses the challenge of exponential state-space growth by reframing how the agent interacts with the environment during learning, enabling faster policy optimization.

Related Articles

MCP Is Quietly Replacing APIs — And Most Developers Haven't Noticed Yet

Dev.to

I Built a Self-Healing AI Trading Bot That Learns From Every Failure

Dev.to

Stop Guessing Your API Costs: Track LLM Tokens in Real Time

Dev.to

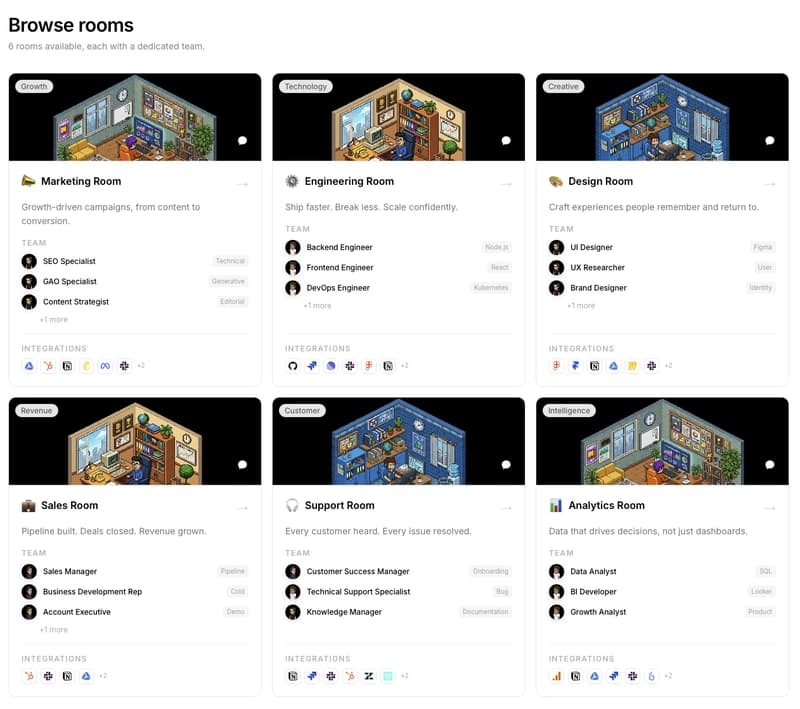

We are building PixelRooms! The marketplace of AI teams for thepixeloffice.ai

Dev.to

Every real estate agent tool worth your time in 2026, ranked and rated

Dev.to