CRISP: Compressing Redundancy in Chain-of-Thought via Intrinsic Saliency Pruning

arXiv cs.CL / 4/21/2026


Key Points

- The paper introduces CRISP, a framework that compresses long chain-of-thought (CoT) reasoning by leveraging the model’s intrinsic saliency rather than external compressors.

- It finds that the reasoning termination token serves as an “information anchor,” with attention patterns that separate essential reasoning from redundant parts.

- CRISP uses these internal attention signals to guide fine-grained (“atomic”) compression operations, aiming to keep logical coherence while increasing information density.

- Experiments across multiple backbone models and mathematical datasets show CRISP reduces token counts by 50–60% without sacrificing accuracy, addressing computational overhead and latency in long-context reasoning.

- The authors open-source their implementation to support further research on efficient reasoning methods.
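
To make the idea of saliency-guided pruning concrete, here is a minimal toy sketch. It is not the authors' implementation, and this summary does not describe CRISP's actual compression operations. It assumes we already have one attention weight per CoT token, read from the termination token's position, and it simply keeps the highest-scoring reasoning steps. The function names `step_saliency` and `prune_steps`, the `keep_ratio` parameter, and all numeric values are hypothetical and chosen only for illustration.

```python
import math
from typing import List, Sequence, Tuple


def step_saliency(anchor_attention: Sequence[float],
                  step_spans: Sequence[Tuple[int, int]]) -> List[float]:
    """Score each reasoning step by the mean attention it receives.

    anchor_attention[i] is the (assumed) attention weight from the
    termination token to CoT token i; each span is [start, end).
    """
    scores = []
    for start, end in step_spans:
        width = end - start
        scores.append(sum(anchor_attention[start:end]) / width if width > 0 else 0.0)
    return scores


def prune_steps(steps: Sequence[str], scores: Sequence[float],
                keep_ratio: float = 0.5) -> List[str]:
    """Keep the top `keep_ratio` fraction of steps, in their original order."""
    if len(steps) != len(scores):
        raise ValueError("steps and scores must have the same length")
    n_keep = max(1, math.ceil(len(steps) * keep_ratio))
    ranked = sorted(range(len(steps)), key=lambda i: scores[i], reverse=True)
    keep = sorted(ranked[:n_keep])  # restore original ordering
    return [steps[i] for i in keep]


# Toy example: four steps of two tokens each, with made-up attention weights.
steps = [
    "Let x be the number.",
    "Hmm, let me re-read the problem.",
    "2x + 3 = 11, so x = 4.",
    "Wait, double-check: 2*4 + 3 = 11.",
]
spans = [(0, 2), (2, 4), (4, 6), (6, 8)]
attention = [0.20, 0.10, 0.02, 0.02, 0.30, 0.26, 0.05, 0.05]

scores = step_saliency(attention, spans)
kept = prune_steps(steps, scores, keep_ratio=0.5)
print(kept)
```

With these illustrative weights, the two steps that carry the setup and the solution are kept, while the re-reading and double-checking steps are dropped. A step-level top-k filter like this is much coarser than the fine-grained "atomic" operations the summary mentions, so treat it only as an intuition aid.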

Related Articles

Agent Package Manager (APM): A DevOps Guide to Reproducible AI Agents

Dev.to

3 Things I Learned Benchmarking Claude, GPT-4o, and Gemini on Real Dev Work

Dev.to

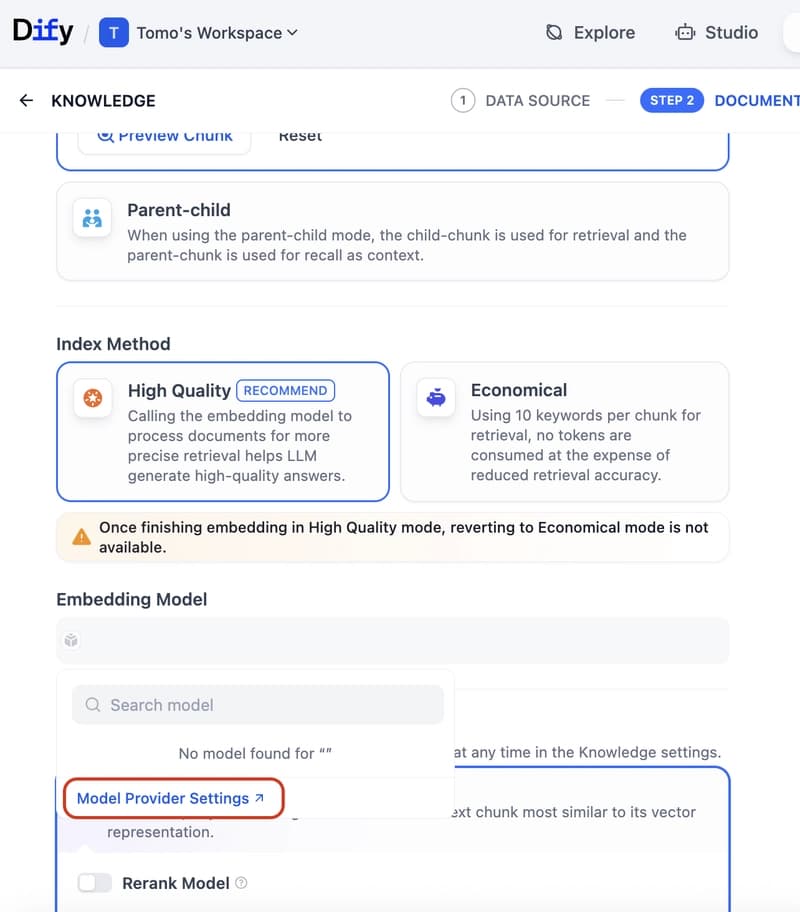

Dify Now Supports IRIS as a Vector Store — Setup Guide

Dev.to

How to build a Claude chatbot with streaming responses in under 50 lines of Node.js

Dev.to

Open Source Contributors Needed for Skillware & Rooms (AI/ML/Python)

Dev.to