6th April 2026 - Link Blog

Google AI Edge Gallery. Terrible name, really great app: this is Google's official app for running their Gemma 4 models (the E2B and E4B sizes, plus some members of the Gemma 3 family) directly on your iPhone.

It works really well. The E2B model is a 2.54GB download and is both fast and genuinely useful.

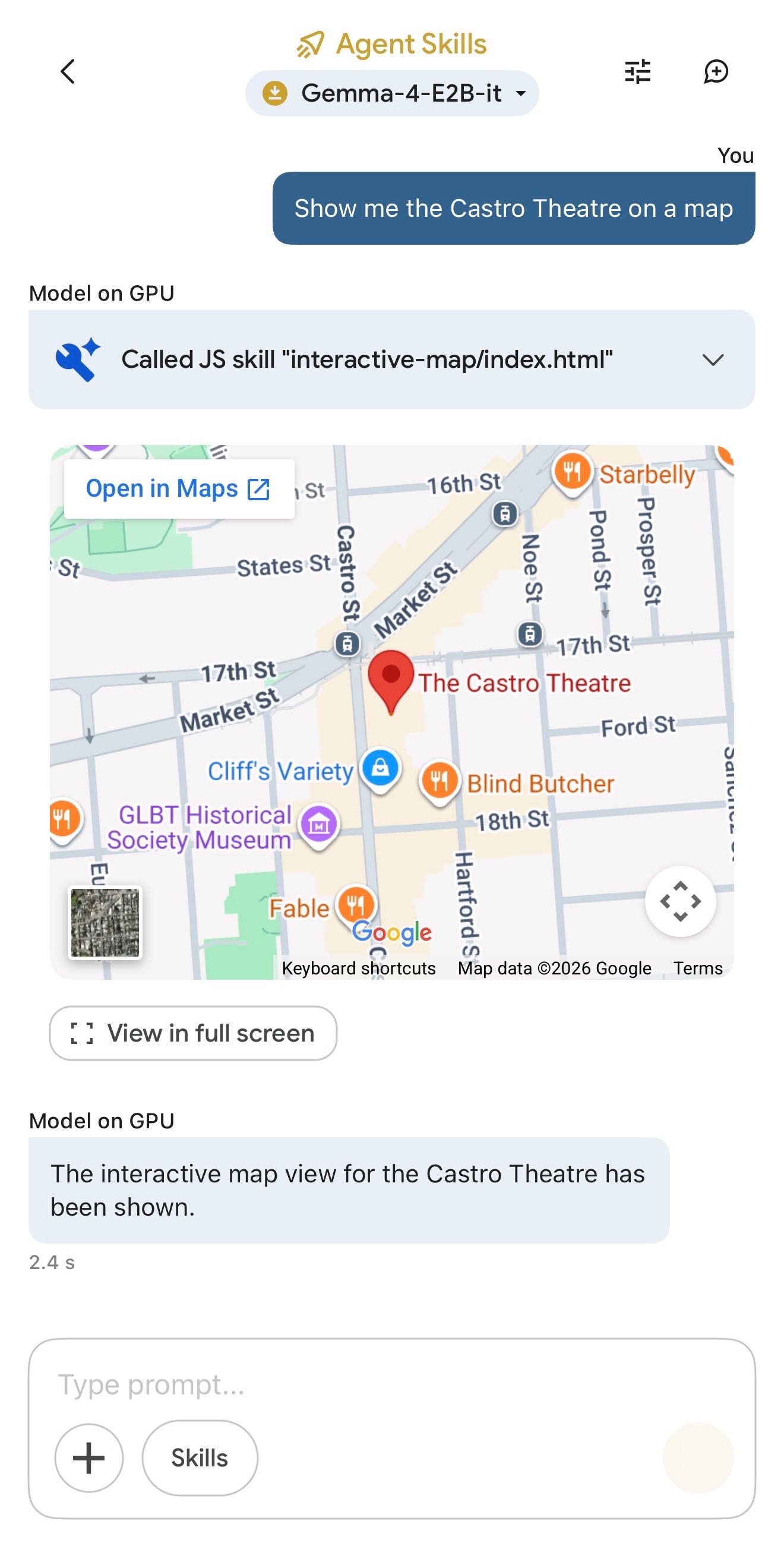

The app also provides "ask questions about images" and audio transcription (up to 30 seconds) with the two small Gemma 4 models. It has an interesting "skills" demo too, which shows off tool calling against eight different interactive widgets, each implemented as an HTML page (though sadly the source code is not visible): interactive-map, kitchen-adventure, calculate-hash, text-spinner, mood-tracker, mnemonic-password, query-wikipedia, and qr-code.

(That demo did freeze the app when I tried to add a follow-up prompt though.)
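Since the widget source isn't visible, here is a minimal sketch of the general tool-calling pattern the skills demo appears to exercise: the model emits a structured request naming a tool, and the host app looks that tool up and runs it. Everything here is an assumption for illustration. The JSON shape, the `dispatch` helper, and the `calculate_hash` implementation are hypothetical and are not taken from the app; only the tool name `calculate-hash` comes from the widget list.

```python
import hashlib
import json

# Hypothetical implementation of a "calculate-hash" style tool.
# The real widget is an HTML page whose source is not visible.
def calculate_hash(text: str, algorithm: str = "sha256") -> str:
    """Return the hex digest of text using the named hashlib algorithm."""
    return hashlib.new(algorithm, text.encode("utf-8")).hexdigest()

# Registry mapping tool names (as the model would emit them) to functions.
TOOLS = {
    "calculate-hash": calculate_hash,
}

def dispatch(tool_call_json: str) -> str:
    """Parse a model-emitted JSON tool call and run the matching tool."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in TOOLS:
        return f"error: unknown tool {name!r}"
    return str(TOOLS[name](**call.get("arguments", {})))

# Simulated model output requesting a tool call (assumed format).
model_output = json.dumps(
    {"name": "calculate-hash", "arguments": {"text": "hello", "algorithm": "md5"}}
)
print(dispatch(model_output))
```

In a real app the string returned by `dispatch` would be fed back to the model as the tool result, so it can use it in its reply.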

This is the first time I've seen a local model vendor release an official app for trying out their models on an iPhone. Sadly it's missing permanent logs - conversations with this app are ephemeral.

This is a link post by Simon Willison, posted on 6th April 2026.
