AcceRL: A Distributed Asynchronous Reinforcement Learning and World Model Framework for Vision-Language-Action Models

arXiv cs.LG / 3/20/2026


Key Points

- AcceRL proposes a fully asynchronous and decoupled RL framework that separates training, inference, and rollouts to remove synchronization bottlenecks in Vision-Language-Action models.

- The authors describe it as the first framework to integrate a plug-and-play, trainable world model into a distributed asynchronous RL pipeline, where the world model is used to generate virtual experiences.

- Experiments on the LIBERO benchmark show that AcceRL achieves state-of-the-art performance.

- The framework exhibits super-linear scaling in throughput and highly efficient hardware utilization.

- The authors report that the world-model-augmented variant substantially improves sample efficiency and keeps training stable in complex control tasks.
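
The decoupling described in the first key point can be illustrated with a minimal sketch. This is not AcceRL's implementation: it uses Python threads and in-process queues as stand-ins for distributed workers, and every name in it (`PolicyStore`, `inference_server`, `rollout_worker`, `trainer`, `run_async_demo`) is hypothetical. The model forward pass and the gradient step are replaced by trivial placeholders, and the world model is omitted. The only point it shows is that rollouts, inference, and training communicate through queues, so the trainer never blocks the rollout workers and rollouts may be collected with a slightly stale policy version.

```python
import queue
import threading


class PolicyStore:
    """Hypothetical shared holder for the latest policy version."""

    def __init__(self):
        self._lock = threading.Lock()
        self._version = 0

    def publish(self):
        # Stand-in for broadcasting updated weights to the inference side.
        with self._lock:
            self._version += 1

    def version(self):
        with self._lock:
            return self._version


def inference_server(request_q, store):
    """Answer action requests using whichever policy version is current."""
    while True:
        item = request_q.get()
        if item is None:  # shutdown sentinel
            return
        obs, reply_q = item
        action = obs % 3  # placeholder for a real model forward pass
        reply_q.put((action, store.version()))


def rollout_worker(worker_id, steps, request_q, experience_q):
    """Collect transitions; waits only for its own action, never for the trainer."""
    reply_q = queue.Queue(maxsize=1)
    for step in range(steps):
        obs = worker_id * 1000 + step  # placeholder for an environment observation
        request_q.put((obs, reply_q))
        action, version = reply_q.get()
        experience_q.put({"obs": obs, "action": action, "policy_version": version})


def trainer(experience_q, store, batch_size, total, stats):
    """Consume experience as it arrives and publish a new version per full batch."""
    batch = []
    for _ in range(total):
        batch.append(experience_q.get())
        if len(batch) == batch_size:
            store.publish()  # placeholder for a gradient step
            stats["updates"] += 1
            batch.clear()
    stats["transitions"] = total


def run_async_demo(num_workers=4, steps_per_worker=25, batch_size=10):
    request_q, experience_q = queue.Queue(), queue.Queue()
    store = PolicyStore()
    stats = {"updates": 0, "transitions": 0}
    total = num_workers * steps_per_worker

    infer = threading.Thread(target=inference_server, args=(request_q, store))
    learn = threading.Thread(
        target=trainer, args=(experience_q, store, batch_size, total, stats)
    )
    workers = [
        threading.Thread(
            target=rollout_worker, args=(i, steps_per_worker, request_q, experience_q)
        )
        for i in range(num_workers)
    ]

    for t in [infer, learn, *workers]:
        t.start()
    for t in workers:
        t.join()
    request_q.put(None)  # all rollouts finished; stop the inference loop
    infer.join()
    learn.join()

    stats["final_policy_version"] = store.version()
    return stats


if __name__ == "__main__":
    print(run_async_demo())
```

In this toy setup the `policy_version` stored with each transition can lag behind the trainer's latest version. That lag is the staleness an asynchronous design accepts in exchange for removing synchronization barriers. A real system would place each role on separate processes or devices and would need an off-policy correction, which this sketch does not attempt.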

Related Articles

Interactive Web Visualization of GPT-2

Reddit r/artificial

From infrastructure to AI: how Alibaba Cloud powers the global ambitions of Chinese companies

SCMP Tech

[R] Causal self-attention as a probabilistic model over embeddings

Reddit r/MachineLearning

The 5 software development trends that actually matter in 2026 (and what they mean for your startup)

Dev.to

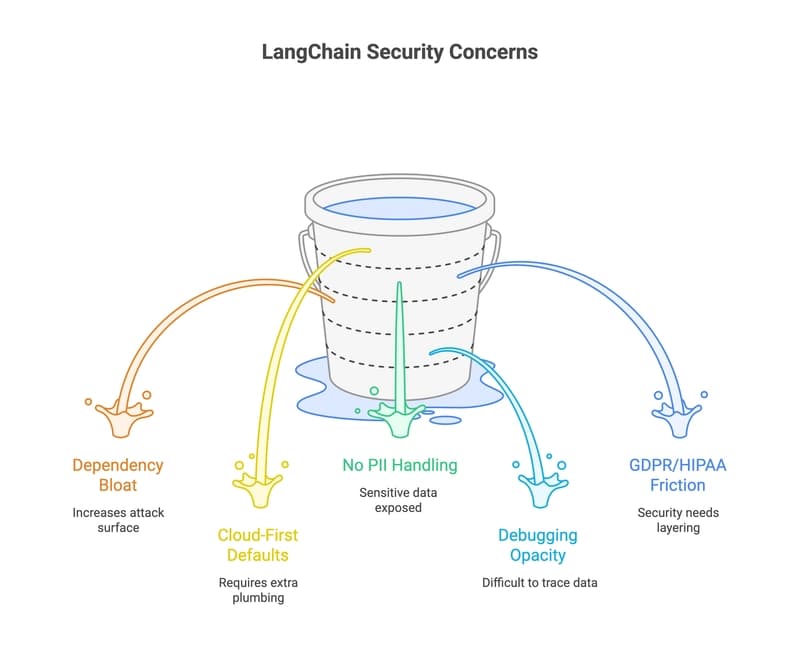

33 LangChain Alternatives That Won't Leak Your Data (2026 Guide)

Dev.to