submitted by /u/tekz

Anthropic researchers detail “model spec midtraining”, which adds a stage between pretraining and fine-tuning to improve generalization from alignment training

Reddit r/artificial / 5/7/2026

💬 Opinion | Models & Research

Key Points

- Anthropic researchers propose “model spec midtraining,” a training stage inserted between pretraining and fine-tuning to improve how well alignment training generalizes.

- The approach aims to make alignment training transfer more effectively to new or unseen situations, reducing overfitting to alignment objectives.

- The method is described as a structural change to the training pipeline rather than a single new algorithm or tool.

- The work suggests that this additional midtraining phase helps alignment-tuned behavior remain more robust across different contexts.

Related Articles

Decoupled DiLoCo: A new frontier for resilient, distributed AI training

Dev.to

Are You Still Coding — or Just an AI Manager Now?

Dev.to

Aurora’s Chris Urmson on why self-driving trucks are finally ready to scale

TechCrunch

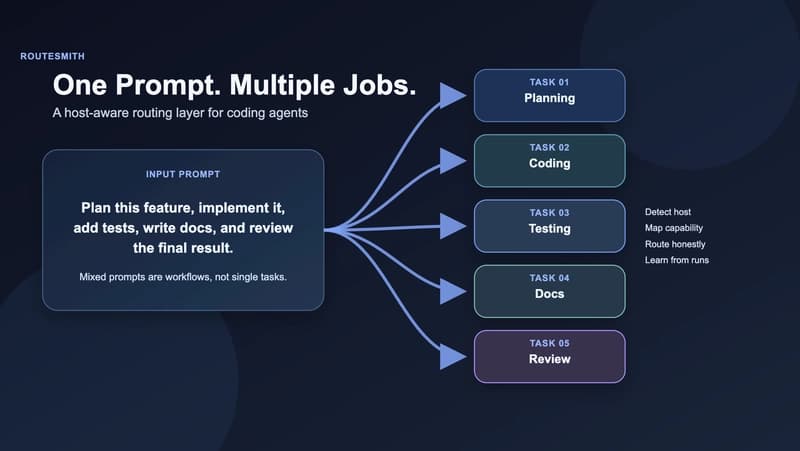

Stop Treating Mixed Prompts Like One Task: Why I Built RouteSmith

Dev.to

Your Blockchain Can't Tell What's an AI

Dev.to