Decision Boundary-aware Generation for Long-tailed Learning

arXiv cs.CV / 5/5/2026

Key Points

- In long-tailed learning, decision boundaries are biased toward head classes (those with many training samples), which reduces accuracy on tail classes.

- Prior methods based on diffusion-driven generative augmentation and head-to-tail transfer can partially rebalance the decision space, but they may also introduce latent non-local feature mixing, which leads to boundary overlap and shifts tail-class distributions.

- The paper identifies “boundary ambiguity” as a key failure mode and introduces a Decision Boundary-aware Generation (DBG) framework that generates informative samples near decision boundaries.

- Experiments on standard long-tailed benchmarks show that DBG improves both tail-class and overall accuracy, while reducing inter-class overlap compared with existing approaches.

- The authors provide an implementation of DBG on GitHub for reproducibility and further research use.
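
To give a rough sense of what "samples near decision boundaries" can mean in practice, the sketch below scores candidate samples by the gap between their two highest class probabilities and keeps the ones with a small gap. This is an illustrative assumption, not the DBG method: the summary does not describe how DBG selects or generates samples, and the names `top2_margin`, `select_near_boundary`, and the `threshold` value are hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of raw classifier scores."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top2_margin(logits):
    """Gap between the two largest class probabilities.

    A small gap means the classifier is torn between two classes,
    which is one simple proxy for being close to a decision boundary.
    """
    probs = sorted(softmax(logits), reverse=True)
    return probs[0] - probs[1]


def select_near_boundary(candidates, threshold=0.2):
    """Keep candidates whose top-2 margin is below `threshold` (hypothetical value)."""
    return [c for c in candidates if top2_margin(c["logits"]) < threshold]


# Toy logits for three generated candidates over three classes (made-up numbers).
candidates = [
    {"id": "a", "logits": [4.0, 0.5, 0.1]},    # confidently class 0
    {"id": "b", "logits": [1.1, 1.0, -2.0]},   # close call between classes 0 and 1
    {"id": "c", "logits": [0.2, 0.1, 0.15]},   # close call among all three classes
]

kept = [c["id"] for c in select_near_boundary(candidates)]
print(kept)
```

In this toy setup the confidently classified candidate is dropped and the two ambiguous ones are kept. A real pipeline would also need to guard against the boundary overlap described above, which a margin filter alone does not address.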

Related Articles

Singapore's Fraud Frontier: Why AI Scam Detection Demands Regulatory Precision

Dev.to

From OOM to 262K Context: Running Qwen3-Coder 30B Locally on 8GB VRAM

Dev.to

Nano Banana Pro vs DALL-E 3 vs Midjourney: A Practical Comparison From Someone Who Actually Uses All Three

Dev.to

LLMs edited 86 human essays toward a semantic cluster not occupied by any human writer [D]

Reddit r/MachineLearning

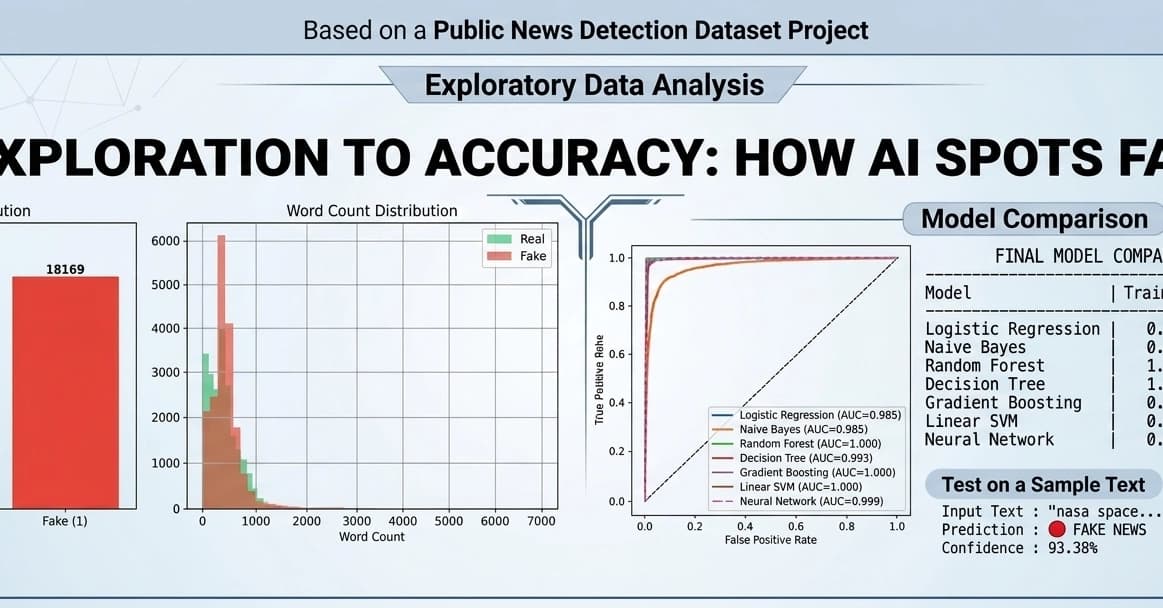

Fake News Detection using Machine Learning & NLP!

Dev.to