PilotBench: A Benchmark for General Aviation Agents with Safety Constraints

arXiv cs.AI / 4/13/2026


Key Points

- PilotBench is a new benchmark that tests whether LLM-based agents can predict flight trajectory and attitude, both safety-critical quantities, while respecting explicit safety constraints.

- The benchmark is built from 708 real-world general aviation trajectories across nine distinct flight phases, using synchronized 34-channel telemetry to evaluate both semantic reasoning and physics-governed prediction.

- A new composite metric, Pilot-Score, combines 60% regression accuracy with 40% instruction adherence and safety compliance to measure performance in a balanced way.

- Across 41 evaluated models, traditional forecasters achieve better numeric precision (lower MAE), while LLMs are more controllable (better instruction following) but less precise, a tradeoff described as a “Precision-Controllability Dichotomy.”

- Phase-stratified results show LLM performance degrades sharply in high-workload phases (e.g., Climb and Approach), motivating hybrid systems that pair LLM symbolic reasoning with specialized numerical forecasters.
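
To make the 60/40 weighting and the phase-stratified comparison concrete, here is a minimal sketch. It is not the benchmark's actual implementation: the summary does not say how MAE is normalized into a regression score or how instruction adherence and safety compliance are combined, so this sketch assumes both components arrive as scores already scaled to [0, 1]. The names `pilot_score` and `mae_by_phase` are hypothetical.

```python
from collections import defaultdict

# Weights taken from the summary: 60% regression accuracy,
# 40% instruction adherence and safety compliance.
REGRESSION_WEIGHT = 0.6
COMPLIANCE_WEIGHT = 0.4


def pilot_score(regression_score: float, compliance_score: float) -> float:
    """Weighted composite of two scores.

    Assumption: both inputs are already normalized to [0, 1], higher is
    better. The real normalization used by PilotBench is not given in the
    summary.
    """
    for name, value in (("regression_score", regression_score),
                        ("compliance_score", compliance_score)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")
    return REGRESSION_WEIGHT * regression_score + COMPLIANCE_WEIGHT * compliance_score


def mae_by_phase(records):
    """Mean absolute error grouped by flight phase.

    `records` is an iterable of (phase, predicted, actual) tuples; this
    layout is an assumption made for illustration.
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for phase, predicted, actual in records:
        totals[phase] += abs(predicted - actual)
        counts[phase] += 1
    return {phase: totals[phase] / counts[phase] for phase in totals}
```

Under these assumptions, a model with perfect regression accuracy but only half-compliant outputs would score about 0.8, and `mae_by_phase` lets errors in phases such as Climb and Approach be compared against the rest of the flight.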

Related Articles

Small NSFW model for chatbot

Reddit r/LocalLLaMA

ChatGPT for Nurses: Prompts That Help You Document, Communicate, and Study

Dev.to

I Added a Stopwatch to My AI in 1 LOC Using the Livingrimoire While Corporations Need a Year

Dev.to

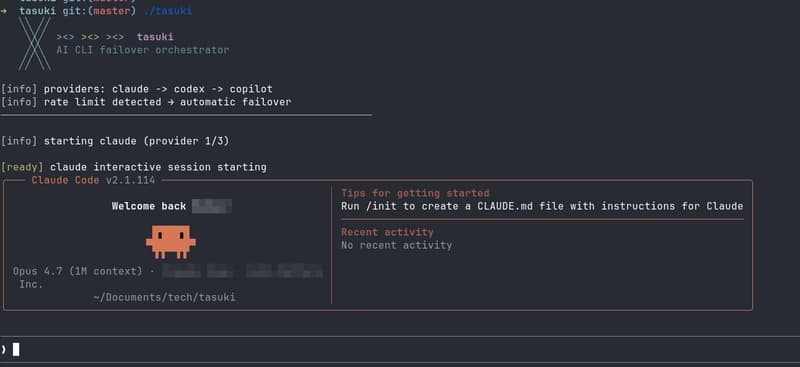

Built tasuki — an AI CLI Orchestrator that Seamlessly Hands Off Between Tools

Dev.to

I built a GNOME extension for Codex with local/remote history, live filters, Markdown export, and a read-only MCP server

Reddit r/artificial