Event-Adaptive State Transition and Gated Fusion for RGB-Event Object Tracking

arXiv cs.AI / 4/16/2026

Key Points

- The paper argues that current RGB-Event (RGBE) object tracking models built on Vision Mamba use fixed state-transition matrices that do not adapt to fluctuations in event sparsity, hurting cross-modal fusion robustness.

- It introduces MambaTrack, a multimodal tracking framework based on a Dynamic State Space Model (DSSM) with an event-adaptive state transition mechanism that modulates transition behavior according to event stream density.

- The framework includes a Gated Projection Fusion (GPF) module that projects RGB features into the event feature space and uses gates derived from event density and RGB confidence to control fusion strength.

- Experiments report state-of-the-art results on the FE108 and FELT datasets, and the authors claim the lightweight design could support real-time embedded deployment.
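
The key points do not give MambaTrack's actual transition formula. As a rough illustration of what an event-adaptive state transition could look like, here is a minimal Python sketch. It assumes a Mamba-style diagonal state space model in which the discretization step `dt` grows with a per-step event density. The function name `event_adaptive_scan`, the linear rule `dt = base_dt * (1 + alpha * density)`, and the parameters `base_dt` and `alpha` are hypothetical and do not come from the paper.

```python
import math
from typing import List, Sequence


def event_adaptive_scan(
    inputs: Sequence[float],
    densities: Sequence[float],
    a_diag: Sequence[float],
    b: Sequence[float],
    c: Sequence[float],
    base_dt: float = 0.1,
    alpha: float = 2.0,
) -> List[float]:
    """Diagonal SSM scan whose discretization step depends on event density.

    inputs:    scalar input x_t per step (one channel, for clarity)
    densities: event density d_t per step, assumed normalized to [0, 1]
    a_diag:    diagonal of the continuous-time A; entries must be negative
    b, c:      per-state input and output weights
    base_dt:   hypothetical step size when no events arrive
    alpha:     hypothetical strength of the density modulation
    """
    if len(inputs) != len(densities):
        raise ValueError("inputs and densities must have the same length")
    state = [0.0] * len(a_diag)
    outputs: List[float] = []
    for x_t, d_t in zip(inputs, densities):
        # Assumed rule: denser event streams produce a larger step size.
        dt = base_dt * (1.0 + alpha * d_t)
        for i, a in enumerate(a_diag):
            # Zero-order-hold discretization of one scalar state:
            # A_bar = exp(dt * a), B_bar = (A_bar - 1) / a * b
            a_bar = math.exp(dt * a)
            b_bar = (a_bar - 1.0) / a * b[i]
            state[i] = a_bar * state[i] + b_bar * x_t
        # Readout y_t = C h_t
        outputs.append(sum(ci * hi for ci, hi in zip(c, state)))
    return outputs


# Feed a single impulse and compare sparse vs. dense event conditions.
impulse = [1.0, 0.0, 0.0, 0.0]
sparse = event_adaptive_scan(impulse, [0.0] * 4, a_diag=[-1.0], b=[1.0], c=[1.0])
dense = event_adaptive_scan(impulse, [1.0] * 4, a_diag=[-1.0], b=[1.0], c=[1.0])
# Fraction of the impulse response remaining after three more steps.
print(sparse[3] / sparse[0], dense[3] / dense[0])
```

Under this assumed rule, a larger step makes `A_bar = exp(dt * a)` smaller. Older state therefore decays faster when events are dense and persists longer when they are sparse: roughly 0.74 of the impulse response remains after three steps in the sparse case, versus about 0.41 in the dense case. The summary does not say whether the paper modulates the step size, the transition matrix directly, or some other quantity.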

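In the same spirit, the sketch below shows one way a gated projection fusion step could be wired. It assumes a linear projection from RGB features into the event feature space and a scalar sigmoid gate computed from event density and RGB confidence. All names (`gated_projection_fusion`, `w_conf`, `w_density`) and the residual fusion rule are hypothetical. In a trained model, the projection and gate weights would be learned rather than fixed as they are here.

```python
import math
from typing import List, Sequence, Tuple


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def project(weights: Sequence[Sequence[float]], vec: Sequence[float]) -> List[float]:
    """Linear map: each row of `weights` yields one output feature."""
    return [sum(w * v for w, v in zip(row, vec)) for row in weights]


def gated_projection_fusion(
    rgb_feat: Sequence[float],
    event_feat: Sequence[float],
    proj_weights: Sequence[Sequence[float]],
    event_density: float,
    rgb_confidence: float,
    w_conf: float = 4.0,
    w_density: float = 4.0,
    bias: float = 0.0,
) -> Tuple[List[float], float]:
    """Return (fused event-space features, gate value in (0, 1))."""
    # Project RGB features into the event feature space.
    projected = project(proj_weights, rgb_feat)
    if len(projected) != len(event_feat):
        raise ValueError("projection must output the event feature size")
    # Hypothetical gate: inject more RGB when RGB is confident and events are sparse.
    gate = sigmoid(w_conf * rgb_confidence - w_density * event_density + bias)
    # Residual fusion: event features plus the gated RGB contribution.
    fused = [e + gate * p for e, p in zip(event_feat, projected)]
    return fused, gate


rgb = [1.0, 2.0, 3.0]
evt = [0.5, -0.5]
W = [[1.0, 0.0, 0.0], [0.0, 0.0, 1.0]]  # maps 3-D RGB features to 2-D event space
fused_sparse, g_sparse = gated_projection_fusion(rgb, evt, W, event_density=0.1, rgb_confidence=0.9)
fused_dense, g_dense = gated_projection_fusion(rgb, evt, W, event_density=0.9, rgb_confidence=0.2)
print(round(g_sparse, 2), round(g_dense, 2))
```

With these assumed weights, the gate opens to about 0.96 when RGB is confident and events are sparse. It nearly closes, to about 0.06, when events are dense and RGB confidence is low, so the event features dominate the fused output.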
Related Articles

The AI Hype Cycle Is Lying to You About What to Learn

Dev.to

Big Tech firms are accelerating AI investments and integration, while regulators and companies focus on safety and responsible adoption.

Dev.to

Inside NVIDIA’s $2B Marvell Deal: What NVLink Fusion Means for AI Ethernet Fabrics

Dev.to

Automating Your Literature Review: From PDFs to Data with AI

Dev.to

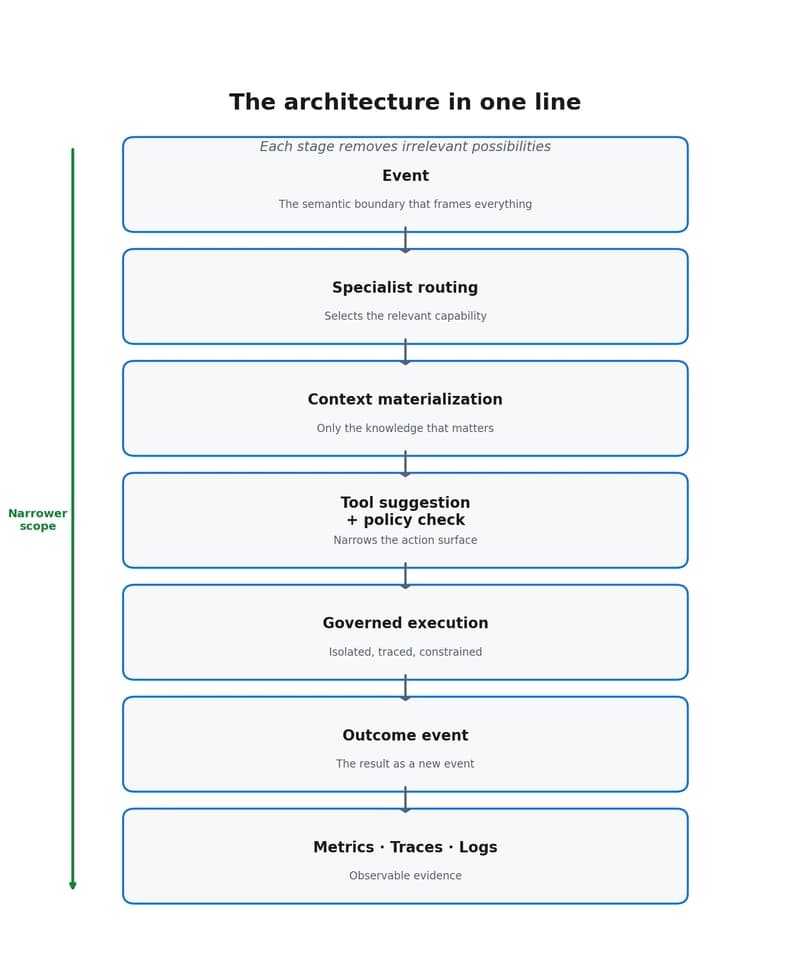

Why event-driven agents reduce scope, cost, and decision dispersion

Dev.to